A Mixture Model for Learning Sparse Representations

New English Course 4: Answers to the After-Class Exercises [Complete Edition]

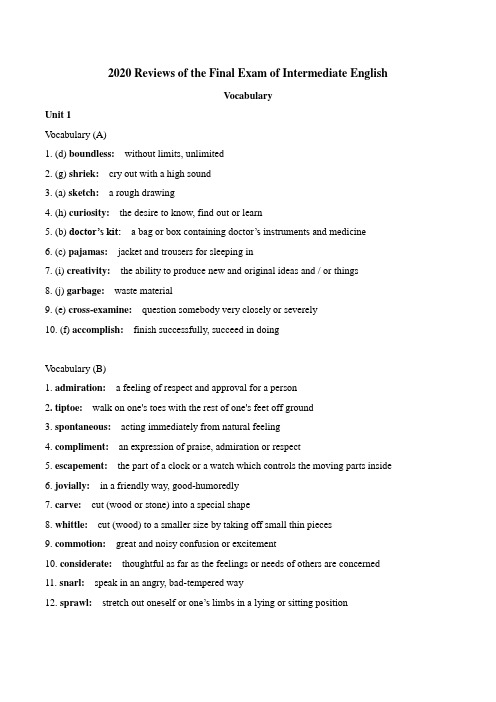

2020 Reviews of the Final Exam of Intermediate English

Vocabulary

Unit 1

Vocabulary (A)
1. (d) boundless: without limits, unlimited
2. (g) shriek: cry out with a high sound
3. (a) sketch: a rough drawing
4. (h) curiosity: the desire to know, find out or learn
5. (b) doctor’s kit: a bag or box containing doctor’s instruments and medicine
6. (c) pajamas: jacket and trousers for sleeping in
7. (i) creativity: the ability to produce new and original ideas and/or things
8. (j) garbage: waste material
9. (e) cross-examine: question somebody very closely or severely
10. (f) accomplish: finish successfully, succeed in doing

Vocabulary (B)
1. admiration: a feeling of respect and approval for a person
2. tiptoe: walk on one's toes with the rest of one's feet off the ground
3. spontaneous: acting immediately from natural feeling
4. compliment: an expression of praise, admiration or respect
5. escapement: the part of a clock or a watch which controls the moving parts inside
6. jovially: in a friendly way, good-humoredly
7. carve: cut (wood or stone) into a special shape
8. whittle: cut (wood) to a smaller size by taking off small thin pieces
9. commotion: great and noisy confusion or excitement
10. considerate: thoughtful as far as the feelings or needs of others are concerned
11. snarl: speak in an angry, bad-tempered way
12. sprawl: stretch out oneself or one’s limbs in a lying or sitting position

Unit 2

Vocabulary (A)
1. pray: speak (usually silently) to God, showing love, giving thanks or asking for something
2. was escorted: was taken
3. moan: low sound of pain or suffering
4. dire: terrible
5. knelt: went down and/or remained on the knees
6. jet-black: very dark or shiny black
7. rocked: shook or moved gently
8. serenely: calmly or peacefully
9. grin: smile broadly
10. deceive: make sb. believe sth. that is false

Vocabulary (B)
1. preach: give a religious talk, usually as part of a service in church
2. by leaps and bounds: very quickly
3. rhythmical: marked by regular succession of weak and strong stresses, accents, movements
4. sermon: a talk usually based on a sentence or “verse” from the Bible and preached as part of a church service
5. braided: twisted together into one plait
6. work-gnarled: twisted, with swollen joints and rough skin as from hard work or old age
7. rounder: a person who lives a vicious life, a habitual drunkard
8. take his (i.e., God's) name in vain: use God's name in cursing, speak of God without respect
9. punctuate: interrupt from time to time with sth.
10. ecstatic: causing great joy and happiness

Unit 3

Vocabulary (A)
1. contend: argue, claim
2. mutilation: destruction
3. purchase: buying
4. possession: ownership
5. transfer: move from one place to another
6. dog-eared: having the corners of the pages turned up or down with use so that they look like a dog's ears
7. intact: whole because no part has been touched or spoilt
8. indispensable: absolutely essential
9. scratch pad: loosely joined sheets of paper (a pad) for writing notes
10. sacred: to be treated with great respect

Vocabulary (B)
1. bluntly: plainly, directly
2. restrain: hold back (from doing sth.)
3. dilapidated: broken and old; falling to pieces
4. scribble: write hastily or carelessly
5. unblemished: not spoiled, as new
6. crayon: pencil of soft colored chalk or wax, used for drawing
7. symphony: a musical work for a large group of instruments
8. typography: the arrangement, style and appearance of printed matter
9. humility: humble state of mind
10. receptacle: a container

Unit 4

Vocabulary (A)
1. (c) zip off: move away with speed
2. (f) unencumbered: not obstructed
3. (j) nifty: clever
4. (a) loose: let out
5. (d) noodle around: play about
6. (b) span: extend across
7. (h) debut: make first public appearance
8. (e) the élite: a group of people with a high professional or social level
9. (g) juncture: a particular point in time
10. (i) sparse: inadequately furnished

Vocabulary (B)
1. exotic: striking or unusual in appearance
2. hack: a person paid to do hard and uninteresting work
3. stint: fixed amount of work
4. random: chance, unplanned, unlooked for
5. reside: be present (in some place)
6. access: the opportunity or right to use or see sth.
7. cobble: put together quickly or roughly
8. lingua franca: language or way of communicating which is used by people who do not speak the same native language
9. quintessential: the most typical
10. unconventionally: doing things not in the accepted way
11. compromise: sth. that is midway between two different things
12. cash in on: profit from; turn to one's advantage

Unit 5

Vocabulary (A)
1. radiate: send out (light) in all directions
2. appreciate: understand fully
3. outweigh: are greater than
4. hemmed in: surrounded
5. habitation: a place to live in
6. obscure: make difficult to see
7. shatter: break suddenly into small pieces
8. haul up: pull up with some effort
9. pore: very small opening in the skin through which sweat may pass
10. unveiling: discovering, learning about

Vocabulary (B)
1. distinctive: clearly marking a person or thing as different from others
2. spectacular: striking, out of the ordinary, amazing to see
3. phenomenon: thing in nature as it appears or is experienced by the senses
4. tenure: right of holding (land)
5. tempestuous: very rough, stormy
6. inclined: likely, tending to, accustomed to
7. precipitation: (the amount of) rainfall, snow etc. which has fallen onto the ground
8. disintegrate: break up into small particles or pieces, come apart
9. granules: small pieces like fine grains
10. mercury: a heavy silver-white metal which is liquid at ordinary temperature and is used in scientific instruments such as thermometers
11. disrupt: upset, disturb
12. cushion: padding

Unit 6

Vocabulary (A)
1. (f) brush house: house made of small branches
2. (i) pulsing and vibrating: beating steadily (as the heart does) and moving rapidly; here “active”, “alert”
3. (b) strangle out: get the words out with difficulty in their keenness to speak
4. (j) sting: a wound in the skin caused by the insect
5. (e) giggle: laugh, not heartily, but often in a rather embarrassed way
6. (a) alms-giver: person who gives money, food and clothes to poor people (NB: now a rather old-fashioned concept)
7. (c) residue: that which remains after a part disappears, or is taken or used (here, a metaphor using a chemical term)
8. (d) lust: very strong, obsessive desire
9. (h) withheld: deliberately refused
10. (g) venom: (liquid) poison

Vocabulary (B)
1. scramble: move, possibly climbing, quickly and often with some difficulty
2. dart: move forward suddenly and quickly
3. panting: breathing quickly
4. foaming: forming a white mass of small air bubbles
5. baptize: perform the Christian religious ceremony of baptism, i.e., of acceptance into the Christian Church
6. judicious: with good judgment
7. fat hammocks: (here) the doctor’s thick eyelids
8. cackle: laugh or talk loudly and unpleasantly
9. semblance: appearance, seeming likeness
10. squint: look with almost closed eyes
11. speculation: thoughts of possible profits
12. distillate: product of distillation

Paraphrase

Unit 1

1. Pretty clearly, anyone who followed my collection of rules would be blessed with a richer life, boundless love from his family and the admiration of the community.
Para.: Quite obviously, anyone who was determined to be guided by the rules of self-improvement I collected would be happy and have a richer life, infinite affection from his family and the love and respect of the community.
Translation: Quite obviously, whoever follows the rules I have collected will enjoy a rich and colorful life, including boundless love from the family and the envy and admiration of the neighbors.

Principles of Artificial Intelligence MOOC: Exercises and Answers (Peking University, Wang Wenmin)

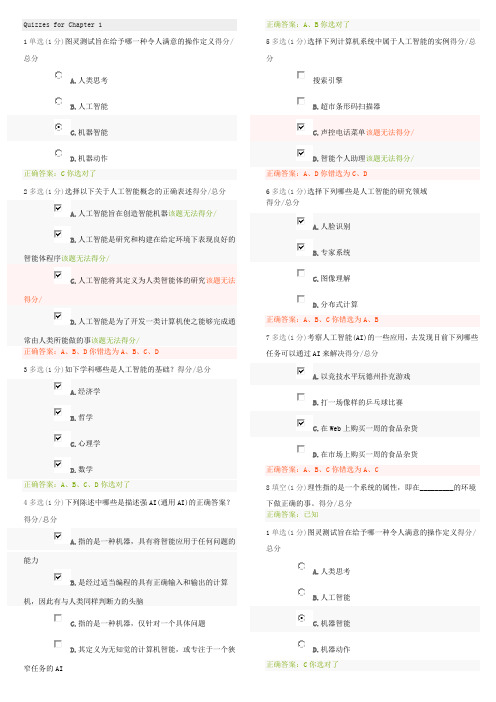

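Quizzes for Chapter 1

1. Single choice (1 point). The Turing Test is intended to give a satisfactory operational definition of which of the following?
A. Human thinking; B. Artificial intelligence; C. Machine intelligence; D. Machine action
Correct answer: C. You chose correctly.

2. Multiple choice (1 point). Select the correct statements about the concept of artificial intelligence.
A. AI aims to create intelligent machines; B. AI is the study and construction of agent programs that perform well in a given environment; C. AI defines itself as the study of human agents; D. AI aims to develop a class of computers that can do things normally done by people
Correct answer: A, B, D. You chose: A, B, C, D (incorrect).

3. Multiple choice (1 point). Which of the following disciplines are foundations of AI?
A. Economics; B. Philosophy; C. Psychology; D. Mathematics
Correct answer: A, B, C, D. You chose correctly.

4. Multiple choice (1 point). Which of the following statements correctly describe strong AI (general AI)?
A. A machine with the ability to apply intelligence to any problem; B. A suitably programmed computer with the right inputs and outputs, which therefore has a mind with the same judgment as a human; C. A machine aimed at only one specific problem; D. Non-sentient computer intelligence, or AI focused on one narrow task
Correct answer: A, B. You chose correctly.

5. Multiple choice (1 point). Select the computer systems below that are examples of AI.
A. Search engine; B. Supermarket barcode scanner; C. Voice-controlled phone menu; D. Intelligent personal assistant
Correct answer: A, D. You chose: C, D (incorrect).

6. Multiple choice (1 point). Select which of the following are research areas of AI.
A. Face recognition; B. Expert systems; C. Image understanding; D. Distributed computing
Correct answer: A, B, C. You chose: A, B (incorrect).

7. Multiple choice (1 point). Looking at some applications of AI, find out which of the following tasks can currently be solved by AI.
A. Playing Texas Hold'em poker at a competitive level; B. Playing a decent game of table tennis; C. Buying a week's worth of groceries on the Web; D. Buying a week's worth of groceries at the market
Correct answer: A, B, C. You chose: A, C (incorrect).

8. Fill in the blank (1 point). Rationality is a property of a system: doing the right thing in a _________ environment.
Correct answer: known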

2009 Fields of Experts (Stefan Roth et al.)

Int J Comput Vis (2009) 82: 205–229
DOI 10.1007/s11263-008-0197-6

Fields of Experts

Stefan Roth · Michael J. Black

Received: 22 January 2008 / Accepted: 17 November 2008 / Published online: 24 January 2009
© Springer Science+Business Media, LLC 2009

Abstract We develop a framework for learning generic, expressive image priors that capture the statistics of natural scenes and can be used for a variety of machine vision tasks. The approach provides a practical method for learning high-order Markov random field (MRF) models with potential functions that extend over large pixel neighborhoods. These clique potentials are modeled using the Product-of-Experts framework that uses non-linear functions of many linear filter responses. In contrast to previous MRF approaches all parameters, including the linear filters themselves, are learned from training data. We demonstrate the capabilities of this Field-of-Experts model with two example applications, image denoising and image inpainting, which are implemented using a simple, approximate inference scheme. While the model is trained on a generic image database and is not tuned toward a specific application, we obtain results that compete with specialized techniques.

Keywords Markov random fields · Low-level vision · Image modeling · Learning · Image restoration

The work for this paper was performed while S.R. was at Brown University.

S. Roth
Department of Computer Science, TU Darmstadt, Darmstadt, Germany
e-mail: sroth@cs.tu-darmstadt.de

M.J. Black
Department of Computer Science, Brown University, Providence, RI, USA
e-mail: black@

1 Introduction

The need for prior models of image or scene structure occurs in many machine vision and graphics problems including stereo, optical flow, denoising, super-resolution, image-based rendering, volumetric surface reconstruction, and texture synthesis, to name a few. Whenever one has “noise” or uncertainty, prior models of images (or depth maps, flow fields, three-dimensional volumes, etc.) come into play.
Here we develop a method for learning priors for low-level vision problems that can be used in many standard vision, graphics, and image processing algorithms. The key idea is to formulate these priors as a high-order Markov random field (MRF) defined over large neighborhood systems. This is facilitated by exploiting ideas from sparse image patch representations. The resulting Field of Experts (FoE) models the prior probability of an image, or other low-level representation, in terms of a random field with overlapping cliques, whose potentials are represented as a Product of Experts (Hinton 1999). While this model applies to a wide range of low-level representations, this paper focuses on its applications to modeling images. In other work (Roth and Black 2007b) we have already studied the application to modeling vector-valued optical flow fields; other potential applications will be discussed in more detail below.

Fig. 1 Image restoration using a Field of Experts. (a) Image from the Corel database with additive Gaussian noise (σ = 15, PSNR = 24.63 dB). (b) Image denoised using a Field of Experts (PSNR = 30.72 dB). (c) Original photograph with scratches. (d) Image inpainting using the FoE model.

To study the application of Fields of Experts to modeling natural images, we train the model on a standard database of natural images (Martin et al. 2001) and develop a diffusion-like scheme that exploits the prior for approximate Bayesian inference. To demonstrate the power of the FoE model, we use it in two different applications: image denoising and image inpainting (Bertalmío et al. 2000) (i.e., filling in missing pixels in an image). Despite the generic nature of the prior and the simplicity of the approximate inference, we obtain results near the state of the art that, until now, were not possible with MRF approaches. Figure 1 illustrates the application of the FoE model to image denoising and image inpainting. We perform a detailed analysis of various aspects of the model and use image denoising as a running example
for quantitative comparisons with the state of the art. We also provide quantitative results for the problem of image inpainting.

Modeling image priors is challenging due to the high dimensionality of images, their non-Gaussian statistics, and the need to model correlations in image structure over extended image neighborhoods. It has often been observed that, for a wide variety of linear filters, the marginal filter responses are non-Gaussian, and that the responses of different filters are usually not independent (Huang and Mumford 1999; Srivastava et al. 2002; Portilla et al. 2003).

As discussed in more detail below, there have been a number of attempts to overcome these difficulties and to model the statistics of small image patches as well as of entire images. Image patches have been modeled using a variety of sparse coding approaches or other sparse representations (Olshausen and Field 1997; Teh et al. 2003). Many of these models, however, do not easily generalize to models for entire images, which has limited their impact for machine vision applications. Markov random fields, on the other hand, can be used to model the statistics of entire images (Geman and Geman 1984; Besag 1986). They have been widely used in machine vision, but often exhibit serious limitations. In particular, MRF priors typically exploit hand-crafted clique potentials and small neighborhood systems, which limit the expressiveness of the models and only crudely capture the statistics of natural images. A notable exception to this is the FRAME model by Zhu et al. (1998), which learns clique potentials for larger neighborhoods from training data by modeling the responses of a set of predefined linear filters.

The goal of the current paper is to develop a framework for learning expressive yet generic prior models for low-level vision problems. In contrast to example-based approaches, we develop a parametric representation that uses examples for training, but does not rely on examples as part of the representation. Such a
parametric model has advantages over example-based methods in that it generalizes better beyond the training data and allows for the use of more elegant optimization methods. The core contribution is to extend Markov random fields beyond FRAME by modeling the local field potentials with learned filters. To do so, we exploit ideas from the Product-of-Experts (PoE) framework (Hinton 1999), which is a generic method for learning high-dimensional probability distributions. Previous efforts to model images using Products of Experts (Teh et al. 2003) were patch-based and hence inappropriate for learning generic priors for images or other low-level representations of arbitrary size. We extend these methods, yielding a translation-invariant prior. The Field-of-Experts framework provides a principled way to learn MRFs from examples, and the improved modeling power makes them practical for complex tasks.¹

¹This paper is an extended version of Roth and Black (2005).

2 Background and Previous Work

Formal models of image or scene structure play an important role in many vision problems where ambiguity, noise, or missing sensor data make the recovery of world or image structure difficult or impossible. Models of a priori structure are used to resolve, or regularize, such problems by providing additional constraints that impose prior assumptions or knowledge. For low-level vision applications the need for modeling such prior knowledge has long been recognized (Geman and Geman 1984; Poggio et al. 1985), for example due to their frequently ill-posed nature. Often these models entail assuming spatial smoothness or piecewise-smoothness of various image properties. While there are many ways of imposing prior knowledge, we focus here on probabilistic prior models, which have a long history and provide a rigorous framework within which to combine different sources of information. Other regularization methods, such as deterministic ones, including variational approaches (Poggio et al. 1985), will only be
discussed briefly.

For problems in low-level vision, such probabilistic prior models of the spatial structure of images or scene properties are often formulated as Markov random fields (MRFs) (Wong 1968; Kashyap and Chellappa 1981; Geman and Geman 1984; Besag 1986; Marroquin et al. 1987; Szeliski 1990) (see Li 2001 for a recent overview and introduction). Markov random fields have found many areas of application including image denoising (Sebastiani and Godtliebsen 1997), stereo (Sun et al. 2003), optical flow estimation (Heitz and Bouthemy 1993), and texture classification (Varma and Zisserman 2005), to name a few. MRFs are undirected graphical models, where the nodes of the graph represent random variables which, in low-level vision applications, typically correspond to image measurements such as pixel intensities, range values, surface normals, or optical flow vectors. Formally, we let the image measurements $\mathbf{x}$ be represented by nodes $V$ in a graph $G = (V, E)$, where $E$ are the edges connecting nodes. The edges between the nodes indicate the factorization structure of the probability density $p(\mathbf{x})$ described by the MRF. More precisely, the maximal cliques $\mathbf{x}_{(k)}$, $k = 1, \ldots, K$ of the graph directly correspond to factors of the probability density. The Hammersley-Clifford theorem (Moussouris 1974) establishes that we can write the probability density of this graphical model as a Gibbs distribution

$$p(\mathbf{x}) = \frac{1}{Z} \exp\Big(-\sum_{k} U_k(\mathbf{x}_{(k)})\Big), \qquad (1)$$

where $\mathbf{x}$ is an image, $U_k(\mathbf{x}_{(k)})$ is the so-called potential function for clique $\mathbf{x}_{(k)}$, and $Z$ is a normalizing term called the partition function. In many cases, it is reasonably assumed that the MRF is homogeneous; i.e., the potential function is the same for all cliques (or in other terms $U_k(\mathbf{x}_{(k)}) = U(\mathbf{x}_{(k)})$). This property gives rise to the translation-invariance of an MRF model for low-level vision applications.² Equivalently, we can also write the density under this model as

$$p(\mathbf{x}) = \frac{1}{Z} \prod_{k} f_k(\mathbf{x}_{(k)}), \qquad (2)$$

which makes the factorization structure of the model even more explicit. Here, $f_k(\mathbf{x}_{(k)})$ are the factors defined on clique
$\mathbf{x}_{(k)}$, which, in an abuse of terminology, we also sometimes call potentials.

²When we talk about translation-invariance, we disregard the fact that the finite size of the image will make this property hold only approximately.

Because of the regular structure of images, the edges of the graph are usually chosen according to some regular neighborhood structure. In almost all cases, this neighborhood structure is chosen a priori by hand, although the type of edge structure and the choice of potentials vary substantially. The vast majority of models use a pairwise graph structure; each node (i.e., pixel) is connected to its 4 direct neighbors to the left, right, top, and bottom (Geman and Geman 1984; Besag 1986; Sebastiani and Godtliebsen 1997; Tappen et al. 2003; Neher and Srivastava 2005). This induces a so-called pairwise MRF, because the maximal cliques are simply pairs of neighboring nodes (pixels), and hence each potential is a function of two pixel values:

$$p(\mathbf{x}) = \frac{1}{Z} \exp\Big(-\sum_{(i,j) \in E} U(x_i, x_j)\Big). \qquad (3)$$

Moreover, the potential is typically defined in terms of some robust function of the difference between neighboring pixel values

$$U(x_i, x_j) = \rho(x_i - x_j), \qquad (4)$$

where a typical ρ-function is shown in Fig. 2. The truncated quadratic ρ-function in Fig. 2 allows spatial discontinuities by not heavily penalizing large neighbor differences.

The difference between neighboring pixel values also has an intuitive interpretation, as it approximates a horizontal or vertical image derivative. The robust function can thus be understood as modeling the statistics of the first derivatives of the images. These statistics, as well as the study of the statistics of natural images in general, have received a lot of attention in the literature (Ruderman 1994; Olshausen and Field 1996; Huang and Mumford 1999; Srivastava et al. 2003). A review of this literature is well beyond the scope of this paper, and the reader is thus referred to the above papers for an overview.

Despite their long history, MRF methods have often produced disappointing results when
applied to the recovery of complex scene structure. One of the reasons for this is that the typical pairwise model structure severely restricts the image structures that can be represented. In the majority of the cases, the potentials are furthermore hand-defined, and consequently are only ad hoc models of image or scene structure. The resulting probabilistic models typically do not represent the statistical properties of natural images and scenes well, which leads to poor application performance. For example, Fig. 2 shows the result of using a pairwise MRF model with a truncated quadratic potential function to remove noise from an image. The estimated image is characteristic of many MRF results; the robust potential function produces sharp boundaries, but the result is piecewise smooth and does not capture the more complex textural properties of natural scenes.

Fig. 2 Typical pairwise MRF potential and results: (a) Example of a common robust potential function (negative log-probability). This truncated quadratic is often used to model piecewise smooth surfaces. (b) Image with Gaussian noise added. (c) Typical result of denoising using an ad-hoc pairwise MRF (obtained using the method of Felzenszwalb and Huttenlocher 2004). Note the piecewise smooth nature of the restoration and how it lacks the textural detail of natural scenes.

For some years it was unclear whether the limited application performance of pairwise MRFs was due to limitations of the model, or due to limitations of the optimization approaches used with non-convex models. Yanover et al. (2006) have recently obtained global solutions to low-level vision problems even with non-convex pairwise MRFs.
Their results indicate that pairwise models are incapable of producing very high-quality solutions for stereo problems and suggest that richer models are needed for low-level modeling.

Gimel’farb (1996) proposes a model with multiple and more distant neighbors, which are able to model more complex spatial properties (see also Zalesny and van Gool 2001). Of particular note, this method learns the neighborhood structure that best represents a set of training data; in the case of texture modeling, different textures result in quite different neighborhood systems. This work, however, has been limited to modeling specific classes of image texture, and our experiments with modeling more diverse classes of generic image structure suggest these methods do not scale well beyond narrow, class-specific image priors.

2.1 High-Order Markov Random Fields

There have been a number of attempts to go beyond these very simple pairwise models, which only model the statistics of first derivatives in the image structure (Geman et al. 1992; Zhu and Mumford 1997; Zhu et al. 1998; Tjelmeland and Besag 1998; Paget and Longstaff 1998). The basic insight behind such high-order models is that the generality of MRFs allows for richer models through the use of larger maximal cliques. One approach uses the second derivatives of image structure. Geman and Reynolds (1992), for example, formulate MRF potentials using polynomials determined by the order of the (image) surface being modeled ($k = 1, 2, 3$ for constant, planar, or quadric).

In the context of this work, we think of these polynomials as defining linear filters, $J_i$, over local neighborhoods of pixels. For the quadric case, the corresponding 3×3 filters are shown in Fig. 3.

Fig. 3 Filters representing first and second order neighborhood systems (Geman and Reynolds 1992). The left two filters correspond to first derivatives, the right three filters to second derivatives.

In this example, the maximal cliques are square patches of 3×3 pixels and their corresponding potential for clique $\mathbf{x}_{(k)}$ centered at
pixel $k$ is written as

$$U(\mathbf{x}_{(k)}) = \sum_{i=1}^{5} \rho\big(J_i^{\mathsf{T}} \mathbf{x}_{(k)}\big), \qquad (5)$$

where the $J_i$ are the shown derivative filters. When $\rho$ is a robust potential, this corresponds to the weak plate model (Blake and Zisserman 1987).

The above models are capable of representing richer structural properties beyond the piecewise spatial smoothness of pairwise models, but have remained largely hand-defined. The designer decides what might be a good model for a particular problem and chooses a neighborhood system, the potential function, and its parameters.

2.2 Learning MRF Models

Hand selection of parameters is not only somewhat arbitrary and can cause models to only poorly capture the statistics of the data, but is also particularly cumbersome for models with many parameters. There exist a number of methods for learning the parameters of the potentials from training data (see Li 2001 for an overview). In the context of images, Besag (1986), for example, uses the pseudo-likelihood criterion to learn the parameters of a parametric potential function for a pairwise MRF from training data. Applying pseudo-likelihood in the high-order case is, however, hindered by the fact that computing the necessary conditionals is often difficult.

For Markov random field modeling in general (i.e., not specifically for vision applications), maximum likelihood (ML) (Geyer 1991) is probably the most widely used learning criterion. Nevertheless, due to its often extreme computational demands, it has long been avoided. Hinton (2002) recently proposed a learning rule for energy-based models, called contrastive divergence (CD), which resembles maximum likelihood, but allows for much more efficient computation. In this paper we apply contrastive divergence to the problem of learning Markov random field models of images; details will be discussed below. Other learning methods include iterative scaling (Darroch and Ratcliff 1972; della Pietra et al. 1997), score matching (Hyvärinen 2005), discriminative training of energy-based models (LeCun and Huang 2005), as well as a large
set of variational (and related) approximations to maximum likelihood (Jordan et al. 1999; Yedidia et al. 2003; Welling and Sutton 2005; Minka 2005).

In this work, Markov random fields are used to model prior distributions of images and potentially other scene properties, but in the literature, MRF models have also been used to directly model the posterior distribution for particular low-level vision applications. For these applications, it can be beneficial to train MRF models discriminatively (Ning et al. 2005; Kumar and Hebert 2006). This is not pursued here.

In low-level vision applications, most of these learning methods have not found widespread use. Nevertheless, maximum likelihood has been successfully applied to the problem of modeling images (Zhu and Mumford 1997; Descombes et al. 1999). One model that is of particular importance in the context of this paper is the FRAME model of Zhu et al. (1998). It took a step toward more practical MRF models, as it is of high order and allows its parameters to be learned from training data, for example from a set of natural images (Zhu and Mumford 1997). This method uses a “filter pursuit” strategy to select filters from a pre-defined set of standard image filters; the potential functions model the responses of these filters using a flexible, discrete, non-parametric representation. The discrete nature of this representation complicates its use, and, while the method exhibited good results for texture synthesis, the reported image restoration results appear to fall below the current state of the art.

To model more complex local statistics, a number of authors have turned to empirical probabilistic models captured by a database of image patches. Freeman et al. (2000) propose an MRF model that uses example image patches and a measure of consistency between them to model scene structure. This idea has been exploited as a prior model for image-based rendering (Fitzgibbon et al. 2003) and super-resolution (Pickup et al. 2004). The roots of these models are in
example-based texture synthesis (Efros and Leung 1999).

In contrast, our approach uses parametric (and differentiable) potential functions applied to filter responses. Unlike the FRAME model, we learn the filters themselves as well as the parameters of the potential functions. As we will show, the resulting filters appear quite different from standard filters and achieve better performance than do standard filters in a variety of tasks. A computational advantage of our parametric model is that it is differentiable, which facilitates various learning and inference methods.

2.3 Inference

To apply MRF models to actual problems in low-level vision, we compute a solution using tools from probabilistic inference. Inference in this context typically means either performing maximum a posteriori (MAP) estimation, or computing expectations over the solution space. Common to all MRF models in low-level vision is the fact that inference is challenging, both algorithmically and computationally. The loopy structure of the underlying graph makes exact inference NP-hard in the general case, although special cases exist where polynomial time algorithms are known. Because of that, inference is usually performed in an approximate fashion, for which there is a wealth of different techniques. Classical techniques include Gibbs sampling (Geman and Geman 1984), deterministic annealing (Hofmann et al. 1998), and iterated conditional modes (Besag 1986).
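Iterated conditional modes, the last of the classical techniques listed, can be sketched in a few lines. This is a minimal illustration, not the inference method used in this paper: it assumes a small discrete label set, a Gaussian data term with noise level `sigma`, and a truncated-quadratic pairwise potential like the one in Fig. 2, weighted by `lam` and truncated at `tau`. All function names and parameter values here are hypothetical choices made for the example.

```python
# Minimal ICM sketch for a 4-connected pairwise MRF (hypothetical example).
# Assumptions: discrete labels, Gaussian data term, truncated-quadratic prior
# rho(d) = min(d^2, tau) in the spirit of Fig. 2; parameter values are illustrative.


def rho(d, tau):
    """Truncated quadratic: a large neighbor difference costs at most tau."""
    return min(d * d, tau)


def local_energy(x, y, r, c, label, sigma, lam, tau):
    """All energy terms that involve pixel (r, c) when it takes value `label`."""
    rows, cols = len(x), len(x[0])
    e = (label - y[r][c]) ** 2 / (2.0 * sigma ** 2)  # data (likelihood) term
    for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):  # 4-neighborhood
        rr, cc = r + dr, c + dc
        if 0 <= rr < rows and 0 <= cc < cols:
            e += lam * rho(label - x[rr][cc], tau)
    return e


def total_energy(x, y, sigma, lam, tau):
    """Negative log-posterior up to a constant; each edge is counted once."""
    rows, cols = len(x), len(x[0])
    e = 0.0
    for r in range(rows):
        for c in range(cols):
            e += (x[r][c] - y[r][c]) ** 2 / (2.0 * sigma ** 2)
            if r + 1 < rows:
                e += lam * rho(x[r][c] - x[r + 1][c], tau)
            if c + 1 < cols:
                e += lam * rho(x[r][c] - x[r][c + 1], tau)
    return e


def icm(y, labels, sigma=1.0, lam=1.0, tau=4.0, max_sweeps=20):
    """Greedy coordinate-wise MAP: give each pixel its locally best label."""
    x = [row[:] for row in y]  # initialize with the observation
    for _ in range(max_sweeps):
        changed = False
        for r in range(len(x)):
            for c in range(len(x[0])):
                current = local_energy(x, y, r, c, x[r][c], sigma, lam, tau)
                best = min(labels, key=lambda lab: local_energy(x, y, r, c, lab, sigma, lam, tau))
                # Accept strict improvements only, so the total energy never increases.
                if local_energy(x, y, r, c, best, sigma, lam, tau) < current:
                    x[r][c] = best
                    changed = True
        if not changed:  # a full sweep without changes: a local minimum
            break
    return x


# Usage: a two-region image with one outlier pixel in each region.
clean = [[0, 0, 0, 4, 4, 4] for _ in range(4)]
noisy = [row[:] for row in clean]
noisy[1][1] = 4
noisy[2][4] = 0
restored = icm(noisy, labels=[0, 1, 2, 3, 4])
print(restored == clean)  # True for this example
```

The local energy collects every term of the total energy that depends on the updated pixel. Each accepted update therefore lowers the total energy, so ICM always terminates. The update is greedy, however, and in general it stops at a local minimum that depends on the initialization. In this toy case, the truncation at `tau` keeps the edge between the two regions intact while the isolated outliers are removed.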
More recently, algorithms based on graph cuts (Kolmogorov and Zabih 2004) have become very popular for MAP inference. Variational techniques and related ones, such as belief propagation (Yedidia et al. 2003), have also enjoyed enormous popularity, both for MAP inference and for computing marginals. Nevertheless, even with such modern approximate techniques, inference can be quite slow, which has prompted the development of models that simplify inference (Felzenszwalb and Huttenlocher 2004). While these may make inference easier, they typically give the answer to the wrong problem, as the model does not capture the relevant statistics well (cf. Fig. 2).

Inference in high-order MRF models is particularly demanding, because the larger size of the cliques complicates the (approximate) inference process. Because of that, we rely on very simple approximate inference schemes using the conjugate gradient method. Nevertheless, the applicability of more sophisticated inference techniques to models such as the one proposed here promises to be a fruitful area for future work (cf. Potetz 2007; Kohli et al. 2007).

2.4 Other Regularization Methods

It is worth noting that prior models of spatial structure are also often formulated as energy terms (e.g., log-probability) and used in non-probabilistic regularization methods (Poggio et al. 1985). While we pursue a probabilistic framework here, the methods are applicable to contexts where deterministic regularization methods are applied. This suggests that our FoE framework is applicable to a wide class of variational frameworks (see Schnörr et al. 1996 for a review of such techniques).

Interestingly, many of these deterministic regularization approaches, for example variational (Schnörr et al. 1996) or nonlinear-diffusion related methods (Weickert 1997), suffer from limitations very similar to those of typical MRF approaches.
This is because they penalize large image derivatives similarly to pairwise MRFs. Moreover, in order to show the existence of a unique global optimum, many models are restricted to be convex, and are furthermore mostly hand-defined. Non-convex regularizers often show superior performance in practice (Black et al. 1998), and the missing connection to the statistics of natural images or scenes can be viewed as problematic. There have been variational and diffusion-related approaches that try to overcome some of these limitations (Gilboa et al. 2004; Trobin et al. 2008).
2.5 Models of Image Patches
Even though typically motivated from an image-coding or neurophysiological point of view, there is a large amount of related work in the area of sparse coding and component analysis, which attempts to model complex image structure. Such models typically encode structural properties of images through a set of linear filter responses or components. For example, Principal Component Analysis (PCA) (Roweis and Ghahramani 1999) of image patches yields visually intuitive components, some of which resemble derivative filters of various orders and orientations. The marginal statistics of such filters are highly non-Gaussian (Ruderman 1994) and are furthermore not independent, making this model unsuitable for probabilistically modeling image patches.
Independent Component Analysis (ICA) (Bell and Sejnowski 1995), for example, assumes non-Gaussian statistics and finds the linear components such that the statistical dependence between the components is minimized. As opposed to the principal components, ICA yields localized components, which resemble Gabor filters of various orientations, scales, and locations. Since the components (i.e., filters) $J_i \in \mathbb{R}^n$ found by ICA are by assumption independent, one can define a probabilistic model of image patches $x \in \mathbb{R}^n$ by multiplying the marginal distributions, $p_i(J_i^T x)$, of the filter responses:
$$p(x) \propto \prod_{i=1}^{n} p_i(J_i^T x). \tag{6}$$
Notice that projecting an image patch onto a linear component ($J_i^T x$) is equivalent to filtering the patch with a linear filter described by $J_i$. However, in the case of image patches of $n$ pixels it is generally impossible to find $n$ fully independent linear components, which makes the ICA model only an approximation. Somewhat similar to ICA are sparse-coding approaches (e.g., Olshausen and Field 1996), which also represent image patches in terms of a linear combination of learned filters, but in a synthesis-based manner (see also Elad et al. 2006).
Most of these methods, however, focus on image patches and provide no direct way of modeling the statistics of whole images. Several authors have explored extending sparse coding models to full images. For example, Sallee and Olshausen (2003) propose a prior model for entire images, but inference with this model requires Gibbs sampling, which makes it somewhat problematic for many machine vision applications. Other work has integrated translation invariance constraints into the basis finding process (Hashimoto and Kurata 2000; Wersing et al. 2003). The focus in that work, however, remains on modeling the image in terms of a sparse linear combination of basis filters with an emphasis on the implications for human vision. Modeling entire images has also been considered in the context of image denoising (Elad and Aharon 2006). While these approaches are motivated in a way that is quite different from Markov random field approaches as emphasized here, they are similar in that they model the response to linear filters and even allow the filters themselves to be learned. Another difference is that the model of Elad and Aharon (2006) is not trained offline on a general database of natural images; instead, the parameters are inferred “online” in the context of the application at hand. While this may also have advantages, it makes, for example, the application to problems with missing data (e.g., inpainting) more difficult.
Popular approaches to modeling images also include wavelet-based methods (Portilla et al. 2003). Since neighboring wavelet coefficients
are not independent, it is beneficial to model their dependencies. This has, for example, been done in patches using Products of Experts (Gehler and Welling 2006) or over entire wavelet subbands using MRFs (Lyu and Simoncelli 2007). While such a modeling of the dependencies between wavelet coefficients bears similarities to the FoE model, these wavelet approaches do not directly yield generic image priors due to the fact that they model the coefficients of an overcomplete wavelet transform. Their applicability has thus mostly been restricted to specific applications, such as denoising.
2.6 Products of Experts
Products of Experts (PoE) (Hinton 1999) have also been used to model image patches (Welling et al. 2003; Teh et al.
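Equation (6) in Sect. 2.5 is straightforward to evaluate once the filters and marginals are fixed. The sketch below is a minimal illustration, not a learned model: the function name `ica_log_density`, the square invertible filter matrix `J` (filters stored as columns), and the Laplacian marginals with a shared `scale` are all assumptions chosen for simplicity. Its first step computes every filter response $J_i^T x$ at once, which is the filtering interpretation noted in the text.

```python
import numpy as np


def ica_log_density(x, J, scale=1.0):
    """Log of the product-of-marginals patch model, Eq. (6).

    Illustrative assumptions: J is a square, invertible n x n matrix whose
    columns are the filters J_i, and every marginal p_i is a zero-mean
    Laplacian with the same `scale`. For square invertible J, the
    normalizing constant hidden in the proportionality of Eq. (6) is |det J|
    (change of variables s = J^T x), so the returned value is a proper
    log-density.
    """
    x = np.asarray(x, dtype=float)
    responses = J.T @ x                               # s_i = J_i^T x, i.e. filtering the patch with J_i
    # Laplacian log-density: log p(s) = -|s| / scale - log(2 * scale).
    log_marginals = -np.abs(responses) / scale - np.log(2.0 * scale)
    _, log_abs_det = np.linalg.slogdet(J)             # log |det J|, computed stably
    return log_abs_det + log_marginals.sum()
```

With `J` equal to the identity, each filter picks out a single pixel and the model reduces to independent Laplacian pixels; a learned ICA basis would replace `J`, and other heavy-tailed marginals could replace the Laplacian term.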

National College English Test Band 4 (CET-4) Papers and Answer Guide (2025)

2025 National College English Test Band 4 (CET-4) Mock Paper and Answer Guide
Part I. Writing (15 points)
CET-4 Writing Section
Directions: For this part, you are allowed 30 minutes to write a short essay entitled “The Importance of Teamwork”. You should write at least 120 words but no more than 180 words.
Sample Essay: The Importance of Teamwork
In today’s fast-paced and highly competitive world, the concept of teamwork has become more crucial than ever. It is often said that one can go fast alone, but to go far, one must go together. This saying underlines the importance of teamwork in achieving common goals effectively and efficiently.
Teamwork allows for the pooling of diverse skills and talents, which leads to more innovative solutions and better decision-making. When individuals with different backgrounds and expertise collaborate, they bring unique perspectives to the table, fostering an environment where creativity thrives. Furthermore, working as a team builds a support system, enabling members to rely on each other during challenging times, thus reducing stress and increasing job satisfaction.
Another significant benefit of teamwork is the ability to accomplish tasks that would be impossible for an individual to handle. By dividing work among team members based on their strengths, teams can tackle complex projects, ensuring all aspects are thoroughly covered. This not only improves the quality of work but also speeds up its completion.
In conclusion, the value of teamwork cannot be overstated. It is through collaboration and mutual support that we can achieve great things, overcome obstacles, and reach our full potential. Embracing the spirit of teamwork is essential for both personal and professional success in our interconnected world.
Analysis:
• Introduction: The essay begins with a clear statement about the increasing significance of teamwork in the modern era, setting up the main argument.
• Body paragraphs:
  • The first body paragraph discusses how teamwork enhances innovation and decision-making by combining varied skills and viewpoints, and highlights its supportive role in reducing stress and increasing job satisfaction.
  • The second body paragraph explains how dividing labor according to individual strengths allows complex tasks to be completed thoroughly and efficiently.
• Conclusion: The concluding paragraph reinforces the thesis, summarizing the key benefits of teamwork and linking them to broader concepts of achievement and personal growth.
This sample maintains a coherent structure and supports each point with clear reasoning, making it a useful model for the CET-4 writing section. Note, however, that at a little over 210 words it exceeds the 180-word limit and would need trimming to comply with the directions.
Part II. Listening Comprehension: News Reports (multiple choice, 7 points)
Question 1
News Item 1:
A new study has found that the popularity of online shopping has led to a significant increase in the use of plastic packaging. The researchers analyzed data from various e-commerce platforms and discovered that the amount of plastic packaging used in online orders has doubled over the past five years.
This has raised concerns about the environmental impact of e-commerce and the need for more sustainable packaging solutions.
Questions:
1. What is the main issue addressed in the news?
A) The decline of traditional shopping methods.
B) The environmental impact of online shopping.
C) The growth of e-commerce platforms.
D) The advantages of plastic packaging.
2. According to the news, what has happened to the use of plastic packaging in online orders over the past five years?
A) It has decreased by 50%.
B) It has remained stable.
C) It has increased by 25%.
D) It has doubled.
3. What is the primary concern raised by the study regarding online shopping?
A) The increase in the number of e-commerce platforms.
B) The high cost of online shopping.
C) The environmental impact of plastic packaging.
D) The difficulty in returning products.
Answers:
1. B) The environmental impact of online shopping.
2. D) It has doubled.
3. C) The environmental impact of plastic packaging.
Question 2
Section B: Short News
In this section, you will hear one short news report. At the end of the news report, you will hear three questions. After each question, there is a pause. During the pause, you must read the four choices marked A), B), C) and D), and decide which is the best answer. Then mark the corresponding letter on the Answer Sheet with a single line through the center.
News Report:
The World Health Organization announced today that it has added the Chinese Sinovac COVID-19 vaccine to its list of vaccines approved for emergency use. This move will facilitate the distribution of the vaccine in lower-income countries participating in the COVAX initiative aimed at ensuring equitable access to vaccines globally.
The WHO praised the Sinovac vaccine for its easy storage requirements, making it ideal for areas with less sophisticated medical infrastructure.
Questions:
1. According to the news report, what did the WHO announce?
A) The end of the pandemic
B) Approval of a new vaccine
C) Launch of a global health campaign
D) Increased funding for vaccine research
Answer: B) Approval of a new vaccine
2. What was highlighted about the Sinovac vaccine by the WHO?
A) It is the most effective vaccine available
B) It requires simple storage conditions
C) It is cheaper than other vaccines
D) It has no side effects
Answer: B) It requires simple storage conditions
3. What is the purpose of the COVAX initiative mentioned in the report?
A) To speed up vaccine development
B) To provide financial support to vaccine manufacturers
C) To ensure equal access to vaccines worldwide
D) To promote travel between countries
Answer: C) To ensure equal access to vaccines worldwide
Part III. Listening Comprehension: Long Conversations (multiple choice, 8 points)
Question 1
Part Three: Long Conversations
In this section, you will hear one long conversation. After you hear a part of the conversation, there will be a pause. Both the conversation and the questions will be spoken only once. After you hear a question, you must choose the best answer from the four choices marked A), B), C), and D). Then mark the corresponding letter on Answer Sheet 2 with a single line through the center.
Now, listen to the conversation.
Conversational Excerpt:
M: Hey, Jane, how was your day at the office today?
W: Oh, it was quite a challenge. I had to deal with a lot of issues. But I think I handled them pretty well.
M: That’s good to hear. What were the main issues you faced?
W: Well, first, we had a problem with the new software we’re trying to implement. It seems to be causing some technical difficulties.
M: Oh no, that sounds frustrating. Did you manage to fix it?
W: Not yet. I’m still trying to figure out what’s wrong. But I’m working on it.
M: That’s important. The company can’t afford any downtime with this software.
W: Exactly. And then, I had to deal with a customer complaint. The customer was really upset because of a delayed shipment.
M: That’s never a good situation. How did you handle it?
W: I tried to be understanding and offered a discount on their next order. It seemed to calm them down a bit.
M: That was a good move. Did it resolve the issue?
W: Yes, it did. They’re satisfied now, and I think we’ve avoided a bigger problem.
M: It sounds like you had a busy day. But you did a good job handling everything.
W: Thanks, I’m glad you think so.
Questions:
1. What was the main issue the woman faced with the new software?
A) It was causing problems with the computer systems.
B) It was taking longer to install than expected.
C) It was causing technical difficulties.
D) It was not compatible with their existing systems.
2. How did the woman deal with the customer complaint?
A) She escalated the issue to her supervisor.
B) She offered a discount on the customer’s next order.
C) She apologized directly to the customer.
D) She sent the customer a refund check.
3. What was the woman’s impression of her day at work?
A) It was uneventful and unchallenging.
B) It was quite stressful but rewarding.
C) It was a day filled with unnecessary meetings.
D) It was a day where she didn’t accomplish much.
4. What did the man say about the woman’s day at work?
A) He thought it was unproductive.
B) He felt she had handled everything well.
C) He thought she should have asked for help.
D) He believed she should take a break.
Answers:
1. C
2. B
3. B
4. B
Question 2
Conversation:
Man: Hey, Sarah. I heard you’re planning to go on a trip next month. Where are you heading?
Sarah: Oh, hi, Mike! Yes, I’m really excited about it. I’m going to Japan. It’s my first time there.
Man: That sounds amazing! How long will you be staying? And what places are you planning to visit?
Sarah: I’ll be there for two weeks. My plan is to start in Tokyo and then travel to Kyoto, Osaka, and Hiroshima. I’ve always been fascinated by the mix of traditional and modern culture in Japan.
Man: Two weeks should give you plenty of time to see a lot. Are you going alone or with someone?
Sarah: Actually, I’m going with a group of friends from college. We all decided to take this trip together after graduation. It’ll be great to experience it with them.
Man: That’s wonderful! Do you have everything planned out, like accommodations and transportation?
Sarah: Mostly, yes. We’ve booked our flights and hotels, and we’re using the Japan Rail Pass for getting around. But we’re leaving some room for spontaneity too. Sometimes the best experiences come unexpectedly!
Man: Absolutely, that’s the spirit of traveling. Well, I hope you have an incredible time. Don’t forget to try some local food and maybe bring back some souvenirs!
Sarah: Thanks, Mike! I definitely won’t miss out on trying sushi and ramen, and I already have a list of gifts to buy for family and friends. I can’t wait to share my adventures with everyone when I get back.
1. How long is Sarah planning to stay in Japan?
A) One week
B) Two weeks
C) Three weeks
D) One month
Answer: B) Two weeks
2. Which of the following cities is NOT mentioned as part of Sarah’s itinerary?
A) Tokyo
B) Kyoto
C) Sapporo
D) Hiroshima
Answer: C) Sapporo
3. Who is Sarah going to Japan with?
A) By herself
B) With her family
C) With a group of friends
D) With coworkers
Answer: C) With a group of friends
4. What have Sarah and her friends prepared for their trip besides booking flights and hotels?
A) They have hired a personal guide.
B) They have reserved spots for cultural workshops.
C) They have purchased a Japan Rail Pass.
D) They have enrolled in a language course.
Answer: C) They have purchased a Japan Rail Pass.
Part IV. Listening Comprehension: Passages (multiple choice, 20 points)
Question 1
Section C
Directions: In this section, you will hear a passage three times. When the passage is read for the first time, listen carefully for its general idea.
When the passage is read for the second time, fill in the blanks with the exact words you have just heard. Finally, when the passage is read for the third time, check what you have written.
Passage:
In recent years, the concept of “soft skills” has become increasingly popular in the workplace. These are skills that are not traditionally taught in schools but are essential for success in the professional world. Soft skills include communication, teamwork, problem-solving, and time management.
1. Many employers believe that soft skills are just as important as technical skills because they help employees adapt to changing work environments.
2. One of the most important soft skills is communication. Effective communication can prevent misunderstandings and improve relationships with colleagues.
3. Teamwork is also crucial in today’s workplace. Being able to work well with others can lead to better productivity and innovation.
4. Problem-solving skills are essential for overcoming obstacles and achieving goals. Employees who can think creatively and solve problems efficiently are highly valued.
5. Time management is another key soft skill. Being able to prioritize tasks and manage time effectively can help employees meet deadlines and reduce stress.
Questions:
1. What is the main idea of the passage?
A) The importance of technical skills in the workplace.
B) The definition and examples of soft skills.
C) The increasing popularity of soft skills in the workplace.
D) The impact of soft skills on employee performance.
2. Why do many employers believe soft skills are important?
A) They are easier to teach than technical skills.
B) They are not necessary for most jobs.
C) They help employees adapt to changing work environments.
D) They are more difficult to acquire than technical skills.
3. Which of the following is NOT mentioned as a soft skill in the passage?
A) Communication.
B) Leadership.
C) Problem-solving.
D) Time management.
Answers:
1. C) The increasing popularity of soft skills in the workplace.
2. C) They help employees adapt to changing work environments.
3. B) Leadership.
Question 2: Listening Comprehension - Passage Questions
Listen to the following passage carefully and then answer the questions.
Passage:
Every year, millions of people flock to beaches around the world for their vacations. While enjoying the sun and sand, few give much thought to the tiny organisms that make up the very sand they’re lying on. Sand is actually made from rock particles that have been broken down over time by natural processes. However, on some unique beaches, like those found in Hawaii, the sand has a significant component of coral and shell fragments, giving it a distinctive white color.
Beaches not only provide relaxation but also play a crucial role in supporting marine life and protecting coastal areas from erosion.
Questions:
1. What do millions of people go to the beaches for annually?
2. What makes the sand on Hawaiian beaches distinctive?
3. Besides providing relaxation, what other important role do beaches serve?
Answers:
1. Vacations.
2. The presence of coral and shell fragments.
3. Supporting marine life and protecting coastal areas from erosion.
Question 3
Passage
The rise of e-commerce has revolutionized the way we shop. With just a few clicks, customers can purchase products from all over the world and have them delivered to their doorstep. However, this convenience has also brought about some challenges, particularly in terms of logistics and environmental impact.
One of the biggest concerns is the environmental impact of packaging. Traditional packaging materials, such as plastic bags and boxes, are not biodegradable and often end up in landfills, contributing to pollution. E-commerce companies have started to address this issue by offering packaging-free options and promoting the use of sustainable materials.
Another challenge is the issue of returns. With the ease of online shopping, customers often order more items than they need, leading to a high rate of returns. This not only increases the carbon footprint of shipping but also creates additional waste. Some companies have introduced policies to encourage customers to return fewer items, such as offering incentives for reuse or donation.
Despite these challenges, the e-commerce industry is not standing still. There are innovative solutions being developed to make the process more sustainable. For example, some companies are experimenting with drone delivery to reduce the number of vehicles on the road. Others are investing in energy-efficient data centers to power their operations.
1. What is one of the main concerns related to e-commerce packaging?
A) The high cost of shipping materials.
B) The environmental impact of non-biodegradable materials.
C) The difficulty in recycling packaging materials.
2. How does the high rate of returns affect e-commerce?
A) It increases the demand for new packaging materials.
B) It leads to a decrease in the cost of shipping.
C) It creates additional waste and increases the carbon footprint.
3. What is an innovative solution being developed to make e-commerce more sustainable?
A) The use of reusable packaging.
B) The implementation of strict return policies.
C) The introduction of drone delivery.
Answers:
1. B
2. C
3. C
Part V. Reading Comprehension: Vocabulary (fill in the blanks, 5 points)
Question 1
Passage:
In today’s fast-paced world, conservation has become a major concern for environmentalists and policymakers alike. Preserving natural resources is not just about protecting the environment; it also plays a critical role in ensuring sustainable development and improving the quality of life for future generations.
Innovative methods are being explored to achieve this goal, including the use of renewable energy sources and promoting eco-friendly practices in industries.
Questions:
1. The word “conservation” in the passage most likely means:
A) The act of using something economically or sparingly.
B) The protection of natural resources from being wasted.
C) The process of changing something fundamentally.
D) The act of restoring something to its original state.
Answer: B) The protection of natural resources from being wasted.
2. The word “innovative” in the passage is closest in meaning to:
A) Outdated.
B) Traditional.
C) Creative.
D) Unchanged.
Answer: C) Creative.
3. Based on the context, the term “eco-friendly” would be best described as:
A) Practices that are harmful to the environment.
B) Practices that are beneficial to the environment.
C) Practices that have no impact on the environment.
D) Practices that focus solely on economic growth.
Answer: B) Practices that are beneficial to the environment.
4. The phrase “sustainable development” in the text refers to:
A) Development that uses up all available resources quickly.
B) Development that meets present needs without compromising the ability of future generations to meet their own needs.
C) Development that focuses only on immediate economic gains.
D) Development that disregards environmental concerns.
Answer: B) Development that meets present needs without compromising the ability of future generations to meet their own needs.
5. When the passage mentions “quality of life,” it implies:
A) A decrease in living standards over time.
B) An improvement in the overall conditions under which people live and work.
C) The absence of any efforts to improve living conditions.
D) The focus on increasing industrial activities regardless of their impact.
Answer: B) An improvement in the overall conditions under which people live and work.
Question 2
Reading Passages
In today’s fast-paced world, staying informed about current events is more important than ever. One of the best ways to keep up with the news is to read newspapers. However, not all newspapers are created equal. Here is an overview of some of the most popular newspapers in the world.
1. The New York Times (USA): Established in 1851, The New York Times is one of the most prestigious and influential newspapers in the world. It covers a wide range of topics, including national and international news, politics, business, science, technology, and culture.
2. The Guardian (UK): The Guardian is a British newspaper that has been in circulation since 1821. It is known for its liberal bias and its commitment to investigative journalism. The Guardian covers a variety of issues, including politics, the environment, and social justice.
3. Le Monde (France): Le Monde is a French newspaper that was founded in 1944. It is one of the most widely read newspapers in France and is known for its in-depth reporting and analysis of global events.
4. The Times (UK): The Times is another British newspaper that has been in circulation since 1785. It is a conservative newspaper that focuses on politics, business, and finance.
5. El País (Spain): El País is a Spanish newspaper that was founded in 1976. It is one of the most popular newspapers in Spain and is known for its comprehensive coverage of national and international news.
Vocabulary Understanding
Choose the best word or phrase to complete each sentence.
Write your answers in the spaces provided.
1. The ____________ of The New York Times is that it is one of the most prestigious and influential newspapers in the world.
a. reputation
b. history
c. popularity
d. bias
2. The Guardian is known for its ____________ bias and its commitment to investigative journalism.
a. liberal
b. conservative
c. moderate
d. biased
3. Le Monde is one of the most widely read newspapers in France and is known for its ____________ reporting and analysis.
a. shallow
b. superficial
c. in-depth
d. brief
4. The Times is a conservative newspaper that focuses on ____________ issues.
a. social
b. economic
c. political
d. cultural
5. El País is one of the most popular newspapers in Spain and is known for its comprehensive ____________ of national and international news.
a. reporting
b. analysis
c. coverage
d. editorial
Answers:
1. a. reputation
2. a. liberal
3. c. in-depth
4. c. political
5. c. coverage
Part VI. Reading Comprehension: Long Passage (multiple choice, 10 points)
Question 1
Reading Passage One
In recent years, with the rapid development of the internet and mobile technology, online learning has become increasingly popular among students. Online courses, such as those offered by MOOCs (Massive Open Online Courses), provide students with convenient access to high-quality educational resources from around the world. However, despite the benefits of online learning, there are also some challenges and considerations that need to be addressed.
1. The passage is mainly about:
A. The advantages and disadvantages of online learning
B. The impact of online learning on traditional education
C. The history of MOOCs and their role in education
D. The challenges faced by students in online learning
2. According to the passage, what is one of the main benefits of online learning?
A. It allows students to study at their own pace
B. It provides access to a wider range of educational resources
C. It increases the interaction between students and teachers
D. It reduces the cost of education
3. The passage mentions that online learning has become increasingly popular due to:
A. The advancements in internet technology
B. The decline of traditional education systems
C. The desire for flexible learning schedules
D. All of the above
4. What is one of the challenges mentioned in the passage that online learners may face?
A. Limited access to technological devices
B. Difficulty in maintaining self-discipline
C. Lack of face-to-face interaction with teachers
D. All of the above
5. The passage suggests that in order to succeed in online learning, students should:
A. Attend online classes regularly
B. Engage in active discussions with peers
C. Set clear goals and deadlines for their studies
D. All of the above
Answers:
1. A
2. B
3. D
4. D
5. D
Question 2
Reading Passage Two
The rise of the Internet has revolutionized the way we communicate and access information. One of the most significant impacts has been the transformation of education, with online learning becoming increasingly popular. This passage explores the benefits and challenges of online learning.
The Benefits of Online Learning
1. Flexibility: Online learning offers students the flexibility to study at their own pace and on their own schedule. This is particularly beneficial for working professionals and those with other commitments.
2. Access to a Wide Range of Resources: Online courses often provide access to a wealth of resources, including textbooks, videos, and interactive materials that can enhance the learning experience.
3. Diverse Learning Opportunities: Online learning platforms offer a wide variety of courses, ranging from traditional academic subjects to specialized and niche areas of study.
4. Cost-Effective: Online courses can be more affordable than traditional classroom-based programs, especially for those who live far from educational institutions.
The Challenges of Online Learning
1. Self-Discipline: Online learning requires a high level of self-discipline and motivation, as students must manage their time and stay focused without the structure of a traditional classroom.
2. Limited Interaction: Online courses often lack the face-to-face interaction that is common in traditional classrooms, which can impact the learning experience and social development of students.
3. Technical Issues: Online learning relies heavily on technology, which can lead to technical issues that disrupt the learning process.
4. Quality Assurance: With the proliferation of online courses, ensuring the quality and integrity of these courses can be a challenge.
Questions:
1. What is one of the main advantages of online learning mentioned in the passage?
A. It is more expensive than traditional education.
B. It requires students to be self-disciplined.
C. It provides flexibility in studying.
D. It lacks face-to-face interaction.
2. According to the passage, what can online learning platforms offer that traditional classrooms might not?
A. Limited access to textbooks.
B. Fewer specialized courses.
C. More interactive learning materials.
D. No video resources.
3. Which of the following is a challenge that online learning may present?
A. Students can easily attend classes at a local university.
B. There are no technical issues with online learning.
C. It is difficult to ensure the quality of online courses.
D. Online learning is always more affordable than traditional education.
4. The passage suggests that online learning can be beneficial for:
A. Students who prefer face-to-face interaction.
B. Individuals with other commitments.
C. Those who want to avoid textbooks.
D. People who have no access to technology.
5. What is one potential drawback of online learning that the passage discusses?
A. The ability to study at any time.
B. The use of a wide range of resources.
C. The possibility of technical disruptions.
D. The convenience of studying from home.
Answers:
1. C
2. C
3. C
4. B
5. C
Part VII. Reading Comprehension: Careful Reading (multiple choice, 20 points)
Question 1
Reading Passages
In the following passage, there are some blanks. For each blank there are four choices marked A, B, C, and D. You should choose the one that best fits into the passage.
The digital revolution is changing the way we live, work, and communicate. One of the most significant changes is the rise of artificial intelligence (AI). AI refers to the development of computer systems that can perform tasks that typically require human intelligence, such as visual perception, speech recognition, and decision-making.
The potential of AI is enormous. It has the potential to transform industries, improve efficiency, and make our lives more convenient. However, with great power comes great responsibility. The ethical implications of AI are complex and multifaceted.
1. The passage is mainly about
A. the benefits of the digital revolution
B. the rise of artificial intelligence
C. the challenges of the digital revolution
D. the ethical implications of AI
2. What is the main concern regarding AI mentioned in the passage?
A. Its potential to disrupt traditional industries
B. Its potential to replace human jobs
C. Its potential to be used for unethical purposes
D. Its potential to cause social inequalities
3. The author suggests that AI has the potential to

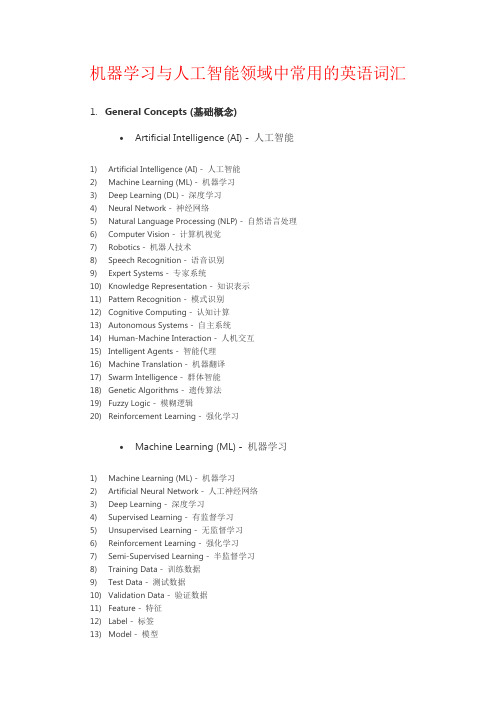

Common English Vocabulary in Machine Learning and Artificial Intelligence