Evolutionary function approximation for reinforcement learning

Self-adaptive differential evolution algorithm for numerical optimization

n

Abstract—In this paper, we propose an extension of Self-adaptive Differential Evolution algorithm (SaDE) to solve optimization problems with constraints. In comparison with the original SaDE algorithm, the replacement criterion was modified for handling constraints. The performance of the proposed method is reported on the set of 24 benchmark problems provided by CEC2006 special session on constrained real parameter optimization.

2006 IEEE Congress on Evolutionary Computation Sheraton Vancouver Wall Centre Hotel, Vancouver, BC, Canada July 16-21, 2006

Self-adaptive Differential Evolution Algorithm for Constrained Real-Parameter Optimization

“DE/rand/1”: Vi ,G = Xr ,G + F ⋅ Xr ,G − Xr G

1 2 3,

(

“DE/best/1”: Vi ,G = Xbest ,G + F ⋅ Xr ,G − X r G 1 2,

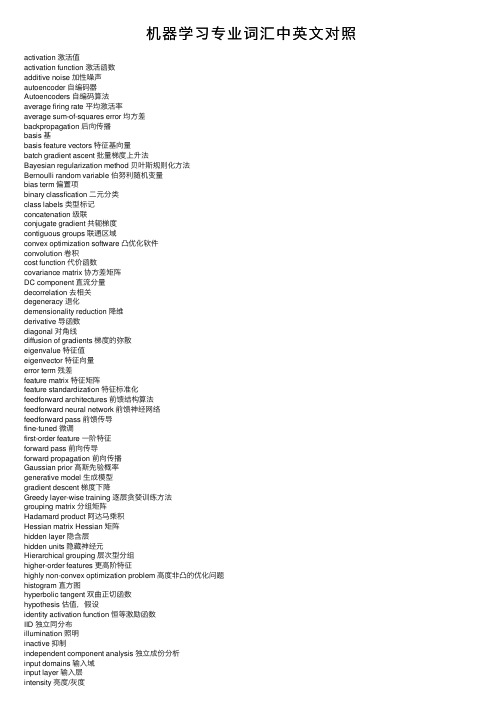

机器学习专业词汇中英文对照

机器学习专业词汇中英⽂对照activation 激活值activation function 激活函数additive noise 加性噪声autoencoder ⾃编码器Autoencoders ⾃编码算法average firing rate 平均激活率average sum-of-squares error 均⽅差backpropagation 后向传播basis 基basis feature vectors 特征基向量batch gradient ascent 批量梯度上升法Bayesian regularization method 贝叶斯规则化⽅法Bernoulli random variable 伯努利随机变量bias term 偏置项binary classfication ⼆元分类class labels 类型标记concatenation 级联conjugate gradient 共轭梯度contiguous groups 联通区域convex optimization software 凸优化软件convolution 卷积cost function 代价函数covariance matrix 协⽅差矩阵DC component 直流分量decorrelation 去相关degeneracy 退化demensionality reduction 降维derivative 导函数diagonal 对⾓线diffusion of gradients 梯度的弥散eigenvalue 特征值eigenvector 特征向量error term 残差feature matrix 特征矩阵feature standardization 特征标准化feedforward architectures 前馈结构算法feedforward neural network 前馈神经⽹络feedforward pass 前馈传导fine-tuned 微调first-order feature ⼀阶特征forward pass 前向传导forward propagation 前向传播Gaussian prior ⾼斯先验概率generative model ⽣成模型gradient descent 梯度下降Greedy layer-wise training 逐层贪婪训练⽅法grouping matrix 分组矩阵Hadamard product 阿达马乘积Hessian matrix Hessian 矩阵hidden layer 隐含层hidden units 隐藏神经元Hierarchical grouping 层次型分组higher-order features 更⾼阶特征highly non-convex optimization problem ⾼度⾮凸的优化问题histogram 直⽅图hyperbolic tangent 双曲正切函数hypothesis 估值,假设identity activation function 恒等激励函数IID 独⽴同分布illumination 照明inactive 抑制independent component analysis 独⽴成份分析input domains 输⼊域input layer 输⼊层intensity 亮度/灰度intercept term 截距KL divergence 相对熵KL divergence KL分散度k-Means K-均值learning rate 学习速率least squares 最⼩⼆乘法linear correspondence 线性响应linear superposition 线性叠加line-search algorithm 线搜索算法local mean subtraction 局部均值消减local optima 局部最优解logistic regression 逻辑回归loss function 损失函数low-pass filtering 低通滤波magnitude 幅值MAP 极⼤后验估计maximum likelihood estimation 极⼤似然估计mean 平均值MFCC Mel 倒频系数multi-class classification 多元分类neural networks 神经⽹络neuron 神经元Newton’s method ⽜顿法non-convex function ⾮凸函数non-linear feature ⾮线性特征norm 范式norm bounded 有界范数norm constrained 范数约束normalization 归⼀化numerical roundoff errors 数值舍⼊误差numerically checking 数值检验numerically reliable 数值计算上稳定object detection 物体检测objective function ⽬标函数off-by-one error 缺位错误orthogonalization 正交化output layer 输出层overall cost function 总体代价函数over-complete basis 超完备基over-fitting 过拟合parts of objects ⽬标的部件part-whole decompostion 部分-整体分解PCA 主元分析penalty term 惩罚因⼦per-example mean subtraction 逐样本均值消减pooling 池化pretrain 预训练principal components analysis 主成份分析quadratic constraints ⼆次约束RBMs 受限Boltzman机reconstruction based models 基于重构的模型reconstruction cost 重建代价reconstruction term 重构项redundant 冗余reflection matrix 反射矩阵regularization 正则化regularization term 正则化项rescaling 缩放robust 鲁棒性run ⾏程second-order feature ⼆阶特征sigmoid activation function S型激励函数significant digits 有效数字singular value 奇异值singular vector 奇异向量smoothed L1 penalty 平滑的L1范数惩罚Smoothed topographic L1 sparsity penalty 平滑地形L1稀疏惩罚函数smoothing 平滑Softmax Regresson Softmax回归sorted in decreasing order 降序排列source features 源特征sparse autoencoder 消减归⼀化Sparsity 稀疏性sparsity parameter 稀疏性参数sparsity penalty 稀疏惩罚square function 平⽅函数squared-error ⽅差stationary 平稳性(不变性)stationary stochastic process 平稳随机过程step-size 步长值supervised learning 监督学习symmetric positive semi-definite matrix 对称半正定矩阵symmetry breaking 对称失效tanh function 双曲正切函数the average activation 平均活跃度the derivative checking method 梯度验证⽅法the empirical distribution 经验分布函数the energy function 能量函数the Lagrange dual 拉格朗⽇对偶函数the log likelihood 对数似然函数the pixel intensity value 像素灰度值the rate of convergence 收敛速度topographic cost term 拓扑代价项topographic ordered 拓扑秩序transformation 变换translation invariant 平移不变性trivial answer 平凡解under-complete basis 不完备基unrolling 组合扩展unsupervised learning ⽆监督学习variance ⽅差vecotrized implementation 向量化实现vectorization ⽮量化visual cortex 视觉⽪层weight decay 权重衰减weighted average 加权平均值whitening ⽩化zero-mean 均值为零Letter AAccumulated error backpropagation 累积误差逆传播Activation Function 激活函数Adaptive Resonance Theory/ART ⾃适应谐振理论Addictive model 加性学习Adversarial Networks 对抗⽹络Affine Layer 仿射层Affinity matrix 亲和矩阵Agent 代理 / 智能体Algorithm 算法Alpha-beta pruning α-β剪枝Anomaly detection 异常检测Approximation 近似Area Under ROC Curve/AUC Roc 曲线下⾯积Artificial General Intelligence/AGI 通⽤⼈⼯智能Artificial Intelligence/AI ⼈⼯智能Association analysis 关联分析Attention mechanism 注意⼒机制Attribute conditional independence assumption 属性条件独⽴性假设Attribute space 属性空间Attribute value 属性值Autoencoder ⾃编码器Automatic speech recognition ⾃动语⾳识别Automatic summarization ⾃动摘要Average gradient 平均梯度Average-Pooling 平均池化Letter BBackpropagation Through Time 通过时间的反向传播Backpropagation/BP 反向传播Base learner 基学习器Base learning algorithm 基学习算法Batch Normalization/BN 批量归⼀化Bayes decision rule 贝叶斯判定准则Bayes Model Averaging/BMA 贝叶斯模型平均Bayes optimal classifier 贝叶斯最优分类器Bayesian decision theory 贝叶斯决策论Bayesian network 贝叶斯⽹络Between-class scatter matrix 类间散度矩阵Bias 偏置 / 偏差Bias-variance decomposition 偏差-⽅差分解Bias-Variance Dilemma 偏差 – ⽅差困境Bi-directional Long-Short Term Memory/Bi-LSTM 双向长短期记忆Binary classification ⼆分类Binomial test ⼆项检验Bi-partition ⼆分法Boltzmann machine 玻尔兹曼机Bootstrap sampling ⾃助采样法/可重复采样/有放回采样Bootstrapping ⾃助法Break-Event Point/BEP 平衡点Letter CCalibration 校准Cascade-Correlation 级联相关Categorical attribute 离散属性Class-conditional probability 类条件概率Classification and regression tree/CART 分类与回归树Classifier 分类器Class-imbalance 类别不平衡Closed -form 闭式Cluster 簇/类/集群Cluster analysis 聚类分析Clustering 聚类Clustering ensemble 聚类集成Co-adapting 共适应Coding matrix 编码矩阵COLT 国际学习理论会议Committee-based learning 基于委员会的学习Competitive learning 竞争型学习Component learner 组件学习器Comprehensibility 可解释性Computation Cost 计算成本Computational Linguistics 计算语⾔学Computer vision 计算机视觉Concept drift 概念漂移Concept Learning System /CLS 概念学习系统Conditional entropy 条件熵Conditional mutual information 条件互信息Conditional Probability Table/CPT 条件概率表Conditional random field/CRF 条件随机场Conditional risk 条件风险Confidence 置信度Confusion matrix 混淆矩阵Connection weight 连接权Connectionism 连结主义Consistency ⼀致性/相合性Contingency table 列联表Continuous attribute 连续属性Convergence 收敛Conversational agent 会话智能体Convex quadratic programming 凸⼆次规划Convexity 凸性Convolutional neural network/CNN 卷积神经⽹络Co-occurrence 同现Correlation coefficient 相关系数Cosine similarity 余弦相似度Cost curve 成本曲线Cost Function 成本函数Cost matrix 成本矩阵Cost-sensitive 成本敏感Cross entropy 交叉熵Cross validation 交叉验证Crowdsourcing 众包Curse of dimensionality 维数灾难Cut point 截断点Cutting plane algorithm 割平⾯法Letter DData mining 数据挖掘Data set 数据集Decision Boundary 决策边界Decision stump 决策树桩Decision tree 决策树/判定树Deduction 演绎Deep Belief Network 深度信念⽹络Deep Convolutional Generative Adversarial Network/DCGAN 深度卷积⽣成对抗⽹络Deep learning 深度学习Deep neural network/DNN 深度神经⽹络Deep Q-Learning 深度 Q 学习Deep Q-Network 深度 Q ⽹络Density estimation 密度估计Density-based clustering 密度聚类Differentiable neural computer 可微分神经计算机Dimensionality reduction algorithm 降维算法Directed edge 有向边Disagreement measure 不合度量Discriminative model 判别模型Discriminator 判别器Distance measure 距离度量Distance metric learning 距离度量学习Distribution 分布Divergence 散度Diversity measure 多样性度量/差异性度量Domain adaption 领域⾃适应Downsampling 下采样D-separation (Directed separation)有向分离Dual problem 对偶问题Dummy node 哑结点Dynamic Fusion 动态融合Dynamic programming 动态规划Letter EEigenvalue decomposition 特征值分解Embedding 嵌⼊Emotional analysis 情绪分析Empirical conditional entropy 经验条件熵Empirical entropy 经验熵Empirical error 经验误差Empirical risk 经验风险End-to-End 端到端Energy-based model 基于能量的模型Ensemble learning 集成学习Ensemble pruning 集成修剪Error Correcting Output Codes/ECOC 纠错输出码Error rate 错误率Error-ambiguity decomposition 误差-分歧分解Euclidean distance 欧⽒距离Evolutionary computation 演化计算Expectation-Maximization 期望最⼤化Expected loss 期望损失Exploding Gradient Problem 梯度爆炸问题Exponential loss function 指数损失函数Extreme Learning Machine/ELM 超限学习机Letter FFactorization 因⼦分解False negative 假负类False positive 假正类False Positive Rate/FPR 假正例率Feature engineering 特征⼯程Feature selection 特征选择Feature vector 特征向量Featured Learning 特征学习Feedforward Neural Networks/FNN 前馈神经⽹络Fine-tuning 微调Flipping output 翻转法Fluctuation 震荡Forward stagewise algorithm 前向分步算法Frequentist 频率主义学派Full-rank matrix 满秩矩阵Functional neuron 功能神经元Letter GGain ratio 增益率Game theory 博弈论Gaussian kernel function ⾼斯核函数Gaussian Mixture Model ⾼斯混合模型General Problem Solving 通⽤问题求解Generalization 泛化Generalization error 泛化误差Generalization error bound 泛化误差上界Generalized Lagrange function ⼴义拉格朗⽇函数Generalized linear model ⼴义线性模型Generalized Rayleigh quotient ⼴义瑞利商Generative Adversarial Networks/GAN ⽣成对抗⽹络Generative Model ⽣成模型Generator ⽣成器Genetic Algorithm/GA 遗传算法Gibbs sampling 吉布斯采样Gini index 基尼指数Global minimum 全局最⼩Global Optimization 全局优化Gradient boosting 梯度提升Gradient Descent 梯度下降Graph theory 图论Ground-truth 真相/真实Letter HHard margin 硬间隔Hard voting 硬投票Harmonic mean 调和平均Hesse matrix 海塞矩阵Hidden dynamic model 隐动态模型Hidden layer 隐藏层Hidden Markov Model/HMM 隐马尔可夫模型Hierarchical clustering 层次聚类Hilbert space 希尔伯特空间Hinge loss function 合页损失函数Hold-out 留出法Homogeneous 同质Hybrid computing 混合计算Hyperparameter 超参数Hypothesis 假设Hypothesis test 假设验证Letter IICML 国际机器学习会议Improved iterative scaling/IIS 改进的迭代尺度法Incremental learning 增量学习Independent and identically distributed/i.i.d. 独⽴同分布Independent Component Analysis/ICA 独⽴成分分析Indicator function 指⽰函数Individual learner 个体学习器Induction 归纳Inductive bias 归纳偏好Inductive learning 归纳学习Inductive Logic Programming/ILP 归纳逻辑程序设计Information entropy 信息熵Information gain 信息增益Input layer 输⼊层Insensitive loss 不敏感损失Inter-cluster similarity 簇间相似度International Conference for Machine Learning/ICML 国际机器学习⼤会Intra-cluster similarity 簇内相似度Intrinsic value 固有值Isometric Mapping/Isomap 等度量映射Isotonic regression 等分回归Iterative Dichotomiser 迭代⼆分器Letter KKernel method 核⽅法Kernel trick 核技巧Kernelized Linear Discriminant Analysis/KLDA 核线性判别分析K-fold cross validation k 折交叉验证/k 倍交叉验证K-Means Clustering K – 均值聚类K-Nearest Neighbours Algorithm/KNN K近邻算法Knowledge base 知识库Knowledge Representation 知识表征Letter LLabel space 标记空间Lagrange duality 拉格朗⽇对偶性Lagrange multiplier 拉格朗⽇乘⼦Laplace smoothing 拉普拉斯平滑Laplacian correction 拉普拉斯修正Latent Dirichlet Allocation 隐狄利克雷分布Latent semantic analysis 潜在语义分析Latent variable 隐变量Lazy learning 懒惰学习Learner 学习器Learning by analogy 类⽐学习Learning rate 学习率Learning Vector Quantization/LVQ 学习向量量化Least squares regression tree 最⼩⼆乘回归树Leave-One-Out/LOO 留⼀法linear chain conditional random field 线性链条件随机场Linear Discriminant Analysis/LDA 线性判别分析Linear model 线性模型Linear Regression 线性回归Link function 联系函数Local Markov property 局部马尔可夫性Local minimum 局部最⼩Log likelihood 对数似然Log odds/logit 对数⼏率Logistic Regression Logistic 回归Log-likelihood 对数似然Log-linear regression 对数线性回归Long-Short Term Memory/LSTM 长短期记忆Loss function 损失函数Letter MMachine translation/MT 机器翻译Macron-P 宏查准率Macron-R 宏查全率Majority voting 绝对多数投票法Manifold assumption 流形假设Manifold learning 流形学习Margin theory 间隔理论Marginal distribution 边际分布Marginal independence 边际独⽴性Marginalization 边际化Markov Chain Monte Carlo/MCMC 马尔可夫链蒙特卡罗⽅法Markov Random Field 马尔可夫随机场Maximal clique 最⼤团Maximum Likelihood Estimation/MLE 极⼤似然估计/极⼤似然法Maximum margin 最⼤间隔Maximum weighted spanning tree 最⼤带权⽣成树Max-Pooling 最⼤池化Mean squared error 均⽅误差Meta-learner 元学习器Metric learning 度量学习Micro-P 微查准率Micro-R 微查全率Minimal Description Length/MDL 最⼩描述长度Minimax game 极⼩极⼤博弈Misclassification cost 误分类成本Mixture of experts 混合专家Momentum 动量Moral graph 道德图/端正图Multi-class classification 多分类Multi-document summarization 多⽂档摘要Multi-layer feedforward neural networks 多层前馈神经⽹络Multilayer Perceptron/MLP 多层感知器Multimodal learning 多模态学习Multiple Dimensional Scaling 多维缩放Multiple linear regression 多元线性回归Multi-response Linear Regression /MLR 多响应线性回归Mutual information 互信息Letter NNaive bayes 朴素贝叶斯Naive Bayes Classifier 朴素贝叶斯分类器Named entity recognition 命名实体识别Nash equilibrium 纳什均衡Natural language generation/NLG ⾃然语⾔⽣成Natural language processing ⾃然语⾔处理Negative class 负类Negative correlation 负相关法Negative Log Likelihood 负对数似然Neighbourhood Component Analysis/NCA 近邻成分分析Neural Machine Translation 神经机器翻译Neural Turing Machine 神经图灵机Newton method ⽜顿法NIPS 国际神经信息处理系统会议No Free Lunch Theorem/NFL 没有免费的午餐定理Noise-contrastive estimation 噪⾳对⽐估计Nominal attribute 列名属性Non-convex optimization ⾮凸优化Nonlinear model ⾮线性模型Non-metric distance ⾮度量距离Non-negative matrix factorization ⾮负矩阵分解Non-ordinal attribute ⽆序属性Non-Saturating Game ⾮饱和博弈Norm 范数Normalization 归⼀化Nuclear norm 核范数Numerical attribute 数值属性Letter OObjective function ⽬标函数Oblique decision tree 斜决策树Occam’s razor 奥卡姆剃⼑Odds ⼏率Off-Policy 离策略One shot learning ⼀次性学习One-Dependent Estimator/ODE 独依赖估计On-Policy 在策略Ordinal attribute 有序属性Out-of-bag estimate 包外估计Output layer 输出层Output smearing 输出调制法Overfitting 过拟合/过配Oversampling 过采样Letter PPaired t-test 成对 t 检验Pairwise 成对型Pairwise Markov property 成对马尔可夫性Parameter 参数Parameter estimation 参数估计Parameter tuning 调参Parse tree 解析树Particle Swarm Optimization/PSO 粒⼦群优化算法Part-of-speech tagging 词性标注Perceptron 感知机Performance measure 性能度量Plug and Play Generative Network 即插即⽤⽣成⽹络Plurality voting 相对多数投票法Polarity detection 极性检测Polynomial kernel function 多项式核函数Pooling 池化Positive class 正类Positive definite matrix 正定矩阵Post-hoc test 后续检验Post-pruning 后剪枝potential function 势函数Precision 查准率/准确率Prepruning 预剪枝Principal component analysis/PCA 主成分分析Principle of multiple explanations 多释原则Prior 先验Probability Graphical Model 概率图模型Proximal Gradient Descent/PGD 近端梯度下降Pruning 剪枝Pseudo-label 伪标记Letter QQuantized Neural Network 量⼦化神经⽹络Quantum computer 量⼦计算机Quantum Computing 量⼦计算Quasi Newton method 拟⽜顿法Letter RRadial Basis Function/RBF 径向基函数Random Forest Algorithm 随机森林算法Random walk 随机漫步Recall 查全率/召回率Receiver Operating Characteristic/ROC 受试者⼯作特征Rectified Linear Unit/ReLU 线性修正单元Recurrent Neural Network 循环神经⽹络Recursive neural network 递归神经⽹络Reference model 参考模型Regression 回归Regularization 正则化Reinforcement learning/RL 强化学习Representation learning 表征学习Representer theorem 表⽰定理reproducing kernel Hilbert space/RKHS 再⽣核希尔伯特空间Re-sampling 重采样法Rescaling 再缩放Residual Mapping 残差映射Residual Network 残差⽹络Restricted Boltzmann Machine/RBM 受限玻尔兹曼机Restricted Isometry Property/RIP 限定等距性Re-weighting 重赋权法Robustness 稳健性/鲁棒性Root node 根结点Rule Engine 规则引擎Rule learning 规则学习Letter SSaddle point 鞍点Sample space 样本空间Sampling 采样Score function 评分函数Self-Driving ⾃动驾驶Self-Organizing Map/SOM ⾃组织映射Semi-naive Bayes classifiers 半朴素贝叶斯分类器Semi-Supervised Learning 半监督学习semi-Supervised Support Vector Machine 半监督⽀持向量机Sentiment analysis 情感分析Separating hyperplane 分离超平⾯Sigmoid function Sigmoid 函数Similarity measure 相似度度量Simulated annealing 模拟退⽕Simultaneous localization and mapping 同步定位与地图构建Singular Value Decomposition 奇异值分解Slack variables 松弛变量Smoothing 平滑Soft margin 软间隔Soft margin maximization 软间隔最⼤化Soft voting 软投票Sparse representation 稀疏表征Sparsity 稀疏性Specialization 特化Spectral Clustering 谱聚类Speech Recognition 语⾳识别Splitting variable 切分变量Squashing function 挤压函数Stability-plasticity dilemma 可塑性-稳定性困境Statistical learning 统计学习Status feature function 状态特征函Stochastic gradient descent 随机梯度下降Stratified sampling 分层采样Structural risk 结构风险Structural risk minimization/SRM 结构风险最⼩化Subspace ⼦空间Supervised learning 监督学习/有导师学习support vector expansion ⽀持向量展式Support Vector Machine/SVM ⽀持向量机Surrogat loss 替代损失Surrogate function 替代函数Symbolic learning 符号学习Symbolism 符号主义Synset 同义词集Letter TT-Distribution Stochastic Neighbour Embedding/t-SNE T – 分布随机近邻嵌⼊Tensor 张量Tensor Processing Units/TPU 张量处理单元The least square method 最⼩⼆乘法Threshold 阈值Threshold logic unit 阈值逻辑单元Threshold-moving 阈值移动Time Step 时间步骤Tokenization 标记化Training error 训练误差Training instance 训练⽰例/训练例Transductive learning 直推学习Transfer learning 迁移学习Treebank 树库Tria-by-error 试错法True negative 真负类True positive 真正类True Positive Rate/TPR 真正例率Turing Machine 图灵机Twice-learning ⼆次学习Letter UUnderfitting ⽋拟合/⽋配Undersampling ⽋采样Understandability 可理解性Unequal cost ⾮均等代价Unit-step function 单位阶跃函数Univariate decision tree 单变量决策树Unsupervised learning ⽆监督学习/⽆导师学习Unsupervised layer-wise training ⽆监督逐层训练Upsampling 上采样Letter VVanishing Gradient Problem 梯度消失问题Variational inference 变分推断VC Theory VC维理论Version space 版本空间Viterbi algorithm 维特⽐算法Von Neumann architecture 冯 · 诺伊曼架构Letter WWasserstein GAN/WGAN Wasserstein⽣成对抗⽹络Weak learner 弱学习器Weight 权重Weight sharing 权共享Weighted voting 加权投票法Within-class scatter matrix 类内散度矩阵Word embedding 词嵌⼊Word sense disambiguation 词义消歧Letter ZZero-data learning 零数据学习Zero-shot learning 零次学习Aapproximations近似值arbitrary随意的affine仿射的arbitrary任意的amino acid氨基酸amenable经得起检验的axiom公理,原则abstract提取architecture架构,体系结构;建造业absolute绝对的arsenal军⽕库assignment分配algebra线性代数asymptotically⽆症状的appropriate恰当的Bbias偏差brevity简短,简洁;短暂broader⼴泛briefly简短的batch批量Cconvergence 收敛,集中到⼀点convex凸的contours轮廓constraint约束constant常理commercial商务的complementarity补充coordinate ascent同等级上升clipping剪下物;剪报;修剪component分量;部件continuous连续的covariance协⽅差canonical正规的,正则的concave⾮凸的corresponds相符合;相当;通信corollary推论concrete具体的事物,实在的东西cross validation交叉验证correlation相互关系convention约定cluster⼀簇centroids 质⼼,形⼼converge收敛computationally计算(机)的calculus计算Dderive获得,取得dual⼆元的duality⼆元性;⼆象性;对偶性derivation求导;得到;起源denote预⽰,表⽰,是…的标志;意味着,[逻]指称divergence 散度;发散性dimension尺度,规格;维数dot⼩圆点distortion变形density概率密度函数discrete离散的discriminative有识别能⼒的diagonal对⾓dispersion分散,散开determinant决定因素disjoint不相交的Eencounter遇到ellipses椭圆equality等式extra额外的empirical经验;观察ennmerate例举,计数exceed超过,越出expectation期望efficient⽣效的endow赋予explicitly清楚的exponential family指数家族equivalently等价的Ffeasible可⾏的forary初次尝试finite有限的,限定的forgo摒弃,放弃fliter过滤frequentist最常发⽣的forward search前向式搜索formalize使定形Ggeneralized归纳的generalization概括,归纳;普遍化;判断(根据不⾜)guarantee保证;抵押品generate形成,产⽣geometric margins⼏何边界gap裂⼝generative⽣产的;有⽣产⼒的Hheuristic启发式的;启发法;启发程序hone怀恋;磨hyperplane超平⾯Linitial最初的implement执⾏intuitive凭直觉获知的incremental增加的intercept截距intuitious直觉instantiation例⼦indicator指⽰物,指⽰器interative重复的,迭代的integral积分identical相等的;完全相同的indicate表⽰,指出invariance不变性,恒定性impose把…强加于intermediate中间的interpretation解释,翻译Jjoint distribution联合概率Llieu替代logarithmic对数的,⽤对数表⽰的latent潜在的Leave-one-out cross validation留⼀法交叉验证Mmagnitude巨⼤mapping绘图,制图;映射matrix矩阵mutual相互的,共同的monotonically单调的minor较⼩的,次要的multinomial多项的multi-class classification⼆分类问题Nnasty讨厌的notation标志,注释naïve朴素的Oobtain得到oscillate摆动optimization problem最优化问题objective function⽬标函数optimal最理想的orthogonal(⽮量,矩阵等)正交的orientation⽅向ordinary普通的occasionally偶然的Ppartial derivative偏导数property性质proportional成⽐例的primal原始的,最初的permit允许pseudocode伪代码permissible可允许的polynomial多项式preliminary预备precision精度perturbation 不安,扰乱poist假定,设想positive semi-definite半正定的parentheses圆括号posterior probability后验概率plementarity补充pictorially图像的parameterize确定…的参数poisson distribution柏松分布pertinent相关的Qquadratic⼆次的quantity量,数量;分量query疑问的Rregularization使系统化;调整reoptimize重新优化restrict限制;限定;约束reminiscent回忆往事的;提醒的;使⼈联想…的(of)remark注意random variable随机变量respect考虑respectively各⾃的;分别的redundant过多的;冗余的Ssusceptible敏感的stochastic可能的;随机的symmetric对称的sophisticated复杂的spurious假的;伪造的subtract减去;减法器simultaneously同时发⽣地;同步地suffice满⾜scarce稀有的,难得的split分解,分离subset⼦集statistic统计量successive iteratious连续的迭代scale标度sort of有⼏分的squares平⽅Ttrajectory轨迹temporarily暂时的terminology专⽤名词tolerance容忍;公差thumb翻阅threshold阈,临界theorem定理tangent正弦Uunit-length vector单位向量Vvalid有效的,正确的variance⽅差variable变量;变元vocabulary词汇valued经估价的;宝贵的Wwrapper包装分类:。

AI术语

人工智能专业重要词汇表1、A开头的词汇:Artificial General Intelligence/AGI通用人工智能Artificial Intelligence/AI人工智能Association analysis关联分析Attention mechanism注意力机制Attribute conditional independence assumption属性条件独立性假设Attribute space属性空间Attribute value属性值Autoencoder自编码器Automatic speech recognition自动语音识别Automatic summarization自动摘要Average gradient平均梯度Average-Pooling平均池化Accumulated error backpropagation累积误差逆传播Activation Function激活函数Adaptive Resonance Theory/ART自适应谐振理论Addictive model加性学习Adversarial Networks对抗网络Affine Layer仿射层Affinity matrix亲和矩阵Agent代理/ 智能体Algorithm算法Alpha-beta pruningα-β剪枝Anomaly detection异常检测Approximation近似Area Under ROC Curve/AUC R oc 曲线下面积2、B开头的词汇Backpropagation Through Time通过时间的反向传播Backpropagation/BP反向传播Base learner基学习器Base learning algorithm基学习算法Batch Normalization/BN批量归一化Bayes decision rule贝叶斯判定准则Bayes Model Averaging/BMA贝叶斯模型平均Bayes optimal classifier贝叶斯最优分类器Bayesian decision theory贝叶斯决策论Bayesian network贝叶斯网络Between-class scatter matrix类间散度矩阵Bias偏置/ 偏差Bias-variance decomposition偏差-方差分解Bias-Variance Dilemma偏差–方差困境Bi-directional Long-Short Term Memory/Bi-LSTM双向长短期记忆Binary classification二分类Binomial test二项检验Bi-partition二分法Boltzmann machine玻尔兹曼机Bootstrap sampling自助采样法/可重复采样/有放回采样Bootstrapping自助法Break-Event Point/BEP平衡点3、C开头的词汇Calibration校准Cascade-Correlation级联相关Categorical attribute离散属性Class-conditional probability类条件概率Classification and regression tree/CART分类与回归树Classifier分类器Class-imbalance类别不平衡Closed -form闭式Cluster簇/类/集群Cluster analysis聚类分析Clustering聚类Clustering ensemble聚类集成Co-adapting共适应Coding matrix编码矩阵COLT国际学习理论会议Committee-based learning基于委员会的学习Competitive learning竞争型学习Component learner组件学习器Comprehensibility可解释性Computation Cost计算成本Computational Linguistics计算语言学Computer vision计算机视觉Concept drift概念漂移Concept Learning System /CLS概念学习系统Conditional entropy条件熵Conditional mutual information条件互信息Conditional Probability Table/CPT条件概率表Conditional random field/CRF条件随机场Conditional risk条件风险Confidence置信度Confusion matrix混淆矩阵Connection weight连接权Connectionism连结主义Consistency一致性/相合性Contingency table列联表Continuous attribute连续属性Convergence收敛Conversational agent会话智能体Convex quadratic programming凸二次规划Convexity凸性Convolutional neural network/CNN卷积神经网络Co-occurrence同现Correlation coefficient相关系数Cosine similarity余弦相似度Cost curve成本曲线Cost Function成本函数Cost matrix成本矩阵Cost-sensitive成本敏感Cross entropy交叉熵Cross validation交叉验证Crowdsourcing众包Curse of dimensionality维数灾难Cut point截断点Cutting plane algorithm割平面法4、D开头的词汇Data mining数据挖掘Data set数据集Decision Boundary决策边界Decision stump决策树桩Decision tree决策树/判定树Deduction演绎Deep Belief Network深度信念网络Deep Convolutional Generative Adversarial Network/DCGAN深度卷积生成对抗网络Deep learning深度学习Deep neural network/DNN深度神经网络Deep Q-Learning深度Q 学习Deep Q-Network深度Q 网络Density estimation密度估计Density-based clustering密度聚类Differentiable neural computer可微分神经计算机Dimensionality reduction algorithm降维算法Directed edge有向边Disagreement measure不合度量Discriminative model判别模型Discriminator判别器Distance measure距离度量Distance metric learning距离度量学习Distribution分布Divergence散度Diversity measure多样性度量/差异性度量Domain adaption领域自适应Downsampling下采样D-separation (Directed separation)有向分离Dual problem对偶问题Dummy node哑结点Dynamic Fusion动态融合Dynamic programming动态规划5、E开头的词汇Eigenvalue decomposition特征值分解Embedding嵌入Emotional analysis情绪分析Empirical conditional entropy经验条件熵Empirical entropy经验熵Empirical error经验误差Empirical risk经验风险End-to-End端到端Energy-based model基于能量的模型Ensemble learning集成学习Ensemble pruning集成修剪Error Correcting Output Codes/ECOC纠错输出码Error rate错误率Error-ambiguity decomposition误差-分歧分解Euclidean distance欧氏距离Evolutionary computation演化计算Expectation-Maximization期望最大化Expected loss期望损失Exploding Gradient Problem梯度爆炸问题Exponential loss function指数损失函数Extreme Learning Machine/ELM超限学习机6、F开头的词汇Factorization因子分解False negative假负类False positive假正类False Positive Rate/FPR假正例率Feature engineering特征工程Feature selection特征选择Feature vector特征向量Featured Learning特征学习Feedforward Neural Networks/FNN前馈神经网络Fine-tuning微调Flipping output翻转法Fluctuation震荡Forward stagewise algorithm前向分步算法Frequentist频率主义学派Full-rank matrix满秩矩阵Functional neuron功能神经元7、G开头的词汇Gain ratio增益率Game theory博弈论Gaussian kernel function高斯核函数Gaussian Mixture Model高斯混合模型General Problem Solving通用问题求解Generalization泛化Generalization error泛化误差Generalization error bound泛化误差上界Generalized Lagrange function广义拉格朗日函数Generalized linear model广义线性模型Generalized Rayleigh quotient广义瑞利商Generative Adversarial Networks/GAN生成对抗网络Generative Model生成模型Generator生成器Genetic Algorithm/GA遗传算法Gibbs sampling吉布斯采样Gini index基尼指数Global minimum全局最小Global Optimization全局优化Gradient boosting梯度提升Gradient Descent梯度下降Graph theory图论Ground-truth真相/真实8、H开头的词汇Hard margin硬间隔Hard voting硬投票Harmonic mean调和平均Hesse matrix海塞矩阵Hidden dynamic model隐动态模型Hidden layer隐藏层Hidden Markov Model/HMM隐马尔可夫模型Hierarchical clustering层次聚类Hilbert space希尔伯特空间Hinge loss function合页损失函数Hold-out留出法Homogeneous同质Hybrid computing混合计算Hyperparameter超参数Hypothesis假设Hypothesis test假设验证9、I开头的词汇ICML国际机器学习会议Improved iterative scaling/IIS改进的迭代尺度法Incremental learning增量学习Independent and identically distributed/i.i.d.独立同分布Independent Component Analysis/ICA独立成分分析Indicator function指示函数Individual learner个体学习器Induction归纳Inductive bias归纳偏好Inductive learning归纳学习Inductive Logic Programming/ILP归纳逻辑程序设计Information entropy信息熵Information gain信息增益Input layer输入层Insensitive loss不敏感损失Inter-cluster similarity簇间相似度International Conference for Machine Learning/ICML国际机器学习大会Intra-cluster similarity簇内相似度Intrinsic value固有值Isometric Mapping/Isomap等度量映射Isotonic regression等分回归Iterative Dichotomiser迭代二分器10、K开头的词汇Kernel method核方法Kernel trick核技巧Kernelized Linear Discriminant Analysis/KLDA核线性判别分析K-fold cross validation k 折交叉验证/k 倍交叉验证K-Means Clustering K –均值聚类K-Nearest Neighbours Algorithm/KNN K近邻算法Knowledge base知识库Knowledge Representation知识表征11、L开头的词汇Label space标记空间Lagrange duality拉格朗日对偶性Lagrange multiplier拉格朗日乘子Laplace smoothing拉普拉斯平滑Laplacian correction拉普拉斯修正Latent Dirichlet Allocation隐狄利克雷分布Latent semantic analysis潜在语义分析Latent variable隐变量Lazy learning懒惰学习Learner学习器Learning by analogy类比学习Learning rate学习率Learning Vector Quantization/LVQ学习向量量化Least squares regression tree最小二乘回归树Leave-One-Out/LOO留一法linear chain conditional random field线性链条件随机场Linear Discriminant Analysis/LDA线性判别分析Linear model线性模型Linear Regression线性回归Link function联系函数Local Markov property局部马尔可夫性Local minimum局部最小Log likelihood对数似然Log odds/logit对数几率Logistic Regression Logistic 回归Log-likelihood对数似然Log-linear regression对数线性回归Long-Short Term Memory/LSTM长短期记忆Loss function损失函数12、M开头的词汇Machine translation/MT机器翻译Macron-P宏查准率Macron-R宏查全率Majority voting绝对多数投票法Manifold assumption流形假设Manifold learning流形学习Margin theory间隔理论Marginal distribution边际分布Marginal independence边际独立性Marginalization边际化Markov Chain Monte Carlo/MCMC马尔可夫链蒙特卡罗方法Markov Random Field马尔可夫随机场Maximal clique最大团Maximum Likelihood Estimation/MLE极大似然估计/极大似然法Maximum margin最大间隔Maximum weighted spanning tree最大带权生成树Max-Pooling最大池化Mean squared error均方误差Meta-learner元学习器Metric learning度量学习Micro-P微查准率Micro-R微查全率Minimal Description Length/MDL最小描述长度Minimax game极小极大博弈Misclassification cost误分类成本Mixture of experts混合专家Momentum动量Moral graph道德图/端正图Multi-class classification多分类Multi-document summarization多文档摘要Multi-layer feedforward neural networks多层前馈神经网络Multilayer Perceptron/MLP多层感知器Multimodal learning多模态学习Multiple Dimensional Scaling多维缩放Multiple linear regression多元线性回归Multi-response Linear Regression /MLR多响应线性回归Mutual information互信息13、N开头的词汇Naive bayes朴素贝叶斯Naive Bayes Classifier朴素贝叶斯分类器Named entity recognition命名实体识别Nash equilibrium纳什均衡Natural language generation/NLG自然语言生成Natural language processing自然语言处理Negative class负类Negative correlation负相关法Negative Log Likelihood负对数似然Neighbourhood Component Analysis/NCA近邻成分分析Neural Machine Translation神经机器翻译Neural Turing Machine神经图灵机Newton method牛顿法NIPS国际神经信息处理系统会议No Free Lunch Theorem/NFL没有免费的午餐定理Noise-contrastive estimation噪音对比估计Nominal attribute列名属性Non-convex optimization非凸优化Nonlinear model非线性模型Non-metric distance非度量距离Non-negative matrix factorization非负矩阵分解Non-ordinal attribute无序属性Non-Saturating Game非饱和博弈Norm范数Normalization归一化Nuclear norm核范数Numerical attribute数值属性14、O开头的词汇Objective function目标函数Oblique decision tree斜决策树Occam’s razor奥卡姆剃刀Odds几率Off-Policy离策略One shot learning一次性学习One-Dependent Estimator/ODE独依赖估计On-Policy在策略Ordinal attribute有序属性Out-of-bag estimate包外估计Output layer输出层Output smearing输出调制法Overfitting过拟合/过配Oversampling过采样15、P开头的词汇Paired t-test成对t 检验Pairwise成对型Pairwise Markov property成对马尔可夫性Parameter参数Parameter estimation参数估计Parameter tuning调参Parse tree解析树Particle Swarm Optimization/PSO粒子群优化算法Part-of-speech tagging词性标注Perceptron感知机Performance measure性能度量Plug and Play Generative Network即插即用生成网络Plurality voting相对多数投票法Polarity detection极性检测Polynomial kernel function多项式核函数Pooling池化Positive class正类Positive definite matrix正定矩阵Post-hoc test后续检验Post-pruning后剪枝potential function势函数Precision查准率/准确率Prepruning预剪枝Principal component analysis/PCA主成分分析Principle of multiple explanations多释原则Prior先验Probability Graphical Model概率图模型Proximal Gradient Descent/PGD近端梯度下降Pruning剪枝Pseudo-label伪标记16、Q开头的词汇Quantized Neural Network量子化神经网络Quantum computer量子计算机Quantum Computing量子计算Quasi Newton method拟牛顿法17、R开头的词汇Radial Basis Function/RBF径向基函数Random Forest Algorithm随机森林算法Random walk随机漫步Recall查全率/召回率Receiver Operating Characteristic/ROC受试者工作特征Rectified Linear Unit/ReLU线性修正单元Recurrent Neural Network循环神经网络Recursive neural network递归神经网络Reference model参考模型Regression回归Regularization正则化Reinforcement learning/RL强化学习Representation learning表征学习Representer theorem表示定理reproducing kernel Hilbert space/RKHS再生核希尔伯特空间Re-sampling重采样法Rescaling再缩放Residual Mapping残差映射Residual Network残差网络Restricted Boltzmann Machine/RBM受限玻尔兹曼机Restricted Isometry Property/RIP限定等距性Re-weighting重赋权法Robustness稳健性/鲁棒性Root node根结点Rule Engine规则引擎Rule learning规则学习18、S开头的词汇Saddle point鞍点Sample space样本空间Sampling采样Score function评分函数Self-Driving自动驾驶Self-Organizing Map/SOM自组织映射Semi-naive Bayes classifiers半朴素贝叶斯分类器Semi-Supervised Learning半监督学习semi-Supervised Support Vector Machine半监督支持向量机Sentiment analysis情感分析Separating hyperplane分离超平面Sigmoid function Sigmoid 函数Similarity measure相似度度量Simulated annealing模拟退火Simultaneous localization and mapping同步定位与地图构建Singular Value Decomposition奇异值分解Slack variables松弛变量Smoothing平滑Soft margin软间隔Soft margin maximization软间隔最大化Soft voting软投票Sparse representation稀疏表征Sparsity稀疏性Specialization特化Spectral Clustering谱聚类Speech Recognition语音识别Splitting variable切分变量Squashing function挤压函数Stability-plasticity dilemma可塑性-稳定性困境Statistical learning统计学习Status feature function状态特征函Stochastic gradient descent随机梯度下降Stratified sampling分层采样Structural risk结构风险Structural risk minimization/SRM结构风险最小化Subspace子空间Supervised learning监督学习/有导师学习support vector expansion支持向量展式Support Vector Machine/SVM支持向量机Surrogat loss替代损失Surrogate function替代函数Symbolic learning符号学习Symbolism符号主义Synset同义词集19、T开头的词汇T-Distribution Stochastic Neighbour Embedding/t-SNE T–分布随机近邻嵌入Tensor张量Tensor Processing Units/TPU张量处理单元The least square method最小二乘法Threshold阈值Threshold logic unit阈值逻辑单元Threshold-moving阈值移动Time Step时间步骤Tokenization标记化Training error训练误差Training instance训练示例/训练例Transductive learning直推学习Transfer learning迁移学习Treebank树库Tria-by-error试错法True negative真负类True positive真正类True Positive Rate/TPR真正例率Turing Machine图灵机Twice-learning二次学习20、U开头的词汇Underfitting欠拟合/欠配Undersampling欠采样Understandability可理解性Unequal cost非均等代价Unit-step function单位阶跃函数Univariate decision tree单变量决策树Unsupervised learning无监督学习/无导师学习Unsupervised layer-wise training无监督逐层训练Upsampling上采样21、V开头的词汇Vanishing Gradient Problem梯度消失问题Variational inference变分推断VC Theory VC维理论Version space版本空间Viterbi algorithm维特比算法Von Neumann architecture冯·诺伊曼架构22、W开头的词汇Wasserstein GAN/WGAN Wasserstein生成对抗网络Weak learner弱学习器Weight权重Weight sharing权共享Weighted voting加权投票法Within-class scatter matrix类内散度矩阵Word embedding词嵌入Word sense disambiguation词义消歧23、Z开头的词汇Zero-data learning零数据学习Zero-shot learning零次学习。

面向多目标优化的进化算法研究

面向多目标优化的进化算法研究进化算法是一种通过模拟生物进化过程进行问题求解的算法,它以获取优秀解决方案为目标。

然而,在现实生活中,我们常常面临的是多个目标冲突的优化问题,而传统的进化算法在面对这类问题时往往无法取得令人满意的结果。

因此,研究面向多目标优化的进化算法成为了近年来研究的热点。

面向多目标优化的进化算法(多目标进化算法,MOEA)旨在找到一个解集,使得在目标空间中这些解尽可能地分布于全局的帕累托前沿(Pareto front),即无法找到一个解能在所有目标上优于它。

MOEA的优势在于能够提供一系列平衡解,给决策者提供了更多的选择余地。

在研究面向多目标优化的进化算法时,一个重要的问题是如何评估不同解的优劣程度。

传统的单目标优化算法可以使用一个标量函数来评估解的质量,但在多目标优化中,我们需要一个评估函数来同时考虑多个目标,并给出一个综合的评价。

常用的评价指标包括帕累托前沿覆盖度、空间覆盖率、边缘优势度等。

常见的面向多目标优化的进化算法包括遗传算法、粒子群算法、蚁群算法等。

遗传算法是一种模拟生物进化过程,通过选择、交叉、变异等操作来搜索解空间。

粒子群算法则通过模拟鸟群觅食行为来搜索最优解。

蚁群算法则模拟了蚂蚁在寻找食物的过程,通过信息素和启发函数来引导搜索。

在具体应用中,面向多目标优化的进化算法已经在许多领域取得了广泛的应用。

例如,在工程设计中,我们常常需要考虑多个目标,如成本、质量、效率等。

传统的单目标优化算法往往难以满足这些多个目标的需求,而多目标进化算法能够同时考虑这些目标,找到一个平衡解,能够在不同目标之间做出权衡。

另一个应用领域是组合优化问题,如旅行商问题、物流路径规划等。

这些问题往往涉及到多个冲突的约束条件,使用传统的单目标优化算法很难得到满意的解决方案。

而多目标进化算法可以通过搜索帕累托前沿,提供一系列的可行解,给决策者提供了更多的选择。

然而,面向多目标优化的进化算法也面临一些挑战与问题。

旅行商问题外文文献翻译

旅行商问题外文文献翻译(含:英文原文及中文译文)文献出处:Mask Dorigo. Traveling salesman problem [C]// IEEE International Conference on Evolutionary Computation. IEEE, 2013,3(1), PP:30-41.英文原文Traveling salesman problemMask Dorigo1 IntroductionIn operational research and theoretical computer science, the Traveling Salesman Problem (TSP) is a NP-difficult combinatorial optimization problem. By giving pairs of city-to-city distances, find each city exactly one shortest trip. It is a special case of buyer travel problems.The problem was first elaborated in 1930 as one of the most in-depth research questions in mathematics problems and optimization. It becomes a benchmark for many optimization methods. Although the problem is difficult to calculate, a large number of heuristic detections and exact methods are known to solve certain situations that contain tens of thousands of cities.TSP has many applications, even based on its most essential concept itself, such as planning, logistics, and manufacturing microchips. With minor changes, it has emerged as a sub-problem in many areas, such asDNA sequencing. In these applications, the cities in the TSP represent the customers, welding points, or DNA fragments. The distance in the TSP represents the travel time or cost, or similarity measure between DNA fragments. In many applications, additional constraints, such as limited resources or time windows, make the problem quite difficult. In computational complexity theory, the decision version of the TSP (given a length L, the goal is to judge whether there is any travel shorter than L) belongs to the class of np complete problems. Therefore, it is likely that in the worst case scenario, the operating time required to solve any of the TSP's algorithms increases exponentially with the number of cities.2 HistoryThe origin of the traveling salesman problem is still unclear. A manual of 1832 referred to the problem of travel salesmen, including examples from Germany and Switzerland. However, there is no mathematical treatment in the book. The traveling salesman problem was elaborated in the 19th century by the Irish mathematician W.R. and the English mathematician Thomas Kirkman. Hamilton's Icosian game is a casual game based on finding the Hamilton Circle. The general form of TSP, first studied by mathematicians and especially Karl Menger at the Vienna and Harvard universities in 1930, Karl Menger defined the problem, considered the obvious brute force algorithm, and examined the heuristics of non-nearest neighbors:We express the messenger problem (because in practice, every postman must solve this problem, and many tourists do the same), and its task is to know the limited number of points and their paired distances and find the shortest connection route. Of course, this problem is solvable for a limited number of trials. The rule allows the number of trials to be less than the number of species at a given point, but it is not known. First from the starting point to the nearest point, then from that point to the next point from its nearest point, this rule does not generally constitute the shortest possible line.After Hassler Whitney introduced the TSP at Princeton University, this issue quickly became popular in the European and American scientific communities in the 1950s and 1960s. In Dan Monica, the RAND Corporation's George Dantzig, Delbert Ray Fulkerson, and Selmer M. Johnson contributed to this and they solved TSP as an integer linear programming and an improved cutting plane problem. With these new solution methods, they built an optimal tour that solved an instance with 49 cities, and at the same time proved that no other tour can be shorter. In the following decades, the problem was studied by many researchers in mathematics, computer science, chemistry, physics, and other sciences.Richard M. Karp's research in 1972 showed that the Hamiltonian problem is NP-complete, which means that the TSP is NP-hard. Thisprovides a mathematical explanation as to why it is difficult to find the best travel.In the late 1970s and 1980s, there was a major breakthrough in the problem. Together with others, Gröötschel, Padberg, and Rinaldi used cut-plane methods and branch-and-bound methods to successfully solve instances of up to 2,392 cities.In the 1990s, Applegate, Bixby, Chvátal, and Cook developed the "Concordance" program that was used in many recent solutions. In 1991, Gerhard Reinelt published TSPLIB, which collected examples of different difficulties and was used by many research groups to compare results. In 2005, Cook and others found the best travel through 33,810 cities from a chip layout problem. This is the largest example of solving problems in TSPLIB. For many other examples with millions of cities, problem solving can be found and 1% is guaranteed to be the best one.3 Description3.1 As a Graphic ProblemTSP can be transformed into an undirected weighted graph. For example, the city is the vertex of the graph, the path is the edge of the graph, and the path distance is the length of the edge. This is a minimization problem that starts and ends at a specified vertex, and other vertices have exactly one access. A Hamiltonian circle is one of the best travels of the TSP and is proportional to the distance on each side.Normally, the model is a complete graph (ie each pair of vertices is connected by edges). If there is no path between the two cities, adding an edge of any length that does not affect the best travel becomes a complete picture.3.2 Asymmetry and symmetryIn a symmetrical TSP, the distance between two cities in each opposite direction is the same, forming an undirected graph. This symmetry splits the possible solutions in half. In an asymmetric TSP, there may be no two-way paths or two-way paths different to form a directed graph. Traffic accidents, one-way flights, and tickets of different times and prices are examples of disruptions to this symmetry.3.3 Related issuesAn equivalent proposition in graph theory is to give a complete weighted graph (where the vertices represent cities, the paths represented by the edges, and the weights represent costs or distances) and find the Hamiltonian ring with the smallest weight. Returning to the requirements of the departure city does not change the computational complexity of the problem. Look at the Hamilton route problem.Another related problem is the Bottleneck Traveling Salesman Problem (bottlenecks TSP): Find a Hamiltonian ring with the lowest critical edge weight in the weighted graph. The problem is of considerable practical significance, except that in the obvious areas oftransportation and logistics, a typical example is the drilling of drilling holes in PCBs for the manufacture of printed circuit dispatches. In machining or drilling applications, the “city” is the part or drill hole (different in size), and the “overhead of traverse” contains the time for replacement parts (stand-alone job scheduling problem). The general traveling salesman problem involves the “state,” “one or more” “city,” where the salesman visits each “city” from each “state,” and is also referred to as the “travel politician problem.” Surprisingly, Behzad and Modarres found that the general traveling salesman problem can be transformed into a standard traveling salesman problem with the same number of cities as the modified distance matrix.The problem of sequential ordering involves accessing a series of issues that have a city of priority relations with each other.The traveling salesman problem solves the buyer's purchase of a set of products. He can buy these products in several cities, but at different prices, while not all cities offer the same products. The goal is to find a path in all cities to minimize total expenses (travel expenses + purchase expenses).4 Calculation SolutionsThe traditional ideas for solving NP-hard problems are the following:1) Design the algorithm to find the exact solution (only applicable tosmall problems, which will be completed soon).2) Develop a "sub-optimal" or heuristic algorithm, ie the algorithm seems or may provide a good solution, but it cannot be proven to be optimal.3) It is possible to find solutions or heuristics in special cases of problems (sub-problems).4.1 Computational ComplexityThe problem has been proved to be an NP-difficult problem (more precisely, it is a complex class FP NP ), and the decision problem version (given the cost and a number x to determine whether there is a cheaper path than X) is a NP-complete problem. The bottleneck traveling salesman problem is also an NP-hard problem. Cancelling the "visit only once" condition for each city does not eliminate Np-difficulty, because it is easy to see that the best travel in the flat case must be visited once per city (otherwise, as seen by the triangle inequality, A short cut to skip repeat visits will not increase the length of the tour.)4.2 Approximate ComplexityIn general, finding the shortest traveling salesman problem is a NPO-complete. If the distance is measurable and symmetrical, the problem becomes APX-complete. Christofides's algorithm is within about 1.5.If the limits are 1 and 2 (but still a metric), the approximate ratio is7/6. In the case of asymmetry and metering, only the logarithmic performance can be guaranteed. The best performance of the current algorithm is 0.814log n. If there is a constant factor approximation, it is an open problem.The corresponding maximization problem found the longest traveling salesman to travel around 63/38. If the distance function is symmetric, the longest tour can be approximated by 4/3 with a deterministic algorithm and a random algorithm.4.3 Accurate AlgorithmThe most straightforward approach is to try all permutations (ordered combinations) to see which one is the least expensive (use brute force search). The time complexity of this method is O (n !), the factorial of the number of cities, so this solution, even if only 20 cities are unrealistic. One of the earliest applications of dynamic programming was the Held-Karp algorithm. The time complexity of problem solving was O (n 22n ).Dynamic programming solutions require the time complexity of the index. Use inclusion-exclusion to solve problems in 2n time and space.It seems difficult to improve these times. For example, it is not known whether there is an accurate algorithm for TSP and the time complexity is O (1.9999n).4.4 Other methods include1) Different branch and bound algorithms can be used for TSP in 40-60 cities.2) An improved linear programming algorithm that handles TSPs in 200 cities.3) Branch and bounds and specific cuts are the preferred method of solving a large number of instances. The current method has a record of solving 85,900 city examples (2006).A solution for 15,112 German towns was discovered in TSPLIB in 2001 using the cutting plane method proposed by George Dantzig, Ray Fulkerson, and Selmer M. Johnson in 1954 based on linear programming.Rice University and Princeton University have performed calculations on a network of 110 processors. The total computation time is equivalent to a 2.5 MHz processor working 22.6 years. In 2004, the problem of traveling salesman visited all 24,978 towns in Sweden and was about 72,500 kilometers in length. At the same time, it proved that there is no shorter travel.In March 2005, accessing all 33,810 point of travel salesman problems on a circuit board was solved by using the Concord TSP solver: a tour with a length of 66,048,945 units was found, which at the same time proved that there was no shorter tour. Calculated for approximately 15.7 CPU years (Cook et al., 2006). In April 2006, an instance of 85,900 points using the Concord TSP solver solved the CPU time of more than136 years (2006).4) Heuristic approximation algorithmA variety of heuristic approximation algorithms that can quickly produce good solutions have been developed. The current method can solve very large problems (with millions of cities) in a reasonable amount of time, and only 2–3% of the probability is far from the optimal solution.Constructive heuristics The nearest neighbor (neural network) algorithm (or so-called greedy algorithm) lets the salesman choose the nearest city that has not been visited as his next action goal. The algorithm quickly produces a valid short path. For N cities randomly distributed on one plane, the average path generated by the algorithm is 25% longer than the shortest path. However, there are many cities with special distributions that make the neural network algorithm give the worst path (Gutin, Y eo, and Zverovich, 2002). This is a real problem with symmetric and asymmetric traveling salesman problems (Gutin and Y eo, 2007). Rosenkrantz et al. showed that the neural network algorithm satisfies the triangle inequality when the approximation factor Θ(log| V | ).The approximate ratio of the construction based on the minimum spanning tree is 2 . The Christofides algorithm achieved a ratio of 1.5.Bitonic travel is a monotonic polygon made up of the smallest perimeter of a set of points, which can be calculated efficiently throughdynamic planning.Another constructive heuristic, the twice comparison merge (MTS) (Kahng, Reda 2004), performs two consecutive matches, and the second match is executed after all the first matching edges have been removed. Then merge to produce the final travel.Iterative refinements, pairwise exchanges, or Lin-Kernighan heuristics, pairwise exchanges, or 2-technologies involve the repeated deletion of two edges and the replacement of edges that are not needed to create a new and shorter tour. This is a special case of a K-OPT method. Please note that Lin –Kernighan is often a misname of 2-OPT. Lin –Kernighan is actually a more general approach.K-opt heuristics, a given tour, removes k-disjoint edges. Regroup the remaining parts into one tour, leaving the disjoint sub-tours (ie, the end of a disjointed part together). This actually simplifies the TSP under consideration into a much simpler problem. There are 2K-2 other connection possibilities for each part of the endpoint: 2 K total destination can be connected, so this part cannot be considered. This constrained 2K-TSP can then be resolved using brute force methods to find the lowest partial reorganization of the original part. K-opt is a special case of V-opt or variable-opt technology. The most popular K-opt is 3-opt, which was introduced by Shen Lin of Bell Labs in 1965. There is a 3 - OPT special case where the edges do not intersect (adjacent to bothsides). The v-opt heuristic, the variable-opt method is related to the k-opt method, and is a generalization of the K-opt method. While K -opt removes a fixed number (K) from the original tour, the variable-opt method does not delete fixed-size edge sets. Instead, they continue to grow during the search process. The best method known here is Lin-Kernighan's method (mentioned above as a 2-OPT error). Shen Lin and Brian Kernighan first published their method in 1972, which was the most reliable heuristic for solving traveling salesman problems for nearly two decades. The more advanced variable-opt approach was developed at Bell Labs in the late 1980s by David Johnson and his research team. These methods (sometimes referred to as) add methods from tabu search and evolutionary computation to the Lin-Kernighan method. The basic Lin-Kernighan technique gives results that guarantee at least 3 -opt. The Lin–Kernighan–Johnson method calculates Lin–Kernighan's weekly tour, then disrupts the tour through so-called mutations that move at least four sides and connect them in different ways, and then establishes a new tour with the V-opt method. The v-opt method is widely considered to be the most powerful heuristic to solve a problem, and can solve problems under special circumstances, such as the Hamilton ring problem and other non-decimal TSPs, but other heuristics cannot. Over the years, Lin-Kernighan-Johnson has been shown to be the best solution to all TSP solutions that have been tried.中文译文旅行商问题Mask Dorigo1 引言在运筹学和理论计算机科学中,旅行商问题(TSP )是一个NP-困难的组合优化问题。

多目标优化和进化算法

多目标优化和进化算法

多目标优化(Multi-Objective Optimization,简称MOO)是指在优化问题中存在多个目标函数需要同时优化的情况。

在实际问题中,往往存在多个目标之间相互制约、冲突的情况,因此需要寻找一种方法来平衡这些目标,得到一组最优解,这就是MOO的研究范畴。

进化算法(Evolutionary Algorithm,简称EA)是一类基于生物进化原理的优化算法,其基本思想是通过模拟进化过程来搜索最优解。

进化算法最初是由荷兰学者Holland于1975年提出的,随后经过不断的发展和完善,已经成为了一种重要的优化算法。

在实际应用中,MOO和EA经常被结合起来使用,形成了一种被称为多目标进化算法(Multi-Objective Evolutionary Algorithm,简称MOEA)的优化方法。

MOEA通过模拟生物进化过程,利用选择、交叉和变异等操作来生成新的解,并通过多目标评价函数来评估每个解的优劣。

MOEA能够在多个目标之间进行平衡,得到一组最优解,从而为实际问题提供了有效的解决方案。

MOEA的发展历程可以追溯到20世纪80年代初,最早的研究成果是由美国学者Goldberg和Deb等人提出的NSGA(Non-dominated Sorting Genetic Algorithm),该算法通过非支配排序和拥挤度距离来保持种群的多样性,从而得到一组最优解。

随后,又出现了许多基于NSGA的改进算法,如NSGA-II、

MOEA/D、SPEA等。

总之,MOO和EA是两个独立的研究领域,但它们的结合产生了MOEA这一新的研究方向。

MOEA已经在许多领域得到了广泛应用,如工程设计、决策分析、金融投资等。

Galapagos GH官方说明

Galapagos∙Created by David Rutten∙Send Message View GroupsInformationGalapagos Evolutionary SolverLocation: Planet EarthMembers: 86Latest Activity: 17 hours agoEvolutionary ComputingThe term "Evolutionary Computing" may very well be widely known at this point in time, but they are still very much a programmers tool. 'By programmers for programmers' if you will. The applications out there that apply evolutionary logic are either aimed at solving specific problems, or they are generic libraries that allow other programmers to piggyback along. It is my hope that Galapagos will provide a generic platform for the application of Evolutionary Algorithms to be used on a wide variety of problems by non-programmers.piggyback['pɪgɪbæk]adv.在背上, 在肩上v.把...扛在肩上; 背负式装运Galapagos is available in the current Grasshopper build. For more information on the concept behind Galapagos, please go the the Evolutionary Principles applied to Problem Solving article.Galapagos:最小包装盒相关搜索:Galapagos, 包装盒左:原始右:优化Evolutionary Principles applied to Problem Solving /profiles/blogs/evolutionary-principles∙Posted by David Rutten on September 25, 2010 at 9:00am∙Send Message View David Rutten's blogThis blog post is a rough approximation of the lecture I gave at the AAG10conference in Vienna on September 21st 2010. Naturally it will be quitea different experience as the medium is quite different, but it my hope the basic premise of the lecture remains intact. This post deals with Evolutionary Solvers in general, but I use Rhino, Grasshopper and Galapagos to demonstrate the topics.September 24th 2010David Ruttenpremise[prem·ise || 'premɪs]n.前提, 房屋连地基v.提论, 假定, 预述; 作出前提demonstrate[dem·on·strate || 'demənstreɪt]v.论证, 证明; 示范操作, 展示; 说明, 教; 显示, 表露; 示威Evolutionary Principles applied toProblem SolvingusingThere is nothing particularly new about Evolutionary Solvers or Genetic Algorithms. The first references to this field of computation stem from the early 60's when Lawrence J. Fogel published the landmark paper "On the Organization of Intellect" which sparked the first endeavors into evolutionary computing. The early 70's witnessed further forays with seminal work produced by -among others- Ingo Rechenberg and John HenryHolland. Evolutionary Computation didn't gain popularity beyond the programmer world until Richard Dawkins' book "The Blind Watchmaker" in 1986, which came with a small program that generated a seemingly endless stream of body-plans called "Bio-morphs" based on human selection. Since the 80's the advent of the personal computer has made it possible for individuals without government funding to apply evolutionary principles to personal projects and they have since made it into the common parlance.endeavor[en·deav·or || ɪn'devə]n.努力; 尽力parlance[par·lance || 'pɑrləns /'pɑːl-]n.谈话, 用法, 说法The term "Evolutionary Computing" may very well be widely known at this point in time, but they are still very much a programmers tool. 'By programmers for programmers' if you will. The applications out there that apply evolutionary logic are either aimed at solving specific problems, or they are generic libraries that allow other programmers to piggyback along. It is my hope that Galapagos will provide a generic platform for the application of Evolutionary Algorithms to be used on a wide variety of problems by non-programmers.Pros and ConsBefore we dive into the subject matter too deeply though I feel it is important to highlight some of the (dis)advantages of this particular type of solver, just so you know what to expect. Since we are not living in the best of all possible worlds there is often no such thing as the perfect solution. Every approach has drawbacks and limitations. In the case of Evolutionary Algorithms these are luckily well known and easily understood drawbacks, even though they are not trivial. Indeed, they may well be prohibitive for many a particular problem.trivial['triv·i·al || 'trɪvɪəl]adj.琐细的, 微不足道的, 价值不高的Firstly; Evolutionary Algorithms are slow. Dead slow. It is not unheard of that a single process may run for days or even weeks. Especially complicated set-ups that require a long time in order to solve a single iteration will quickly run out of hand. A light/shadow or acoustic computation for example may easily take a minute per iteration. If we assume we'll need at least 50 generations of 50 individuals each (which is almost certainly an underestimate unless the problem has a very obvious solution.) we're already looking at a two-day runtime.Secondly, Evolutionary Algorithms do not guarantee a solution. Unless a predefined 'good-enough' value is specified, the process will tend to run on indefinitely, never reaching The Answer, or, having reached it, not recognizing it for what it is.All is not bleak and dismal however, Evolutionary Algorithms have strong benefits as well, some of them rather unique amongst the plethora of computational methods. They are remarkably flexible for example, able to tackle a wide variety of problems. There are classes of problems which are by definition beyond the reach of even the best solver implementation and other classes that are very difficult to solve, but these are typically rare in the province of the human meso-world. By and large the problems we encounter on a daily basis fall into the 'evolutionary solvable' category.implementation[im·ple·men·ta·tion || ‚ɪmplɪmen'teɪʃn]n.履行; 成就; 完成category[cat·ego·ry || 'kætɪgərɪ]n.种类; 范畴; 别Evolutionary Algorithms are also quite forgiving. They will happily chew on problems that have been under- or over-constrained or otherwise poorlyformulated. Furthermore, because the run-time process is progressive, intermediate answers can be harvested at practically any time. Unlike many dedicated algorithms, Evolutionary Solvers spew forth a never ending stream of answers, where newer answers are generally of a higher quality than older answers. So even a pre-maturely aborted run will yield something which could be called a result. It might not be a very good result, but it will be a result of sorts.maturely[mə'tʊrlɪ/mə'tʃʊə-]adv.成熟地; 谨慎地; 充分地Finally, Evolutionary Solvers allow -in principle- for a high degree of interaction with the user. This too is a fairly unique feature, especially given the broad range of possible applications. The run-time process is highly transparent and browsable, and there exists a lot of opportunity for a dialogue between algorithm and human. The solver can be coached across barriers with the aid of human intelligence, or it can be goaded into exploring sub-optimal branches and superficially dead-ends.barrier[bar·ri·er || 'bærɪə]n.障碍, 栅栏optimal[op·ti·mal || 'ɑptɪml /'ɒp-]adj.最佳的, 最理想的The ProcessIn this section I shall briefly outline the process of an Evolutionary Solver run. It is a highly simplified version of the remainder of the blog post, and I'll skip over many interesting and even important details. I'll show the process as a series of image frames, where each frame shows the state of the 'population' at a given moment in time. Before I can start however, I need to explain what the image below means.brieflyadv.暂时地; 简要地What you see here is the Fitness Landscape of a particular model. The model contains two variables, meaning two values which are allowed to change. In Evolutionary Computing we refer to variables as genes. As we change Gene A, the state of the model changes and it either becomes better or worse (depending on what we're looking for). So as Gene A changes, the fitness of the entire model goes up or down. But for every value of A, we can also vary Gene B, resulting in better or worse combinations of A and B. Every combination of A and B results in a particular fitness, and this fitness is expressed as the height of the Fitness Landscape. It is the job of the solver to find the highest peak in this landscape.combination[com·bi·na·tion || ‚kɒmbɪ'neɪʃn]n.结合; 团体; 联合; 联盟peak[pɪːk]n.山顶, 山峰; 高峰, 最高点, 顶端; 山; 尖端v.使尖起, 使成峰状; 使达到高峰; 达到高峰; 耸起; 缩小; 消瘦Of course a lot of problems are defined by not just two but many genes, in which case we can no longer speak of a 'landscape' in the strict sense.A model with 12 genes would be a 12-dimensional fitness volume deformed in 13 dimensions instead of a two-dimensional fitness plane deformed in 3 dimensions. As this is impossible to visualize I shall only use one andtwo-dimensional models, but note that when we speak of a "landscape", it might mean something terribly more complex than the above image shows.dimensional[di'men·sion·al || -ʃənl]adj.空间的As the solver starts it has no idea about the actual shape of the fitness landscape. Indeed, if we knew the shape we wouldn't need to bother with all this messy evolutionary stuff in the first place. So the initial step of the solver is to populate the landscape (or "model-space") with a random collection of individuals (or "genomes"). A genome is nothing more than a specific value for each and every gene. In the above case, a genome could for example be {A=0.2 B=0.5}. The solver will then evaluate the fitness for each and every one of these random genomes, giving us the following distribution:messy[mess·y || 'mesɪ]adj.混乱的; 肮脏的; 麻烦的Once we know how fit every genome is (i.e., the elevation of the red dots), we can make a hierarchy from fittest to lamest. We are looking for high-ground in the landscape and it is a reasonable assumption that the higher genomes are closer to potential high-ground than the low ones. Therefore we can kill off the worst performing ones and focus on the remainder:hierarchy['hi·er·arch·y || 'haɪərɑrkɪ/-rɑːk-]n.阶级组织, 僧侣政治, 教士政治assumption[as·sump·tion || ə'sʌmpʃn]n.设想, 假定; 承担; 担任; 夺取potential[po·ten·tial || pəʊ'tenʃl]n.潜在性, 可能性adj.有潜力的, 潜在的, 可能的It is not good enough to simply pick the best performing genome from the initial population and call it quits. Since all the genomes in Generation 0 were picked at random, it is actually quite unlikely that any of them will have hit the jack-pot. What we need to do is breed the best performing genomes in Generation 0 to create Generation 1. When we breed two genomes,their offspring will end up somewhere in the intermediate model-space, thus exploring fresh ground:intermediate[,in·ter'me·di·ate || ‚ɪntə(r)'mɪːdɪeɪtd]n.中间物, 调停者v.作中间人; 干预adj.中间的, 中级的We now have a new population, which is no longer completely random and which is already starting to cluster around the three fitness 'peaks'. All we have to do is repeat the above steps (kill off the worst performing genomes, breed the best-performing genomes) until we have reached the highest peak.intermediate[,in·ter'me·di·ate || ‚ɪntə(r)'mɪːdɪeɪtd]n.中间物, 调停者v.作中间人; 干预adj.中间的, 中级的peak[pɪːk]n.山顶, 山峰; 高峰, 最高点, 顶端; 山; 尖端v.使尖起, 使成峰状; 使达到高峰; 达到高峰; 耸起; 缩小; 消瘦In order to perform this process, an Evolutionary Solver requires five interlocking parts, which I'll discuss in something resembling detail. We could call this the anatomy of the Solver.1.Fitness Function2.Selection Mechanism3.Coupling Algorithm4.Coalescence Algorithm5.Mutation Factoryinterlockn.联锁, 连结v.使连锁; 使连扣; 使连结; 连锁; 连扣; 连结resemble[re·sem·ble || rɪ'zembl]v.相似, 象, 类似anatomy[a'nat·o·my || -mɪ]n.解剖学, 骨骸, 剖析Fitness FunctionsIn biological evolution, the quality known as "Fitness" is actually something of a stumbling block. Usually it is very difficult to say exactly what it means to be fit. It certainly has little or nothing to do with being the strongest, or the fastest, or the most vicious. The reason there are no flying dogs isn't that evolution hasn't gotten around to making any yet, it is that the dog lifestyle is supremely incompatible with flying and the sacrifices required to equip a dog with flight would certainly detract more from the overall fitness than flight would add to it. Fitness is the result of a million conflicting forces. Evolutionary Fitness is the ultimate compromise.stumble[stum·ble || 'stʌmbl]n.绊倒, 失策v.绊倒, 失策, 失足; 使绊倒, 使困惑supremely[su·preme·ly || sʊ'prɪːmlɪ]adv.至上地; 崇高地incompatible[in·com·pat·i·ble || ‚ɪnkəm'pætəbl]adj.不相容的, 矛盾的, 不能并存的sacrifice[sac·ri·fice || 'sækrɪfaɪs]n.祭牲, 祭品; 牺牲; 献祭; 牺牲的行为v.牺牲; 赔本出售; 献出; 献祭; 献祭; 作牺牲打detract[de·tract || dɪ'trækt]v.转移; 减损; 使分心; 诋毁; 减损, 降低A fit individual is on average able to produce more offspring than an unfit one, so we could say that fitness equals the number of genetic children.A better measure yet would be to count the number of grand-children. And a better measure yet would be to count the allele frequency in the gene-pool of the genes that made up the individual in question. But these are all rather ad-hoc definitions that cannot be measured on the spot.At least in Evolutionary Computation, fitness is a very easy concept. Fitness is whatever we want it to be. We are trying to solve a specific problem, and therefore we know what it means to be fit. If for example we are seeking to position a shape so that it may be milled with minimum material waste, there is a very strict fitness function that leaves no room for argument.Let's have a look at the fitness landscape again and let's imagine it represents a model that seeks to encase an object in a minimum volume bounding-box. A minimum bounding-box is the smallest orthogonal box that completely contains any given shape. In the image below, the green shape is encased by two bounding boxes. B has a smaller area than A and is therefore fitter.When we need to mill or 3D-print a shape, it is often a good idea to rotate it until it requires the least amount of material to be used during manufacturing. For a real minimum bounding-box we need at least three rotation axes, but since that will not allow me to display the real fitness landscape, we will restrict ourselves to rotation around the world X and Y axes. So, Gene A will represent the rotation around the X axis and Gene B will represent rotation around the Y axis. There is no need to allow for rotation higher than 360 degrees, so both genes have a limited working domain. (In fact, since we are talking about orthogonal boxes, even a 0-90 degree domain would suffice). Behold rotation around a single axis:When we pick two rotational angles at random, we end up somewhere on the fitness landscape. If we allow for 4 decimal places in the rotation angles it means we can actually generate almost 810,000,000,000 (or 810 billion) unique rotations. It is therefore exceptionally unlikely that we manage to pick a random rotation that yields the best possible answer. But let's say we don't even manage to get close. Let's say we manage to pick a random genome that is at the bad end of the fitness scale, i.e. at the bottom of the fitness landscape. What can we say about the blood-line of this genome? When we track the descendants of a particular genome there is always a large amount of randomness involved due to the workings of the Solver, but there is a strong general tendency that can be distinguished. Just like water will always flow downhill along the steepest slope, so genetic descendants will generally climb uphill along the steepest slope:Every individual tries to maximize its own fitness, as high fitness is rewarded by the solver. And the steepest uphill climb is the fastest way towards high fitness. So if the black sphere represents the location of the ancestral genome, the orange track represents the pathway of its most successful offspring. We can repeat this exercise for a large amount of sample points which will tell us something about how the Solver and the Fitness Landscape interact:Since every genome is pulled uphill, every peak in the fitness landscape has a basin of attraction around it. This basin represents all the points in model-space that will converge upon that specific peak. It is important to notice that the area of the basin is in no way representative of the quality of the peak. Indeed, a very poor solution may have a large basin of attraction while a good peak might have a small catchment area. Problems like this are typically very difficult to solve, as the solution tends to get stuck in local optima. But we'll have a look at problematic fitness functions later on.First, let's have a closer look at the actual fitness landscape for our minimum bounding-box model. I'm afraid it's not quite as simple as the image we've been using so far. I was actually quite surprised how organic and un-box-like the actual fitness landscape for this problem is. Remember, the x-axis rotation is mapped along the Gene A direction and the y-axis rotation along the Gene B direction. So every point on the AB plane represents a unique rotation composed of two angles. The elevation of this point is a direct mapping of the volume of the bounding-box at those two rotation angles:The first thing to notice is that the landscape is periodic. I.e., it repeats itself every 90 degrees in both directions. Also, this landscape is in fact inverted as we're looking for a minimum volume, not a maximum one. Thus, the orange peaks in fact represent the worst solutions to this problem. Note that there are 16 of these peaks in the entire range and that they are rounded. When we look at the bottom of this fitness landscape, we get a rather different view:It would appear that the lowest points in this landscape (the minimum bounding-boxes) are both fewer in number and of a different kind. We only get 8 optimal solutions and they are all very sharp, indicating a somewhat more fragile state.Still, on the whole we have nothing to complain about. All the solutions are of equal quality and there are no local optima at all. We can generalize this landscape to a 2-dimensional graph:No matter where you end up as an ancestral genome, your blood-line will always find its way to a minimum bounding box. There's nowhere for it to get 'stuck'. So it's really just a question about who gets there first. If we look at a slightly more complex fitness graph, it becomes apparent that this need not be the case:This fitness landscape has two kinds of solutions. The high quality sharp ones near the bottom of the graph and the low quality flat ones near the top. The basin of attraction is given for both solutions (yellow for high quality, pink for low quality) and you can see that about half of the model space is attracted to the low quality solutions.An even worse example (flipped upright again this time, so high values indicate good solutions) would be the following fitness landscape:The basins for these peaks are very small indeed and therefore easy to miss by a random sampling of the landscape. As soon as a lucky genome finds the peak on the left, its offspring will rapidly populate the low peak causing the rest of the population to go extinct. It is now even less likely that the better peak on the right will be found. The smaller the basins for solution, the harder it is to solve a problem with an evolutionary algorithm.Another example of a cumbersome problem to solve would be a discontinuous fitness landscape:Even though there are strictly speaking no local optima, there is also no 'improvement' on the plateaus. A genome which finds itself in the middleof one of these horizontal patches doesn't know where to go. If it takes a step to the left, nothing changes. If it takes a step to the right, nothing changes. There's no 'pressure' in this fitness landscape, so all the genomes will wander about aimlessly, until one of them has the good fortune to suddenly step onto a higher plateau. At this point it will quickly dominate the gene-pool and the wandering starts again until the next plateau is accidentally found.Even worse than this though is a landscape that has a high degree of noise or chaos. A landscape may be continuous and yet feature so much detail that it becomes impossible to make any intelligible pronunciations regarding the fitness of a local patch:In a landscape like this, mommy and daddy may both be very similar and both be very fit, but when they mate the offspring might end up in one of the fissures. A landscape like this defies navigation.Selection MechanismsBiological Evolution proceeds by Natural Selection. The ruthless force identified by Darwin as the arbiter of progress. Put simply, Natural Selection affects the direction of the gene-pool over time by regulating who gets to mate. In extreme cases mating is prevented because a specific genome is so unfit that the bearer cannot survive until reproductive age. Another rather extreme case would be sterility. However, there's a myriad ways in which Natural Selection can make it difficult or impossible for certain individuals to pass on their genetic footprint.However, Natural Selection isn't the only game in town. For a long time now humans have been using Artificial Selection in order to breed specific characteristics into a (sub)species. When we try to solve problems using an Evolutionary Solver, we always use some form of artificial selection. There's no such thing as sex or gender in the computer. The process of selection is also much simpler than in nature, as there is basically only one question that needs to be answered: Who gets to mate?Allow me to enumerate the mechanisms for parent selection that are available in Galapagos. This is only a small subset of the selection algorithms that are possible, but they seem to cover the basics rather well.First off, we have Isotropic Selection, which is the simplest kind of algorithm you can imagine. In fact, it is the absence of a selection algorithm. In Isotropic Selection everyone gets to mate:No matter where you find yourself on this fitness graph, your chances of ending up in a mating couple are constant. You might think that this is a particularly pointless selection strategy as it does nothing to further the evolution of the gene-pool. But it is not without precedent in nature. Take for example wind-pollination or coral spawning. If you're a sexually functioning member of such a species, you get to play ball come mating season. Another example would be females in a walrus colony. Every female in a colony gets to breed with the dominant male, no matter how fit or unfit she is. Isotropic Selection is certainly not without function either. For one, it dampens the speed with which a population runs uphill. It therefore acts as a safe-guard against a premature colonization of a local -and possibly inferior- optimum.Another mechanism available in Galapagos is Exclusive Selection, where only the top N% of the population get to mate:If you're lucky enough to be in the top N%, you'll likely have multiple offspring. A good analogy in nature for Exclusive Selection would be Walrus males. There's only a few harems to go around and far too many males to assign them all (a harem of one female after all is not really a harem). The flunkies get to sit on the side-line without a single chance to father a walrus baby, doing whatever it is walruses do when they can't get any action.Another common pattern in nature is Biased Selection, where the chance of mating increases as the fitness increases. This is something we typically see with species that form stable couples. Everyone is basically capable of finding a mate, but the really attractive individuals manage to get a lot of hanky-panky on the side, thus increasing their chancesof becomes genetic founders for future generations. Biased Selection can be amplified by using power functions, which have the effect of flattening or exaggerating the curve.Coupling AlgorithmsCoupling is the process of finding mates. Once a genome has been elected to mate by the active Selection Algorithm, it has to pick a mate from the population to complete the act. There are of course many ways in which mate selection could occur, but Galapagos at the moment only allows one; selection by genomic distance. In order to explain this in detail, I should first tell you how a Genome Map works. Thisis a Genome Map. It displays all the genomes (individuals) in a certain population as dots on a grid. The distance between two genomes on the grid is roughly analogous with the distance between the genomes in gene-space.I say roughly because it is in fact impossible to draw a map with exact distances. A single genome is defined by a number of genes. We assume that all the genomes in a species have the same number of genes (this is not technically a limitation of Evolutionary Algorithms, even though it is currently a limitation of Galapagos). Therefore the distance between two genomes is an N-Dimensional value, where N equals the number of genes. It is not possible to accurately display an N-Dimensional point cloud on a 2-Dimensional screen so the Genome Map is only a coarse approximation. It also follows that the axes of this graph have no meaning whatsoever, the only information a Genome Map conveys is which genomes are more or less similar (close together) and which genomes are more or less different (far apart).Imagine you are an individual that has been selected for mating (yay). The population is well distributed and you are somewhere near the average (I'm sure you are a wildly original and delightful person in real life, but for the time being try to imagine you are in fact sort of average):That red dot is you. Who looks attractive?You could of course limit your search of potential partners to your immediate neighbourhood. This means that you mate with individuals who are very much like you and it means your offspring will also be very much like you.When this is taken to extremes we call it incestuous mating behaviour and it can become detrimental pretty quickly. Biological incest has a nasty habit of expressing unhealthy but recessive genes, but in the digital world of Evolutionary Solvers the biggest risk of incest is a rapid decline in population diversity. Low diversity decreases the chances of finding alternate solution basins and thus it risks getting stuck in local optima.The other extreme is to exclude everyone near you. You'll often hear it said that opposites attract, but that's true only up to a point. At some point the genomes at the other end of the scale become so different as to be incompatible.This is called zoophilic mating and it can be equally detrimental. This is especially true when a population is not a single group of genomes, but in fact contains multiple sub-species, each of which is climbing their own little fitness peak.You definitely do not want to mate with a member in a different sub-species, as the offspring would likely land somewhere in the middle. And since these two species are climbing different peaks, "in the middle" actually puts you in a fitness valley.It would seem that the best option is to balance in-breeding andout-breeding. To select individuals that are not too close and not too far. In Galapagos you can specify an in-breeding factor (between -100% and +100%, total out-breeding vs. total in-breeding respectively) that allows you to guide this relative offset:Note that mate selection at present completely ignores mate fitness. This is something that needs looking into for future releases, but even without any advanced selection algorithms the solver still works.Coalescence AlgorithmsOnce a mate has been selected, offspring needs to be generated. On the genetic level this is anything but fun and games. The biological process of gene recombination is horrendously complicated and itself subject to evolution (meiotic drive for example). The digital variant is much more basic. This is partially because genes in evolutionary algorithms are not very similar to biological genes. Ironically, biological genes are far more digital than programmatic genes. As Mendel discovered in the 1860's,。

多目标进化算法性能评价指标综述

多目标进化算法性能评价指标综述多目标进化算法(Multi-objective Evolutionary Algorithms,MOEAs)是一类优化算法,用于解决具有多个目标函数的多目标优化问题。

MOEAs在解决多目标优化问题上具有很强的适应性和鲁棒性,并在许多领域有着广泛的应用。

为了评价MOEAs的性能,人们提出了许多指标。

这些指标可以分为两类:一类是针对解集的评价指标,另一类是针对算法的评价指标。

首先,针对解集的评价指标主要用于从集合的角度评价解集的性能。

常见的解集评价指标有:1. Pareto前沿指标:衡量解集的覆盖度和质量。

Pareto前沿是指在多目标优化问题中不可被改进的解的集合。

Pareto前沿指标包括Hypervolume、Generational Distance、Inverted Generational Distance等。

2. 支配关系指标:衡量解集中解之间支配关系的分布情况。

例如,Nondominated Sorting和Crowding Distance。

3. 散度指标:衡量解集中解的多样性。

例子有Entropy和Spacing 等。

4.非支配解比例:衡量解集中非支配解的比例。

非支配解是指在解集中不被其他解支配的解。

除了解集评价指标,人们还提出了一些用于评价MOEAs性能的算法评价指标,例如:1.收敛性:衡量算法是否能找到接近最优解集的解集。

2.多样性:衡量算法是否能提供多样性的解。

3.计算效率:衡量算法是否能在较少的计算代价下找到高质量的解集。

除了上述指标,还有一些用于评价MOEAs性能的进阶指标,例如:1.可行性:衡量解集中的解是否满足的问题的约束条件。

2.动态性:衡量算法在动态环境中的适应性。

3.可解释性:衡量算法生成的解是否易于被解释和理解。

以上只是一些常用的指标,根据具体的问题和应用场景,还可以针对性地定义其他指标来评价MOEAs性能。

综上所述,MOEAs性能的评价是一个多方面的任务,需要综合考虑解集的质量、表示多样性以及算法的计算效率等方面。