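Wooldridge, Introductory Econometrics: A Modern Approach

The Credibility Revolution in Applied Econometric Research

Over the past several decades, econometrics, an important branch of economics, has gone through two credibility revolutions.

These revolutions fundamentally changed how we understand and practice credibility in applied econometric research.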

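The first credibility revolution is associated with the Modigliani-Miller (MM) theorem, the Nobel Prize-winning research of Franco Modigliani and Merton Miller, first published in 1958.

The MM theorem offered a new perspective on firms' investment decisions and financial policy, and it changed the direction of economists' research on corporate financial policy.

In its basic form, the theorem states that in the absence of taxes, bankruptcy costs, agency costs, and similar frictions, a firm's market value does not depend on how it finances its investments, that is, on its capital structure.

This work laid an important theoretical foundation for later research in corporate finance, asset pricing, macroeconomics, and related fields.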

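The second credibility revolution is placed in the early 21st century and associated with the macroeconomic theory of Ben Bernanke and others.

They emphasized the importance of monetary policy for stabilizing the economy and proposed a theoretical framework, built on the "liquidity trap" and the "natural rate of unemployment," to explain business cycles.

This framework stresses the need for the central bank to stabilize the economy by controlling the money supply, as well as the effect that structural factors, such as imperfections in labor and financial markets, have on macroeconomic stability.

This work laid an important theoretical foundation for later research on monetary policy making, business cycle analysis, and international economic policy coordination.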

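Today, we are on the verge of a new credibility revolution.

Emerging technologies, led by big data and artificial intelligence, are having a profound effect on applied econometric research.

Big data lets us process and analyze far more data, while artificial intelligence helps us uncover and understand the economic patterns and trends behind those data more deeply.

Big data has already changed the paradigm of applied econometric research.

Traditional econometric research usually relies on assumptions that simplify the complexity of the real world.

Big data, by contrast, gives us a richer and more realistic view of a complex world, allowing us to characterize and understand economic phenomena more accurately.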

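Introductory Econometrics (Wooldridge): Graduate Entrance Exam Review Notes, Part 2

Chapter 1 The Nature of Econometrics and Economic Data

1.1 Review Notes

I. What Is Econometrics

Econometrics builds on economic theory and uses mathematical and statistical methods to construct econometric models and quantitatively analyze the relationships among economic variables.

In econometric analysis, we first use economic data to estimate the unknown parameters of the model and then test the model. Once the model passes these tests, it can also be used for forecasting.

The data used in econometric analysis come in two forms, experimental and nonexperimental:

(1) Nonexperimental data are data not obtained through controlled experiments on individuals, firms, or segments of the economy.

Nonexperimental data are sometimes called observational data or retrospective data, to emphasize that the researcher is a passive collector of the data.

(2) Experimental data are usually obtained through experiments. However, social experiments are often infeasible or prohibitively expensive, so experimental data are much harder to obtain in the social sciences.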

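II. Steps in Empirical Economic Analysis

Empirical analysis uses data to test a theory or to estimate a relationship.

1. Careful formulation of the question of interest. The question might involve testing a certain aspect of an economic theory, or testing the effects of a government policy.

2. Construction of an economic model. An economic model consists of mathematical equations that describe various economic relationships.

3. Turning the economic model into an econometric model. First, consider how econometric models relate to economic models.

Unlike economic analysis, econometric analysis requires the functional form to be specified in advance, and an econometric model usually includes an unobserved error term.

By specifying a particular econometric model, we pin down the precise mathematical relationship among the economic variables. This resolves the ambiguity inherent in the economic model.

In most cases, econometric analysis begins by specifying an econometric model, without dwelling on the details of how the model was constructed.

Once an econometric model has been specified, the hypotheses of interest can be stated in terms of the unknown parameters.

4. Collection of data on the relevant variables.

5. Use of econometric methods to estimate the parameters of the econometric model and to formally test the hypotheses of interest. In some cases, the econometric model is also used to test a theory or to study the effects of a policy.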
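The following is a hypothetical illustration of steps 2 to 5. The variables are our assumption and do not come from these notes. Suppose the economic model says that a worker's hourly wage depends on education, work experience, and job training. Choosing a linear functional form and adding an error term $u$ turns it into an econometric model. The question "does training affect wages?" then becomes a hypothesis about a single unknown parameter:

$$\begin{aligned} &\text{Economic model:} && wage = f(educ,\ exper,\ training) \\ &\text{Econometric model:} && wage = \beta_0 + \beta_1\, educ + \beta_2\, exper + \beta_3\, training + u \\ &\text{No training effect:} && H_0\colon\ \beta_3 = 0 \end{aligned}$$

Here $u$ collects unobserved factors such as innate ability or the quality of education.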

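III. The Structure of Economic Data

1. Cross-sectional data

(1) A cross-sectional data set consists of a sample of individuals, households, firms, cities, states, countries, or a variety of other units, taken at a given point in time.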

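Wooldridge Econometrics Notes

Wooldridge Econometrics refers to the applied econometrics covered in Wooldridge's textbook, which combines the theory and methods of economics and mathematical statistics.

1. Introduction
- Definition of econometrics: the use of mathematical statistics and econometric models to analyze economic problems.

- Economic models consist of equations that describe economic systems and theoretical relationships.

- The goal of econometrics is to estimate and test the parameters of economic models.

2. Statistical Foundations
- Hypothesis testing: using statistical methods to test economic theories.

- Ordinary least squares (OLS): a method for estimating the unknown parameters of an economic model.

- Properties of the OLS estimator and the assumptions behind them: unbiasedness, consistency, and efficiency.

3. Simple Regression Model
- Simple linear regression model: a linear relationship between one independent variable and one dependent variable.

- Estimation and model evaluation: OLS estimates, t statistics, R-squared, and adjusted R-squared.

- Interpretation and prediction: using the estimated model to interpret relationships and make predictions.

4. Multiple Regression Model
- Multiple linear regression model: a linear relationship between several independent variables and one dependent variable.

- Estimation and model evaluation: OLS estimates, t statistics, F statistics, R-squared, and adjusted R-squared.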

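- Control variables: including control variables accounts for confounding factors, which gives more credible estimates of the effect of interest.

5. Dynamic Models
- Difference equations: describe how variables evolve over time.

- Lagged variables and lagged dependent variables: lags are introduced to capture relationships among variables over time.

- Dynamic causal relationships: explaining the short-run and long-run relationships among economic variables.

6. Panel Data Models
- Panel data: data sets containing observations on multiple individuals over multiple time periods.

- Fixed effects and random effects models: account for individual effects and time effects in panel data.

- Individual and time fixed effects: control for the influence of time-invariant individual characteristics and of period-specific shocks on the relationships between variables.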

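7. Instrumental Variables Estimation
- Instrumental variables: variables used to address the endogeneity problem.

- Choosing and testing instruments: selecting valid instruments to estimate models with endogenous explanatory variables.

- Two-stage least squares (2SLS): using instruments to estimate models with endogenous explanatory variables.

8. Nonlinear Regression Models
- Nonlinear functions: capturing the complexity of real-world economic relationships.

- Estimating nonlinear models: using nonlinear least squares (NLS).

- Interpretation and prediction: using the estimated nonlinear model to interpret relationships and make predictions.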
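Item 7 can be made concrete with the simplest case. The sketch below assumes one endogenous regressor $x$, one instrument $z$, and hypothetical data. In this just-identified case, 2SLS reduces to the IV formula $\hat\beta_1 = \widehat{\mathrm{Cov}}(z, y)/\widehat{\mathrm{Cov}}(z, x)$. The code computes the estimate both ways to show that the two coincide. All function names are illustrative.

```python
def mean(v):
    return sum(v) / len(v)

def cov(a, b):
    """Sample covariance with divisor n - 1."""
    ma, mb = mean(a), mean(b)
    return sum((ai - ma) * (bi - mb) for ai, bi in zip(a, b)) / (len(a) - 1)

def iv_estimate(y, x, z):
    """Just-identified IV: slope = Cov(z, y) / Cov(z, x); intercept from the means."""
    czx = cov(z, x)
    if czx == 0:
        raise ValueError("instrument z is uncorrelated with x in the sample")
    b1 = cov(z, y) / czx
    return mean(y) - b1 * mean(x), b1

def two_stage_least_squares(y, x, z):
    """The same point estimate computed as two explicit OLS stages.

    Only point estimates are shown; valid 2SLS standard errors need residuals
    computed with x, not with the first-stage fitted values.
    """
    # Stage 1: OLS of x on z; keep the fitted values x_hat.
    g1 = cov(z, x) / cov(z, z)
    g0 = mean(x) - g1 * mean(z)
    x_hat = [g0 + g1 * zi for zi in z]
    # Stage 2: OLS of y on x_hat.
    b1 = cov(x_hat, y) / cov(x_hat, x_hat)
    return mean(y) - b1 * mean(x_hat), b1

# Hypothetical data: x is treated as endogenous and z as its instrument.
z = [1.0, 2.0, 3.0, 4.0, 5.0]
x = [2.0, 1.0, 4.0, 3.0, 6.0]
y = [5.3, 2.6, 9.4, 6.8, 13.1]
print(iv_estimate(y, x, z))
print(two_stage_least_squares(y, x, z))   # same numbers up to rounding
```

The two functions agree because the first-stage fitted values are a linear function of $z$, so the second-stage slope simplifies algebraically to the IV ratio.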

Wooldridge, Introductory Econometrics

(3) Therefore, given a value $X_i$ of income $X$, we obtain the conditional mean, or conditional expectation, of consumption expenditure $Y$: $E(Y \mid X = X_i)$.

(4) In this example: $E(Y \mid X = 80) = 65$.


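A scatter plot shows that, as income increases, consumption also increases "on average," and the conditional means of $Y$ all lie on a straight line with positive slope. This line is called the population regression line.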

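$$E(y \mid x) = \beta_0 + \beta_1 x$$

[Figure: population regression line $E(y \mid x)$, with values marked at $x_1 = 5$ and $x_2 = 10$]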
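The conditional mean $E(Y \mid X = X_i)$ can be estimated from data by averaging $Y$ within each value of $X$. The sketch below uses hypothetical (income, consumption) pairs, chosen so that the group at $X = 80$ averages 65 as in the example. They are not the original table.

```python
from collections import defaultdict

def conditional_means(pairs):
    """Average of y within each distinct value of x: a sample analogue of E(Y | X = x)."""
    groups = defaultdict(list)
    for x, y in pairs:
        groups[x].append(y)
    return {x: sum(ys) / len(ys) for x, ys in sorted(groups.items())}

# Hypothetical (income X, consumption Y) observations.
data = [(80, 55), (80, 60), (80, 65), (80, 70), (80, 75),
        (100, 65), (100, 70), (100, 74), (100, 80), (100, 85)]
means = conditional_means(data)
print(means)  # {80: 65.0, 100: 74.8}
```

If the conditional means at every income level fall on one straight line, that line is the population regression line described above.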

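For the economic problem under study, the population regression line $E(Y_i \mid X_i) = \beta_0 + \beta_1 X_i$ is usually unobservable. It can be estimated from a sample drawn from the population.

Sample regression function: $\hat{Y}_i = \hat\beta_0 + \hat\beta_1 X_i$

or: $Y_i = \hat\beta_0 + \hat\beta_1 X_i + e_i$, where $e_i = Y_i - \hat{Y}_i$ is the residual.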
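The OLS estimates behind the sample regression function can be computed directly. The sketch below uses hypothetical income and consumption data and applies $\hat\beta_1 = \sum(X_i - \bar X)(Y_i - \bar Y)/\sum(X_i - \bar X)^2$, $\hat\beta_0 = \bar Y - \hat\beta_1 \bar X$, and $e_i = Y_i - \hat Y_i$. The last line checks two algebraic properties of OLS: the residuals sum to zero, and they are uncorrelated with $X$ in the sample.

```python
def ols_simple(xs, ys):
    """Return (b0, b1, fitted, residuals) for the OLS line Y_hat = b0 + b1 * X."""
    n = len(xs)
    xbar, ybar = sum(xs) / n, sum(ys) / n
    sxy = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys))
    sxx = sum((x - xbar) ** 2 for x in xs)
    b1 = sxy / sxx
    b0 = ybar - b1 * xbar
    fitted = [b0 + b1 * x for x in xs]
    resid = [y - f for y, f in zip(ys, fitted)]
    return b0, b1, fitted, resid

income = [80, 100, 120, 140, 160]          # hypothetical X
consumption = [65, 75, 90, 100, 115]       # hypothetical Y
b0, b1, fitted, e = ols_simple(income, consumption)
print(b0, b1)                              # 14.0 0.625
print(sum(e), sum(x * r for x, r in zip(income, e)))  # both zero up to rounding
```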

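② $y = \beta_0 + \beta_1 x + u$

$u$ is the error term, or disturbance. It represents factors other than $x$ that affect $y$.

- Meaning of "linear" regression: $y$ and $x$ need not be linearly related. As long as some transformation of $y$ and some transformation of $x$ are related linearly in the parameters, the model is called a linear model.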
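The constant-elasticity model $y = A x^{\beta_1}$ is one example. It is nonlinear in $x$, but taking logs gives $\log y = \log A + \beta_1 \log x$, which is linear in the parameters $(\log A, \beta_1)$. The sketch below uses exact hypothetical data generated with $A = 3$ and $\beta_1 = 0.5$ and recovers both parameters by running OLS on the transformed variables.

```python
import math

def ols_line(xs, ys):
    """OLS intercept and slope for a simple regression of ys on xs."""
    n = len(xs)
    xbar, ybar = sum(xs) / n, sum(ys) / n
    b1 = (sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys))
          / sum((x - xbar) ** 2 for x in xs))
    return ybar - b1 * xbar, b1

# Hypothetical exact data from y = A * x**beta1 with A = 3 and beta1 = 0.5.
x = [1.0, 2.0, 4.0, 8.0, 16.0]
y = [3.0 * v ** 0.5 for v in x]

# Transform both sides: log y = log A + beta1 * log x is linear in (log A, beta1).
b0, b1 = ols_line([math.log(v) for v in x], [math.log(v) for v in y])
print(math.exp(b0), b1)   # approximately 3.0 and 0.5
```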


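Stochastic specification of the population regression function

- For a given household, how should we describe the relationship between disposable income and consumption expenditure?

- The variable on the right-hand side of the equation is called the explanatory variable, independent variable, right-hand-side variable, regressor, covariate, or control variable.

- The equation $y = \beta_0 + \beta_1 x + u$ has only one non-constant regressor. We call it the simple regression model, the two-variable regression model, or the bivariate regression model.

Purpose of regression analysis

a. Functional form: it can be linear or nonlinear.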

伍德里奇《计量经济学导论--现代观点》1

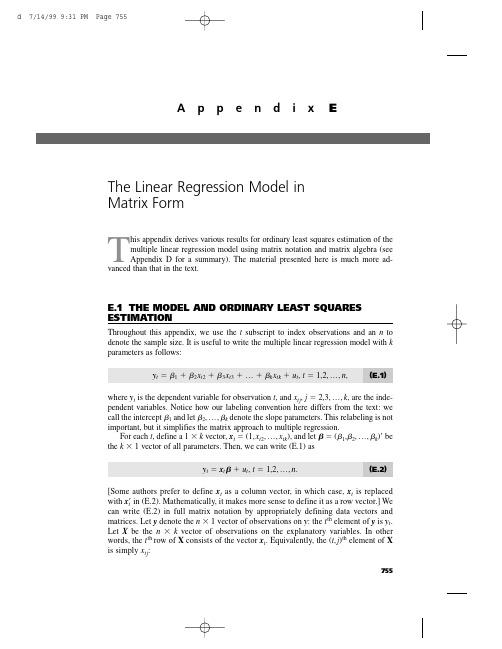

Appendix E: The Linear Regression Model in Matrix Form

This appendix derives various results for ordinary least squares estimation of the multiple linear regression model using matrix notation and matrix algebra (see Appendix D for a summary). The material presented here is much more advanced than that in the text.

E.1 THE MODEL AND ORDINARY LEAST SQUARES ESTIMATION

Throughout this appendix, we use the $t$ subscript to index observations and an $n$ to denote the sample size. It is useful to write the multiple linear regression model with $k$ parameters as follows:

$$y_t = \beta_1 + \beta_2 x_{t2} + \beta_3 x_{t3} + \dots + \beta_k x_{tk} + u_t, \quad t = 1, 2, \dots, n, \tag{E.1}$$

where $y_t$ is the dependent variable for observation $t$, and $x_{tj}$, $j = 2, 3, \dots, k$, are the independent variables. Notice how our labeling convention here differs from the text: we call the intercept $\beta_1$ and let $\beta_2, \dots, \beta_k$ denote the slope parameters. This relabeling is not important, but it simplifies the matrix approach to multiple regression.

For each $t$, define a $1 \times k$ vector, $\mathbf{x}_t = (1, x_{t2}, \dots, x_{tk})$, and let $\boldsymbol{\beta} = (\beta_1, \beta_2, \dots, \beta_k)'$ be the $k \times 1$ vector of all parameters. Then, we can write (E.1) as

$$y_t = \mathbf{x}_t \boldsymbol{\beta} + u_t, \quad t = 1, 2, \dots, n. \tag{E.2}$$

[Some authors prefer to define $\mathbf{x}_t$ as a column vector, in which case $\mathbf{x}_t$ is replaced with $\mathbf{x}_t'$ in (E.2). Mathematically, it makes more sense to define it as a row vector.] We can write (E.2) in full matrix notation by appropriately defining data vectors and matrices. Let $\mathbf{y}$ denote the $n \times 1$ vector of observations on $y$: the $t$th element of $\mathbf{y}$ is $y_t$. Let $\mathbf{X}$ be the $n \times k$ matrix of observations on the explanatory variables. In other words, the $t$th row of $\mathbf{X}$ consists of the vector $\mathbf{x}_t$. Equivalently, the $(t, j)$th element of $\mathbf{X}$ is simply $x_{tj}$:

$$\underset{n \times k}{\mathbf{X}} \equiv \begin{pmatrix} \mathbf{x}_1 \\ \mathbf{x}_2 \\ \vdots \\ \mathbf{x}_n \end{pmatrix} = \begin{pmatrix} 1 & x_{12} & x_{13} & \cdots & x_{1k} \\ 1 & x_{22} & x_{23} & \cdots & x_{2k} \\ \vdots & \vdots & \vdots & & \vdots \\ 1 & x_{n2} & x_{n3} & \cdots & x_{nk} \end{pmatrix}.$$

Finally, let $\mathbf{u}$ be the $n \times 1$ vector of unobservable disturbances. Then, we can write (E.2) for all $n$ observations in matrix notation:

$$\mathbf{y} = \mathbf{X}\boldsymbol{\beta} + \mathbf{u}. \tag{E.3}$$

Remember, because $\mathbf{X}$ is $n \times k$ and $\boldsymbol{\beta}$ is $k \times 1$, $\mathbf{X}\boldsymbol{\beta}$ is $n \times 1$.

Estimation of $\boldsymbol{\beta}$ proceeds by minimizing the sum of squared residuals, as in Section 3.2. Define the sum of squared residuals function for any possible $k \times 1$ parameter vector $\mathbf{b}$ as

$$\mathrm{SSR}(\mathbf{b}) \equiv \sum_{t=1}^{n} (y_t - \mathbf{x}_t \mathbf{b})^2.$$

The $k \times 1$ vector of ordinary least squares estimates, $\hat{\boldsymbol{\beta}} = (\hat\beta_1, \hat\beta_2, \dots, \hat\beta_k)'$, minimizes $\mathrm{SSR}(\mathbf{b})$ over all possible $k \times 1$ vectors $\mathbf{b}$. This is a problem in multivariable calculus. For $\hat{\boldsymbol{\beta}}$ to minimize the sum of squared residuals, it must solve the first order condition

$$\partial \mathrm{SSR}(\hat{\boldsymbol{\beta}}) / \partial \mathbf{b} \equiv \mathbf{0}. \tag{E.4}$$

Using the fact that the derivative of $(y_t - \mathbf{x}_t \mathbf{b})^2$ with respect to $\mathbf{b}$ is the $1 \times k$ vector $-2(y_t - \mathbf{x}_t \mathbf{b})\mathbf{x}_t$, (E.4) is equivalent to

$$\sum_{t=1}^{n} \mathbf{x}_t'(y_t - \mathbf{x}_t \hat{\boldsymbol{\beta}}) \equiv \mathbf{0}. \tag{E.5}$$

(We have divided by $-2$ and taken the transpose.) We can write this first order condition as

$$\begin{aligned} \sum_{t=1}^{n} (y_t - \hat\beta_1 - \hat\beta_2 x_{t2} - \dots - \hat\beta_k x_{tk}) &= 0 \\ \sum_{t=1}^{n} x_{t2}(y_t - \hat\beta_1 - \hat\beta_2 x_{t2} - \dots - \hat\beta_k x_{tk}) &= 0 \\ &\ \,\vdots \\ \sum_{t=1}^{n} x_{tk}(y_t - \hat\beta_1 - \hat\beta_2 x_{t2} - \dots - \hat\beta_k x_{tk}) &= 0, \end{aligned}$$

which, apart from the different labeling convention, is identical to the first order conditions in equation (3.13). We want to write these in matrix form to make them more useful. Using the formula for partitioned multiplication in Appendix D, we see that (E.5) is equivalent to

$$\mathbf{X}'(\mathbf{y} - \mathbf{X}\hat{\boldsymbol{\beta}}) = \mathbf{0} \tag{E.6}$$

or

$$(\mathbf{X}'\mathbf{X})\hat{\boldsymbol{\beta}} = \mathbf{X}'\mathbf{y}. \tag{E.7}$$

It can be shown that (E.7) always has at least one solution. Multiple solutions do not help us, as we are looking for a unique set of OLS estimates given our data set. Assuming that the $k \times k$ symmetric matrix $\mathbf{X}'\mathbf{X}$ is nonsingular, we can premultiply both sides of (E.7) by $(\mathbf{X}'\mathbf{X})^{-1}$ to solve for the OLS estimator $\hat{\boldsymbol{\beta}}$:

$$\hat{\boldsymbol{\beta}} = (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{y}. \tag{E.8}$$

This is the critical formula for matrix analysis of the multiple linear regression model. The assumption that $\mathbf{X}'\mathbf{X}$ is invertible is equivalent to the assumption that $\operatorname{rank}(\mathbf{X}) = k$, which means that the columns of $\mathbf{X}$ must be linearly independent. This is the matrix version of MLR.4 in Chapter 3.

Before we continue, (E.8) warrants a word of warning.
It is tempting to simplify the formula for $\hat{\boldsymbol{\beta}}$ as follows:

$$\hat{\boldsymbol{\beta}} = (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{y} = \mathbf{X}^{-1}(\mathbf{X}')^{-1}\mathbf{X}'\mathbf{y} = \mathbf{X}^{-1}\mathbf{y}.$$

The flaw in this reasoning is that $\mathbf{X}$ is usually not a square matrix, and so it cannot be inverted. In other words, we cannot write $(\mathbf{X}'\mathbf{X})^{-1} = \mathbf{X}^{-1}(\mathbf{X}')^{-1}$ unless $n = k$, a case that virtually never arises in practice.

The $n \times 1$ vectors of OLS fitted values and residuals are given by

$$\hat{\mathbf{y}} = \mathbf{X}\hat{\boldsymbol{\beta}}, \qquad \hat{\mathbf{u}} = \mathbf{y} - \hat{\mathbf{y}} = \mathbf{y} - \mathbf{X}\hat{\boldsymbol{\beta}}.$$

From (E.6) and the definition of $\hat{\mathbf{u}}$, we can see that the first order condition for $\hat{\boldsymbol{\beta}}$ is the same as

$$\mathbf{X}'\hat{\mathbf{u}} = \mathbf{0}. \tag{E.9}$$

Because the first column of $\mathbf{X}$ consists entirely of ones, (E.9) implies that the OLS residuals always sum to zero when an intercept is included in the equation, and that the sample covariance between each independent variable and the OLS residuals is zero. (We discussed both of these properties in Chapter 3.)

The sum of squared residuals can be written as

$$\mathrm{SSR} = \sum_{t=1}^{n} \hat u_t^2 = \hat{\mathbf{u}}'\hat{\mathbf{u}} = (\mathbf{y} - \mathbf{X}\hat{\boldsymbol{\beta}})'(\mathbf{y} - \mathbf{X}\hat{\boldsymbol{\beta}}). \tag{E.10}$$

All of the algebraic properties from Chapter 3 can be derived using matrix algebra. For example, we can show that the total sum of squares is equal to the explained sum of squares plus the sum of squared residuals [see (3.27)]. The use of matrices does not provide a simpler proof than summation notation, so we do not provide another derivation.

The matrix approach to multiple regression can be used as the basis for a geometrical interpretation of regression. This involves mathematical concepts that are even more advanced than those we covered in Appendix D.
[See Goldberger (1991) or Greene (1997).]

E.2 FINITE SAMPLE PROPERTIES OF OLS

Deriving the expected value and variance of the OLS estimator $\hat{\boldsymbol{\beta}}$ is facilitated by matrix algebra, but we must show some care in stating the assumptions.

ASSUMPTION E.1 (LINEAR IN PARAMETERS)
The model can be written as in (E.3), where $\mathbf{y}$ is an observed $n \times 1$ vector, $\mathbf{X}$ is an $n \times k$ observed matrix, and $\mathbf{u}$ is an $n \times 1$ vector of unobserved errors or disturbances.

ASSUMPTION E.2 (ZERO CONDITIONAL MEAN)
Conditional on the entire matrix $\mathbf{X}$, each error $u_t$ has zero mean: $E(u_t \mid \mathbf{X}) = 0$, $t = 1, 2, \dots, n$. In vector form,

$$E(\mathbf{u} \mid \mathbf{X}) = \mathbf{0}. \tag{E.11}$$

This assumption is implied by MLR.3 under the random sampling assumption, MLR.2. In time series applications, Assumption E.2 imposes strict exogeneity on the explanatory variables, something discussed at length in Chapter 10. This rules out explanatory variables whose future values are correlated with $u_t$; in particular, it eliminates lagged dependent variables. Under Assumption E.2, we can condition on the $x_{tj}$ when we compute the expected value of $\hat{\boldsymbol{\beta}}$.

ASSUMPTION E.3 (NO PERFECT COLLINEARITY)
The matrix $\mathbf{X}$ has rank $k$.

This is a careful statement of the assumption that rules out linear dependencies among the explanatory variables. Under Assumption E.3, $\mathbf{X}'\mathbf{X}$ is nonsingular, and so $\hat{\boldsymbol{\beta}}$ is unique and can be written as in (E.8).

THEOREM E.1 (UNBIASEDNESS OF OLS)
Under Assumptions E.1, E.2, and E.3, the OLS estimator $\hat{\boldsymbol{\beta}}$ is unbiased for $\boldsymbol{\beta}$.

PROOF: Use Assumptions E.1 and E.3 and simple algebra to write

$$\begin{aligned} \hat{\boldsymbol{\beta}} &= (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{y} = (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'(\mathbf{X}\boldsymbol{\beta} + \mathbf{u}) \\ &= (\mathbf{X}'\mathbf{X})^{-1}(\mathbf{X}'\mathbf{X})\boldsymbol{\beta} + (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{u} = \boldsymbol{\beta} + (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{u}, \end{aligned} \tag{E.12}$$

where we use the fact that $(\mathbf{X}'\mathbf{X})^{-1}(\mathbf{X}'\mathbf{X}) = \mathbf{I}_k$. Taking the expectation conditional on $\mathbf{X}$ gives

$$E(\hat{\boldsymbol{\beta}} \mid \mathbf{X}) = \boldsymbol{\beta} + (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'E(\mathbf{u} \mid \mathbf{X}) = \boldsymbol{\beta} + (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{0} = \boldsymbol{\beta},$$

because $E(\mathbf{u} \mid \mathbf{X}) = \mathbf{0}$ under Assumption E.2.
This argument clearly does not depend on the value of $\boldsymbol{\beta}$, so we have shown that $\hat{\boldsymbol{\beta}}$ is unbiased.

To obtain the simplest form of the variance-covariance matrix of $\hat{\boldsymbol{\beta}}$, we impose the assumptions of homoskedasticity and no serial correlation.

ASSUMPTION E.4 (HOMOSKEDASTICITY AND NO SERIAL CORRELATION)
(i) $\mathrm{Var}(u_t \mid \mathbf{X}) = \sigma^2$, $t = 1, 2, \dots, n$. (ii) $\mathrm{Cov}(u_t, u_s \mid \mathbf{X}) = 0$, for all $t \neq s$. In matrix form, we can write these two assumptions as

$$\mathrm{Var}(\mathbf{u} \mid \mathbf{X}) = \sigma^2 \mathbf{I}_n, \tag{E.13}$$

where $\mathbf{I}_n$ is the $n \times n$ identity matrix.

Part (i) of Assumption E.4 is the homoskedasticity assumption: the variance of $u_t$ cannot depend on any element of $\mathbf{X}$, and the variance must be constant across observations, $t$. Part (ii) is the no serial correlation assumption: the errors cannot be correlated across observations. Under random sampling, and in any other cross-sectional sampling schemes with independent observations, part (ii) of Assumption E.4 automatically holds. For time series applications, part (ii) rules out correlation in the errors over time (both conditional on $\mathbf{X}$ and unconditionally).

Because of (E.13), we often say that $\mathbf{u}$ has scalar variance-covariance matrix when Assumption E.4 holds. We can now derive the variance-covariance matrix of the OLS estimator.

THEOREM E.2 (VARIANCE-COVARIANCE MATRIX OF THE OLS ESTIMATOR)
Under Assumptions E.1 through E.4,

$$\mathrm{Var}(\hat{\boldsymbol{\beta}} \mid \mathbf{X}) = \sigma^2(\mathbf{X}'\mathbf{X})^{-1}. \tag{E.14}$$

PROOF: From the last formula in equation (E.12), we have

$$\mathrm{Var}(\hat{\boldsymbol{\beta}} \mid \mathbf{X}) = \mathrm{Var}[(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{u} \mid \mathbf{X}] = (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'[\mathrm{Var}(\mathbf{u} \mid \mathbf{X})]\mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}.$$

Now, we use Assumption E.4 to get

$$\mathrm{Var}(\hat{\boldsymbol{\beta}} \mid \mathbf{X}) = (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'(\sigma^2\mathbf{I}_n)\mathbf{X}(\mathbf{X}'\mathbf{X})^{-1} = \sigma^2(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{X}(\mathbf{X}'\mathbf{X})^{-1} = \sigma^2(\mathbf{X}'\mathbf{X})^{-1}.$$

Formula (E.14) means that the variance of $\hat\beta_j$ (conditional on $\mathbf{X}$) is obtained by multiplying $\sigma^2$ by the $j$th diagonal element of $(\mathbf{X}'\mathbf{X})^{-1}$. For the slope coefficients, we gave an interpretable formula in equation (3.51).
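The OLS formula (E.8), the first order condition (E.9), and Theorems E.1 and E.2 can be checked numerically. The sketch below makes several illustrative assumptions, none of which come from the text:

- $k = 2$ (an intercept $\beta_1$ and one slope $\beta_2$, following the labeling of (E.1)), so that $(\mathbf{X}'\mathbf{X})^{-1}$ has a closed form;
- a fixed design $x_t = t$ for $t = 1, \dots, 20$ and a hypothetical sample;
- true parameters $\boldsymbol\beta = (1, 0.5)'$, $\sigma = 2$, and a fixed random seed.

Because $\mathbf{X}$ is held fixed and $\mathbf{u}$ is redrawn independently of it, Assumptions E.2 and E.4 hold by construction.

```python
import random

def xtx_inverse(x):
    """(X'X)^{-1} for X with rows (1, x_t); needs x non-constant (rank X = 2)."""
    n, sx, sxx = len(x), sum(x), sum(v * v for v in x)
    det = n * sxx - sx * sx            # equals n * SST_x
    if det == 0:                       # exact-zero test: a simplification
        raise ValueError("rank(X) < 2, so X'X is singular (Assumption E.3 fails)")
    return [[sxx / det, -sx / det], [-sx / det, n / det]]

def ols(x, y):
    """beta_hat = (X'X)^{-1} X'y, as in (E.8); returns [beta_1 hat, beta_2 hat]."""
    c = xtx_inverse(x)
    xty = [sum(y), sum(a * b for a, b in zip(x, y))]   # X'y
    return [c[0][0] * xty[0] + c[0][1] * xty[1],
            c[1][0] * xty[0] + c[1][1] * xty[1]]

def residuals(x, y, b):
    """u_hat = y - X beta_hat."""
    return [yt - b[0] - b[1] * xt for xt, yt in zip(x, y)]

def monte_carlo(x, beta=(1.0, 0.5), sigma=2.0, reps=5000, seed=2024):
    """Average OLS estimates over repeated error draws with X held fixed."""
    rng = random.Random(seed)
    draws = []
    for _ in range(reps):
        # u_t ~ Normal(0, sigma^2), drawn independently of X: E.2 and E.4 hold.
        y = [beta[0] + beta[1] * xt + rng.gauss(0.0, sigma) for xt in x]
        draws.append(ols(x, y))
    mean1 = sum(d[0] for d in draws) / reps
    mean2 = sum(d[1] for d in draws) / reps
    var2 = sum((d[1] - mean2) ** 2 for d in draws) / (reps - 1)
    return mean1, mean2, var2

x = [float(t) for t in range(1, 21)]                    # fixed design, n = 20
y = [2.0, 3.5, 2.9, 4.1, 4.0, 5.2, 4.8, 6.1, 5.5, 6.9,
     6.2, 7.4, 7.0, 8.3, 7.9, 8.8, 9.1, 9.6, 9.9, 11.0]  # hypothetical sample
b = ols(x, y)
u_hat = residuals(x, y, b)
# (E.9): X'u_hat = (sum of residuals, sum of x_t * residual) should be ~0.
print(sum(u_hat), sum(xt * e for xt, e in zip(x, u_hat)))

m1, m2, v2 = monte_carlo(x)
print(m1, m2)                                  # close to the true (1.0, 0.5)
print(v2, 2.0 ** 2 * xtx_inverse(x)[1][1])     # simulated vs sigma^2 * c_22
```

For this design, $c_{22} = 1/\mathrm{SST}_x = 1/665$, so (E.14) predicts $\mathrm{Var}(\hat\beta_2 \mid \mathbf{X}) = 4/665$. The simulated values match only approximately, as expected of a simulation.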
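Equation (E.14) also tells us how to obtain the covariance between any two OLS estimates: multiply $\sigma^2$ by the appropriate off-diagonal element of $(\mathbf{X}'\mathbf{X})^{-1}$. In Chapter 4, we showed how to avoid explicitly finding covariances for obtaining confidence intervals and hypothesis tests by appropriately rewriting the model.

The Gauss-Markov Theorem, in its full generality, can be proven.

THEOREM E.3 (GAUSS-MARKOV THEOREM)
Under Assumptions E.1 through E.4, $\hat{\boldsymbol{\beta}}$ is the best linear unbiased estimator.

PROOF: Any other linear estimator of $\boldsymbol{\beta}$ can be written as

$$\tilde{\boldsymbol{\beta}} = \mathbf{A}'\mathbf{y}, \tag{E.15}$$

where $\mathbf{A}$ is an $n \times k$ matrix. In order for $\tilde{\boldsymbol{\beta}}$ to be unbiased conditional on $\mathbf{X}$, $\mathbf{A}$ can consist of nonrandom numbers and functions of $\mathbf{X}$. (For example, $\mathbf{A}$ cannot be a function of $\mathbf{y}$.) To see what further restrictions on $\mathbf{A}$ are needed, write

$$\tilde{\boldsymbol{\beta}} = \mathbf{A}'(\mathbf{X}\boldsymbol{\beta} + \mathbf{u}) = (\mathbf{A}'\mathbf{X})\boldsymbol{\beta} + \mathbf{A}'\mathbf{u}. \tag{E.16}$$

Then,

$$\begin{aligned} E(\tilde{\boldsymbol{\beta}} \mid \mathbf{X}) &= \mathbf{A}'\mathbf{X}\boldsymbol{\beta} + E(\mathbf{A}'\mathbf{u} \mid \mathbf{X}) \\ &= \mathbf{A}'\mathbf{X}\boldsymbol{\beta} + \mathbf{A}'E(\mathbf{u} \mid \mathbf{X}) && \text{since } \mathbf{A} \text{ is a function of } \mathbf{X} \\ &= \mathbf{A}'\mathbf{X}\boldsymbol{\beta} && \text{since } E(\mathbf{u} \mid \mathbf{X}) = \mathbf{0}. \end{aligned}$$

For $\tilde{\boldsymbol{\beta}}$ to be an unbiased estimator of $\boldsymbol{\beta}$, it must be true that $E(\tilde{\boldsymbol{\beta}} \mid \mathbf{X}) = \boldsymbol{\beta}$ for all $k \times 1$ vectors $\boldsymbol{\beta}$, that is,

$$\mathbf{A}'\mathbf{X}\boldsymbol{\beta} = \boldsymbol{\beta} \quad \text{for all } k \times 1 \text{ vectors } \boldsymbol{\beta}. \tag{E.17}$$

Because $\mathbf{A}'\mathbf{X}$ is a $k \times k$ matrix, (E.17) holds if and only if $\mathbf{A}'\mathbf{X} = \mathbf{I}_k$. Equations (E.15) and (E.17) characterize the class of linear, unbiased estimators for $\boldsymbol{\beta}$.

Next, from (E.16), we have

$$\mathrm{Var}(\tilde{\boldsymbol{\beta}} \mid \mathbf{X}) = \mathbf{A}'[\mathrm{Var}(\mathbf{u} \mid \mathbf{X})]\mathbf{A} = \sigma^2\mathbf{A}'\mathbf{A},$$

by Assumption E.4. Therefore,

$$\begin{aligned} \mathrm{Var}(\tilde{\boldsymbol{\beta}} \mid \mathbf{X}) - \mathrm{Var}(\hat{\boldsymbol{\beta}} \mid \mathbf{X}) &= \sigma^2[\mathbf{A}'\mathbf{A} - (\mathbf{X}'\mathbf{X})^{-1}] \\ &= \sigma^2[\mathbf{A}'\mathbf{A} - \mathbf{A}'\mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{A}] && \text{because } \mathbf{A}'\mathbf{X} = \mathbf{I}_k \\ &= \sigma^2\mathbf{A}'[\mathbf{I}_n - \mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}']\mathbf{A} \\ &\equiv \sigma^2\mathbf{A}'\mathbf{M}\mathbf{A}, \end{aligned}$$

where $\mathbf{M} \equiv \mathbf{I}_n - \mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'$. Because $\mathbf{M}$ is symmetric and idempotent, $\mathbf{A}'\mathbf{M}\mathbf{A}$ is positive semi-definite for any $n \times k$ matrix $\mathbf{A}$. This establishes that the OLS estimator $\hat{\boldsymbol{\beta}}$ is BLUE. How is this significant? Let $\mathbf{c}$ be any $k \times 1$ vector and consider the linear combination $\mathbf{c}'\boldsymbol{\beta} = c_1\beta_1 + c_2\beta_2 + \dots + c_k\beta_k$, which is a scalar. The unbiased estimators of $\mathbf{c}'\boldsymbol{\beta}$ are $\mathbf{c}'\hat{\boldsymbol{\beta}}$ and $\mathbf{c}'\tilde{\boldsymbol{\beta}}$.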
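But

$$\mathrm{Var}(\mathbf{c}'\tilde{\boldsymbol{\beta}} \mid \mathbf{X}) - \mathrm{Var}(\mathbf{c}'\hat{\boldsymbol{\beta}} \mid \mathbf{X}) = \mathbf{c}'[\mathrm{Var}(\tilde{\boldsymbol{\beta}} \mid \mathbf{X}) - \mathrm{Var}(\hat{\boldsymbol{\beta}} \mid \mathbf{X})]\mathbf{c} \geq 0,$$

because $[\mathrm{Var}(\tilde{\boldsymbol{\beta}} \mid \mathbf{X}) - \mathrm{Var}(\hat{\boldsymbol{\beta}} \mid \mathbf{X})]$ is p.s.d. Therefore, when it is used for estimating any linear combination of $\boldsymbol{\beta}$, OLS yields the smallest variance. In particular, $\mathrm{Var}(\hat\beta_j \mid \mathbf{X}) \leq \mathrm{Var}(\tilde\beta_j \mid \mathbf{X})$ for any other linear, unbiased estimator of $\beta_j$.

The unbiased estimator of the error variance $\sigma^2$ can be written as

$$\hat\sigma^2 = \hat{\mathbf{u}}'\hat{\mathbf{u}}/(n - k),$$

where we have labeled the explanatory variables so that there are $k$ total parameters, including the intercept.

THEOREM E.4 (UNBIASEDNESS OF $\hat\sigma^2$)
Under Assumptions E.1 through E.4, $\hat\sigma^2$ is unbiased for $\sigma^2$: $E(\hat\sigma^2 \mid \mathbf{X}) = \sigma^2$ for all $\sigma^2 > 0$.

PROOF: Write $\hat{\mathbf{u}} = \mathbf{y} - \mathbf{X}\hat{\boldsymbol{\beta}} = \mathbf{y} - \mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{y} = \mathbf{M}\mathbf{y} = \mathbf{M}\mathbf{u}$, where $\mathbf{M} = \mathbf{I}_n - \mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'$, and the last equality follows because $\mathbf{M}\mathbf{X} = \mathbf{0}$. Because $\mathbf{M}$ is symmetric and idempotent,

$$\hat{\mathbf{u}}'\hat{\mathbf{u}} = \mathbf{u}'\mathbf{M}'\mathbf{M}\mathbf{u} = \mathbf{u}'\mathbf{M}\mathbf{u}.$$

Because $\mathbf{u}'\mathbf{M}\mathbf{u}$ is a scalar, it equals its trace. Therefore,

$$\begin{aligned} E(\hat{\mathbf{u}}'\hat{\mathbf{u}} \mid \mathbf{X}) &= E(\mathbf{u}'\mathbf{M}\mathbf{u} \mid \mathbf{X}) = E[\operatorname{tr}(\mathbf{u}'\mathbf{M}\mathbf{u}) \mid \mathbf{X}] = E[\operatorname{tr}(\mathbf{M}\mathbf{u}\mathbf{u}') \mid \mathbf{X}] \\ &= \operatorname{tr}[E(\mathbf{M}\mathbf{u}\mathbf{u}' \mid \mathbf{X})] = \operatorname{tr}[\mathbf{M}E(\mathbf{u}\mathbf{u}' \mid \mathbf{X})] \\ &= \operatorname{tr}(\mathbf{M}\sigma^2\mathbf{I}_n) = \sigma^2\operatorname{tr}(\mathbf{M}) = \sigma^2(n - k). \end{aligned}$$

The last equality follows from $\operatorname{tr}(\mathbf{M}) = \operatorname{tr}(\mathbf{I}_n) - \operatorname{tr}[\mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'] = n - \operatorname{tr}[(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{X}] = n - \operatorname{tr}(\mathbf{I}_k) = n - k$. Therefore,

$$E(\hat\sigma^2 \mid \mathbf{X}) = E(\mathbf{u}'\mathbf{M}\mathbf{u} \mid \mathbf{X})/(n - k) = \sigma^2.$$

E.3 STATISTICAL INFERENCE

When we add the final classical linear model assumption, $\hat{\boldsymbol{\beta}}$ has a multivariate normal distribution, which leads to the $t$ and $F$ distributions for the standard test statistics covered in Chapter 4.

ASSUMPTION E.5 (NORMALITY OF ERRORS)
Conditional on $\mathbf{X}$, the $u_t$ are independent and identically distributed as $\mathrm{Normal}(0, \sigma^2)$. Equivalently, $\mathbf{u}$ given $\mathbf{X}$ is distributed as multivariate normal with mean zero and variance-covariance matrix $\sigma^2\mathbf{I}_n$: $\mathbf{u} \sim \mathrm{Normal}(\mathbf{0}, \sigma^2\mathbf{I}_n)$.

Under Assumption E.5, each $u_t$ is independent of the explanatory variables for all $t$.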
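In a time series setting, this is essentially the strict exogeneity assumption.

THEOREM E.5 (NORMALITY OF $\hat{\boldsymbol{\beta}}$)
Under the classical linear model Assumptions E.1 through E.5, $\hat{\boldsymbol{\beta}}$ conditional on $\mathbf{X}$ is distributed as multivariate normal with mean $\boldsymbol{\beta}$ and variance-covariance matrix $\sigma^2(\mathbf{X}'\mathbf{X})^{-1}$.

Theorem E.5 is the basis for statistical inference involving $\boldsymbol{\beta}$. In fact, along with the properties of the chi-square, $t$, and $F$ distributions that we summarized in Appendix D, we can use Theorem E.5 to establish that $t$ statistics have a $t$ distribution under Assumptions E.1 through E.5 (under the null hypothesis), and likewise for $F$ statistics. We illustrate with a proof for the $t$ statistics.

THEOREM E.6
Under Assumptions E.1 through E.5,

$$(\hat\beta_j - \beta_j)/\mathrm{se}(\hat\beta_j) \sim t_{n-k}, \quad j = 1, 2, \dots, k.$$

PROOF: The proof requires several steps; the following statements are initially conditional on $\mathbf{X}$. First, by Theorem E.5, $(\hat\beta_j - \beta_j)/\mathrm{sd}(\hat\beta_j) \sim \mathrm{Normal}(0, 1)$, where $\mathrm{sd}(\hat\beta_j) = \sigma\sqrt{c_{jj}}$, and $c_{jj}$ is the $j$th diagonal element of $(\mathbf{X}'\mathbf{X})^{-1}$. Next, under Assumptions E.1 through E.5, conditional on $\mathbf{X}$,

$$(n - k)\hat\sigma^2/\sigma^2 \sim \chi^2_{n-k}. \tag{E.18}$$

This follows because $(n - k)\hat\sigma^2/\sigma^2 = (\mathbf{u}/\sigma)'\mathbf{M}(\mathbf{u}/\sigma)$, where $\mathbf{M}$ is the $n \times n$ symmetric, idempotent matrix defined in Theorem E.4. But $\mathbf{u}/\sigma \sim \mathrm{Normal}(\mathbf{0}, \mathbf{I}_n)$ by Assumption E.5. It follows from Property 1 for the chi-square distribution in Appendix D that $(\mathbf{u}/\sigma)'\mathbf{M}(\mathbf{u}/\sigma) \sim \chi^2_{n-k}$ (because $\mathbf{M}$ has rank $n - k$).

We also need to show that $\hat{\boldsymbol{\beta}}$ and $\hat\sigma^2$ are independent. But $\hat{\boldsymbol{\beta}} = \boldsymbol{\beta} + (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{u}$, and $\hat\sigma^2 = \mathbf{u}'\mathbf{M}\mathbf{u}/(n - k)$. Now, $[(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}']\mathbf{M} = \mathbf{0}$ because $\mathbf{X}'\mathbf{M} = \mathbf{0}$. It follows, from Property 5 of the multivariate normal distribution in Appendix D, that $\hat{\boldsymbol{\beta}}$ and $\mathbf{M}\mathbf{u}$ are independent. Since $\hat\sigma^2$ is a function of $\mathbf{M}\mathbf{u}$, $\hat{\boldsymbol{\beta}}$ and $\hat\sigma^2$ are also independent.

Finally, we can write

$$(\hat\beta_j - \beta_j)/\mathrm{se}(\hat\beta_j) = [(\hat\beta_j - \beta_j)/\mathrm{sd}(\hat\beta_j)]/(\hat\sigma^2/\sigma^2)^{1/2},$$

which is the ratio of a standard normal random variable and the square root of a $\chi^2_{n-k}/(n - k)$ random variable. We just showed that these are independent, and so, by definition of a $t$ random variable, $(\hat\beta_j - \beta_j)/\mathrm{se}(\hat\beta_j)$ has the $t_{n-k}$ distribution.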
Because this distribution does not depend on $\mathbf{X}$, it is the unconditional distribution of $(\hat\beta_j - \beta_j)/\mathrm{se}(\hat\beta_j)$ as well.

From this theorem, we can plug in any hypothesized value for $\beta_j$ and use the $t$ statistic for testing hypotheses, as usual.

Under Assumptions E.1 through E.5, we can compute what is known as the Cramer-Rao lower bound for the variance-covariance matrix of unbiased estimators of $\boldsymbol{\beta}$ (again conditional on $\mathbf{X}$) [see Greene (1997, Chapter 4)]. This can be shown to be $\sigma^2(\mathbf{X}'\mathbf{X})^{-1}$, which is exactly the variance-covariance matrix of the OLS estimator. This implies that $\hat{\boldsymbol{\beta}}$ is the minimum variance unbiased estimator of $\boldsymbol{\beta}$ (conditional on $\mathbf{X}$): $\mathrm{Var}(\tilde{\boldsymbol{\beta}} \mid \mathbf{X}) - \mathrm{Var}(\hat{\boldsymbol{\beta}} \mid \mathbf{X})$ is positive semi-definite for any other unbiased estimator $\tilde{\boldsymbol{\beta}}$; we no longer have to restrict our attention to estimators linear in $\mathbf{y}$.

It is easy to show that the OLS estimator is in fact the maximum likelihood estimator of $\boldsymbol{\beta}$ under Assumption E.5. For each $t$, the distribution of $y_t$ given $\mathbf{X}$ is $\mathrm{Normal}(\mathbf{x}_t\boldsymbol{\beta}, \sigma^2)$. Because the $y_t$ are independent conditional on $\mathbf{X}$, the likelihood function for the sample is obtained from the product of the densities:

$$\prod_{t=1}^{n} (2\pi\sigma^2)^{-1/2}\exp[-(y_t - \mathbf{x}_t\boldsymbol{\beta})^2/(2\sigma^2)].$$

Maximizing this function with respect to $\boldsymbol{\beta}$ and $\sigma^2$ is the same as maximizing its natural logarithm:

$$\sum_{t=1}^{n} [-(1/2)\log(2\pi\sigma^2) - (y_t - \mathbf{x}_t\boldsymbol{\beta})^2/(2\sigma^2)].$$

For obtaining $\hat{\boldsymbol{\beta}}$, this is the same as minimizing $\sum_{t=1}^{n} (y_t - \mathbf{x}_t\boldsymbol{\beta})^2$ (the division by $2\sigma^2$ does not affect the optimization), which is just the problem that OLS solves. The estimator of $\sigma^2$ that we have used, $\mathrm{SSR}/(n - k)$, turns out not to be the MLE of $\sigma^2$; the MLE is $\mathrm{SSR}/n$, which is a biased estimator. Because the unbiased estimator of $\sigma^2$ results in $t$ and $F$ statistics with exact $t$ and $F$ distributions under the null, it is always used instead of the MLE.

SUMMARY

This appendix has provided a brief discussion of the linear regression model using matrix notation. This material is included for more advanced classes that use matrix algebra, but it is not needed to read the text.
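In effect, this appendix proves some of the results that we either stated without proof, proved only in special cases, or proved through a more cumbersome method of proof. Other topics, such as asymptotic properties, instrumental variables estimation, and panel data models, can be given concise treatments using matrices. Advanced texts in econometrics, including Davidson and MacKinnon (1993), Greene (1997), and Wooldridge (1999), can be consulted for details.

KEY TERMS

First Order Condition
Matrix Notation
Minimum Variance Unbiased
Scalar Variance-Covariance Matrix
Variance-Covariance Matrix of the OLS Estimator

PROBLEMS

E.1 Let $\mathbf{x}_t$ be the $1 \times k$ vector of explanatory variables for observation $t$. Show that the OLS estimator $\hat{\boldsymbol{\beta}}$ can be written as

$$\hat{\boldsymbol{\beta}} = \left(\sum_{t=1}^{n} \mathbf{x}_t'\mathbf{x}_t\right)^{-1}\left(\sum_{t=1}^{n} \mathbf{x}_t'y_t\right).$$

Dividing each summation by $n$ shows that $\hat{\boldsymbol{\beta}}$ is a function of sample averages.

E.2 Let $\hat{\boldsymbol{\beta}}$ be the $k \times 1$ vector of OLS estimates.
(i) Show that for any $k \times 1$ vector $\mathbf{b}$, we can write the sum of squared residuals as
$$\mathrm{SSR}(\mathbf{b}) = \hat{\mathbf{u}}'\hat{\mathbf{u}} + (\hat{\boldsymbol{\beta}} - \mathbf{b})'\mathbf{X}'\mathbf{X}(\hat{\boldsymbol{\beta}} - \mathbf{b}).$$
[Hint: Write $(\mathbf{y} - \mathbf{X}\mathbf{b})'(\mathbf{y} - \mathbf{X}\mathbf{b}) = [\hat{\mathbf{u}} + \mathbf{X}(\hat{\boldsymbol{\beta}} - \mathbf{b})]'[\hat{\mathbf{u}} + \mathbf{X}(\hat{\boldsymbol{\beta}} - \mathbf{b})]$ and use the fact that $\mathbf{X}'\hat{\mathbf{u}} = \mathbf{0}$.]
(ii) Explain how the expression for $\mathrm{SSR}(\mathbf{b})$ in part (i) proves that $\hat{\boldsymbol{\beta}}$ uniquely minimizes $\mathrm{SSR}(\mathbf{b})$ over all possible values of $\mathbf{b}$, assuming $\mathbf{X}$ has rank $k$.

E.3 Let $\hat{\boldsymbol{\beta}}$ be the OLS estimate from the regression of $\mathbf{y}$ on $\mathbf{X}$. Let $\mathbf{A}$ be a $k \times k$ nonsingular matrix and define $\mathbf{z}_t \equiv \mathbf{x}_t\mathbf{A}$, $t = 1, \dots, n$. Therefore, $\mathbf{z}_t$ is $1 \times k$ and is a nonsingular linear combination of $\mathbf{x}_t$. Let $\mathbf{Z}$ be the $n \times k$ matrix with rows $\mathbf{z}_t$. Let $\tilde{\boldsymbol{\beta}}$ denote the OLS estimate from a regression of $\mathbf{y}$ on $\mathbf{Z}$.
(i) Show that $\tilde{\boldsymbol{\beta}} = \mathbf{A}^{-1}\hat{\boldsymbol{\beta}}$.
(ii) Let $\hat y_t$ be the fitted values from the original regression and let $\tilde y_t$ be the fitted values from regressing $\mathbf{y}$ on $\mathbf{Z}$. Show that $\tilde y_t = \hat y_t$, for all $t = 1, 2, \dots, n$.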
How do the residuals from the two regressions compare?
(iii) Show that the estimated variance matrix for $\tilde{\boldsymbol{\beta}}$ is $\hat\sigma^2\mathbf{A}^{-1}(\mathbf{X}'\mathbf{X})^{-1}(\mathbf{A}^{-1})'$, where $\hat\sigma^2$ is the usual variance estimate from regressing $\mathbf{y}$ on $\mathbf{X}$.
(iv) Let the $\hat\beta_j$ be the OLS estimates from regressing $y_t$ on $1, x_{t2}, \dots, x_{tk}$, and let the $\tilde\beta_j$ be the OLS estimates from the regression of $y_t$ on $1, a_2 x_{t2}, \dots, a_k x_{tk}$, where $a_j \neq 0$, $j = 2, \dots, k$. Use the results from part (i) to find the relationship between the $\tilde\beta_j$ and the $\hat\beta_j$.
(v) Assuming the setup of part (iv), use part (iii) to show that $\mathrm{se}(\tilde\beta_j) = \mathrm{se}(\hat\beta_j)/|a_j|$.
(vi) Assuming the setup of part (iv), show that the absolute values of the $t$ statistics for $\tilde\beta_j$ and $\hat\beta_j$ are identical.
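The inference results of Sections E.2 and E.3, and parts (iv) to (vi) of Problem E.3, can be illustrated with a few lines of arithmetic. The sketch below is a numerical check, not a proof, and rests on these assumptions:

- $k = 2$ and hypothetical data;
- for the Gauss-Markov comparison, an "endpoint" slope estimator $(y_n - y_1)/(x_n - x_1)$, chosen by us as an example of another linear unbiased estimator. Its weights $w_t$ satisfy $\sum_t w_t = 0$ and $\sum_t w_t x_t = 1$, which is the slope row of $\mathbf{A}'\mathbf{X} = \mathbf{I}_k$.

```python
import math

def fit(x, y):
    """OLS for X with rows (1, x_t): estimates, se, t ratios, and two sigma^2 estimators."""
    n, k = len(x), 2
    sx, sxx = sum(x), sum(v * v for v in x)
    det = n * sxx - sx * sx
    c = [[sxx / det, -sx / det], [-sx / det, n / det]]        # (X'X)^{-1}
    sy, sxy = sum(y), sum(a * b for a, b in zip(x, y))        # X'y
    b = [c[0][0] * sy + c[0][1] * sxy, c[1][0] * sy + c[1][1] * sxy]
    ssr = sum((yt - b[0] - b[1] * xt) ** 2 for xt, yt in zip(x, y))
    sigma2_hat = ssr / (n - k)                                # unbiased (Theorem E.4)
    se = [math.sqrt(sigma2_hat * c[j][j]) for j in range(k)]  # from (E.14)
    return {"b": b, "se": se, "t": [b[j] / se[j] for j in range(k)],
            "sigma2_hat": sigma2_hat, "sigma2_mle": ssr / n}

def linear_estimator_variance(w, sigma2=1.0):
    """Var(sum_t w_t y_t | X) = sigma^2 * sum_t w_t^2 under Assumption E.4."""
    return sigma2 * sum(v * v for v in w)

x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]                 # hypothetical sample
res = fit(x, y)
print(res["b"], res["se"], res["t"])           # slope t ratio tests H0: beta_2 = 0
print(res["sigma2_hat"], res["sigma2_mle"])    # SSR/(n-k) vs the MLE SSR/n

# Gauss-Markov check for the slope: OLS weights vs the endpoint estimator.
xbar = sum(x) / len(x)
sst = sum((v - xbar) ** 2 for v in x)
w_ols = [(v - xbar) / sst for v in x]
w_end = [0.0] * len(x)
w_end[0], w_end[-1] = -1.0 / (x[-1] - x[0]), 1.0 / (x[-1] - x[0])
print(linear_estimator_variance(w_ols), linear_estimator_variance(w_end))

# Problem E.3(iv)-(vi): rescale x by a nonzero constant a.
a = -4.0
res_a = fit([a * v for v in x], y)
print(res_a["b"][1] * a, res_a["se"][1] * abs(a), res_a["t"][1])
```

For these data the slope is 1.99 with $t \approx 33$, far above the two-sided 5% critical value of $t_3$ (about 3.182). The OLS slope weights give variance $\sigma^2/10$, versus $\sigma^2/8$ for the endpoint estimator. Rescaling $x$ by $a = -4$ divides the slope by $-4$ and its standard error by 4, so only the sign of $t$ changes.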

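Wooldridge Introductory Econometrics Data Set

Overview: The Wooldridge Introductory Econometrics data set is a sample data set containing a number of economic indicators. It is used for econometric research and statistical inference.

It is one of the most commonly used data sets in econometrics and can be used to analyze how various economic phenomena relate to and affect one another.

This article describes the basic features of the data set, explains why it was chosen as the sample and why that choice matters, and presents the methods used for data preprocessing and for analyzing the results.

Features: The data set contains time series data on several economic indicators, including gross domestic product (GDP), the unemployment rate, consumption expenditure, and investment.

These indicators cover several areas of macroeconomics and can be used to analyze how various economic phenomena relate to and affect one another.

The data set spans a long period covering many years, which provides a rich sample for studying economic change.

In addition, the data set provides seasonally adjusted data for different years, which allows more accurate statistical analysis of the indicators.

Reasons for and significance of the sample choice: This article uses the Wooldridge data set because it contains several important macroeconomic indicators that can be used to analyze relationships among macroeconomic phenomena.

In-depth analysis of the data set can improve our understanding of how the economy works and where it is heading, giving policy makers and forecasters a more useful basis for their decisions.

The data set can also be used to test the accuracy and applicability of econometric models, supporting both theoretical research and applied work in economics.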

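Data preprocessing: Before analysis, the data must be preprocessed, which includes filling in missing values, handling outliers, and cleaning the data.

In this article, we used the following preprocessing methods:

1. Missing values: missing observations were filled in by mean imputation.

2. Outliers: the data were inspected with box plots, and clearly anomalous data points were removed.

3. Data cleaning: data that did not meet the requirements were cleaned, for example by removing invalid observations and data irrelevant to the research question.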
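The first two preprocessing steps can be sketched as follows. The sketch assumes a single numeric series in which `None` marks a missing value, and the series itself is hypothetical. The box-plot rule used here drops points outside $Q_1 - 1.5\,\mathrm{IQR}$ and $Q_3 + 1.5\,\mathrm{IQR}$. That rule is Tukey's common convention and an assumption on our part, since the text does not state it.

```python
import statistics

def impute_mean(values):
    """Replace None with the mean of the observed values (mean imputation)."""
    observed = [v for v in values if v is not None]
    m = statistics.mean(observed)
    return [m if v is None else v for v in values]

def drop_boxplot_outliers(values, whisker=1.5):
    """Keep points inside the box-plot fences Q1 - whisker*IQR and Q3 + whisker*IQR."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - whisker * iqr, q3 + whisker * iqr
    return [v for v in values if low <= v <= high]

raw = [5.1, 4.8, None, 5.3, 5.0, 19.7, 4.9, None, 5.2]   # hypothetical series
filled = impute_mean(raw)
clean = drop_boxplot_outliers(filled)
print(len(raw), len(clean))   # 9 8: the value 19.7 is dropped
```

Because imputation comes first, as in the list above, the outlier inflates the imputed mean (here to about 7.14). Removing outliers before imputing avoids this. Which order is appropriate depends on the data.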

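Results: Statistical analysis of the preprocessed data yielded several interesting findings:

1. GDP and the unemployment rate are negatively correlated: periods of rising unemployment tend to coincide with falling GDP.

This may be because rising unemployment weakens consumer and investor confidence, which reduces demand and, in turn, economic growth.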
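The negative correlation in finding 1 corresponds to a negative Pearson correlation coefficient $r$. The minimal sketch below uses made-up GDP-growth and unemployment figures, which are not the data set described above.

```python
import math

def pearson(a, b):
    """Sample Pearson correlation coefficient between two equal-length series."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    sab = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    saa = sum((x - ma) ** 2 for x in a)
    sbb = sum((y - mb) ** 2 for y in b)
    return sab / math.sqrt(saa * sbb)

# Made-up illustrative series (not the data set described above).
gdp_growth = [3.0, 2.0, 1.0, -1.0, 0.5, 2.5]     # percent
unemployment = [4.0, 4.5, 5.5, 8.0, 7.0, 5.0]    # percent
r = pearson(gdp_growth, unemployment)
print(round(r, 3))   # negative
```

A negative $r$ describes co-movement only. On its own, it does not establish the confidence-and-demand channel suggested above.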

伍德里奇《计量经济学导论--现代观点》1

T his appendix derives various results for ordinary least squares estimation of themultiple linear regression model using matrix notation and matrix algebra (see Appendix D for a summary). The material presented here is much more ad-vanced than that in the text.E.1THE MODEL AND ORDINARY LEAST SQUARES ESTIMATIONThroughout this appendix,we use the t subscript to index observations and an n to denote the sample size. It is useful to write the multiple linear regression model with k parameters as follows:y t ϭ1ϩ2x t 2ϩ3x t 3ϩ… ϩk x tk ϩu t ,t ϭ 1,2,…,n ,(E.1)where y t is the dependent variable for observation t ,and x tj ,j ϭ 2,3,…,k ,are the inde-pendent variables. Notice how our labeling convention here differs from the text:we call the intercept 1and let 2,…,k denote the slope parameters. This relabeling is not important,but it simplifies the matrix approach to multiple regression.For each t ,define a 1 ϫk vector,x t ϭ(1,x t 2,…,x tk ),and let ϭ(1,2,…,k )Јbe the k ϫ1 vector of all parameters. Then,we can write (E.1) asy t ϭx t ϩu t ,t ϭ 1,2,…,n .(E.2)[Some authors prefer to define x t as a column vector,in which case,x t is replaced with x t Јin (E.2). Mathematically,it makes more sense to define it as a row vector.] We can write (E.2) in full matrix notation by appropriately defining data vectors and matrices. Let y denote the n ϫ1 vector of observations on y :the t th element of y is y t .Let X be the n ϫk vector of observations on the explanatory variables. In other words,the t th row of X consists of the vector x t . Equivalently,the (t ,j )th element of X is simply x tj :755A p p e n d i x EThe Linear Regression Model inMatrix Formn X ϫ k ϵϭ .Finally,let u be the n ϫ 1 vector of unobservable disturbances. Then,we can write (E.2)for all n observations in matrix notation :y ϭX ϩu .(E.3)Remember,because X is n ϫ k and is k ϫ 1,X is n ϫ 1.Estimation of proceeds by minimizing the sum of squared residuals,as in Section3.2. 
Define the sum of squared residuals function for any possible k ϫ 1 parameter vec-tor b asSSR(b ) ϵ͚nt ϭ1(y t Ϫx t b )2.The k ϫ 1 vector of ordinary least squares estimates,ˆϭ(ˆ1,ˆ2,…,ˆk ),minimizes SSR(b ) over all possible k ϫ 1 vectors b . This is a problem in multivariable calculus.For ˆto minimize the sum of squared residuals,it must solve the first order conditionѨSSR(ˆ)/Ѩb ϵ0.(E.4)Using the fact that the derivative of (y t Ϫx t b )2with respect to b is the 1ϫ k vector Ϫ2(y t Ϫx t b )x t ,(E.4) is equivalent to͚nt ϭ1xt Ј(y t Ϫx t ˆ) ϵ0.(E.5)(We have divided by Ϫ2 and taken the transpose.) We can write this first order condi-tion as͚nt ϭ1(y t Ϫˆ1Ϫˆ2x t 2Ϫ… Ϫˆk x tk ) ϭ0͚nt ϭ1x t 2(y t Ϫˆ1Ϫˆ2x t 2Ϫ… Ϫˆk x tk ) ϭ0...͚nt ϭ1x tk (y t Ϫˆ1Ϫˆ2x t 2Ϫ… Ϫˆk x tk ) ϭ0,which,apart from the different labeling convention,is identical to the first order condi-tions in equation (3.13). We want to write these in matrix form to make them more use-ful. Using the formula for partitioned multiplication in Appendix D,we see that (E.5)is equivalent to΅1x 12x 13...x 1k1x 22x 23...x 2k...1x n 2x n 3...x nk ΄΅x 1x 2...x n ΄Appendix E The Linear Regression Model in Matrix Form756Appendix E The Linear Regression Model in Matrix FormXЈ(yϪXˆ) ϭ0(E.6) or(XЈX)ˆϭXЈy.(E.7)It can be shown that (E.7) always has at least one solution. Multiple solutions do not help us,as we are looking for a unique set of OLS estimates given our data set. Assuming that the kϫ k symmetric matrix XЈX is nonsingular,we can premultiply both sides of (E.7) by (XЈX)Ϫ1to solve for the OLS estimator ˆ:ˆϭ(XЈX)Ϫ1XЈy.(E.8)This is the critical formula for matrix analysis of the multiple linear regression model. The assumption that XЈX is invertible is equivalent to the assumption that rank(X) ϭk, which means that the columns of X must be linearly independent. This is the matrix ver-sion of MLR.4 in Chapter 3.Before we continue,(E.8) warrants a word of warning. 
It is tempting to simplify the formula for ˆas follows:ˆϭ(XЈX)Ϫ1XЈyϭXϪ1(XЈ)Ϫ1XЈyϭXϪ1y.The flaw in this reasoning is that X is usually not a square matrix,and so it cannot be inverted. In other words,we cannot write (XЈX)Ϫ1ϭXϪ1(XЈ)Ϫ1unless nϭk,a case that virtually never arises in practice.The nϫ 1 vectors of OLS fitted values and residuals are given byyˆϭXˆ,uˆϭyϪyˆϭyϪXˆ.From (E.6) and the definition of uˆ,we can see that the first order condition for ˆis the same asXЈuˆϭ0.(E.9) Because the first column of X consists entirely of ones,(E.9) implies that the OLS residuals always sum to zero when an intercept is included in the equation and that the sample covariance between each independent variable and the OLS residuals is zero. (We discussed both of these properties in Chapter 3.)The sum of squared residuals can be written asSSR ϭ͚n tϭ1uˆt2ϭuˆЈuˆϭ(yϪXˆ)Ј(yϪXˆ).(E.10)All of the algebraic properties from Chapter 3 can be derived using matrix algebra. For example,we can show that the total sum of squares is equal to the explained sum of squares plus the sum of squared residuals [see (3.27)]. The use of matrices does not pro-vide a simpler proof than summation notation,so we do not provide another derivation.757The matrix approach to multiple regression can be used as the basis for a geometri-cal interpretation of regression. This involves mathematical concepts that are even more advanced than those we covered in Appendix D. 
[See Goldberger (1991) or Greene (1997).]E.2FINITE SAMPLE PROPERTIES OF OLSDeriving the expected value and variance of the OLS estimator ˆis facilitated by matrix algebra,but we must show some care in stating the assumptions.A S S U M P T I O N E.1(L I N E A R I N P A R A M E T E R S)The model can be written as in (E.3), where y is an observed nϫ 1 vector, X is an nϫ k observed matrix, and u is an nϫ 1 vector of unobserved errors or disturbances.A S S U M P T I O N E.2(Z E R O C O N D I T I O N A L M E A N)Conditional on the entire matrix X, each error ut has zero mean: E(ut͉X) ϭ0, tϭ1,2,…,n.In vector form,E(u͉X) ϭ0.(E.11) This assumption is implied by MLR.3 under the random sampling assumption,MLR.2.In time series applications,Assumption E.2 imposes strict exogeneity on the explana-tory variables,something discussed at length in Chapter 10. This rules out explanatory variables whose future values are correlated with ut; in particular,it eliminates laggeddependent variables. Under Assumption E.2,we can condition on the xtjwhen we com-pute the expected value of ˆ.A S S U M P T I O N E.3(N O P E R F E C T C O L L I N E A R I T Y) The matrix X has rank k.This is a careful statement of the assumption that rules out linear dependencies among the explanatory variables. Under Assumption E.3,XЈX is nonsingular,and so ˆis unique and can be written as in (E.8).T H E O R E M E.1(U N B I A S E D N E S S O F O L S)Under Assumptions E.1, E.2, and E.3, the OLS estimator ˆis unbiased for .P R O O F:Use Assumptions E.1 and E.3 and simple algebra to writeˆϭ(XЈX)Ϫ1XЈyϭ(XЈX)Ϫ1XЈ(Xϩu)ϭ(XЈX)Ϫ1(XЈX)ϩ(XЈX)Ϫ1XЈuϭϩ(XЈX)Ϫ1XЈu,(E.12)where we use the fact that (XЈX)Ϫ1(XЈX) ϭIk . Taking the expectation conditional on X givesAppendix E The Linear Regression Model in Matrix Form 758E(ˆ͉X)ϭϩ(XЈX)Ϫ1XЈE(u͉X)ϭϩ(XЈX)Ϫ1XЈ0ϭ,because E(u͉X) ϭ0under Assumption E.2. 
This argument clearly does not depend on the value of , so we have shown that ˆis unbiased.To obtain the simplest form of the variance-covariance matrix of ˆ,we impose the assumptions of homoskedasticity and no serial correlation.A S S U M P T I O N E.4(H O M O S K E D A S T I C I T Y A N DN O S E R I A L C O R R E L A T I O N)(i) Var(ut͉X) ϭ2, t ϭ 1,2,…,n. (ii) Cov(u t,u s͉X) ϭ0, for all t s. In matrix form, we canwrite these two assumptions asVar(u͉X) ϭ2I n,(E.13)where Inis the nϫ n identity matrix.Part (i) of Assumption E.4 is the homoskedasticity assumption:the variance of utcan-not depend on any element of X,and the variance must be constant across observations, t. Part (ii) is the no serial correlation assumption:the errors cannot be correlated across observations. Under random sampling,and in any other cross-sectional sampling schemes with independent observations,part (ii) of Assumption E.4 automatically holds. For time series applications,part (ii) rules out correlation in the errors over time (both conditional on X and unconditionally).Because of (E.13),we often say that u has scalar variance-covariance matrix when Assumption E.4 holds. We can now derive the variance-covariance matrix of the OLS estimator.T H E O R E M E.2(V A R I A N C E-C O V A R I A N C EM A T R I X O F T H E O L S E S T I M A T O R)Under Assumptions E.1 through E.4,Var(ˆ͉X) ϭ2(XЈX)Ϫ1.(E.14)P R O O F:From the last formula in equation (E.12), we haveVar(ˆ͉X) ϭVar[(XЈX)Ϫ1XЈu͉X] ϭ(XЈX)Ϫ1XЈ[Var(u͉X)]X(XЈX)Ϫ1.Now, we use Assumption E.4 to getVar(ˆ͉X)ϭ(XЈX)Ϫ1XЈ(2I n)X(XЈX)Ϫ1ϭ2(XЈX)Ϫ1XЈX(XЈX)Ϫ1ϭ2(XЈX)Ϫ1.Appendix E The Linear Regression Model in Matrix Form759Formula (E.14) means that the variance of ˆj (conditional on X ) is obtained by multi-plying 2by the j th diagonal element of (X ЈX )Ϫ1. For the slope coefficients,we gave an interpretable formula in equation (3.51). 
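Equation (E.14) also tells us how to obtain the covariance between any two OLS estimates: multiply $\sigma^2$ by the appropriate off-diagonal element of $(\mathbf{X}'\mathbf{X})^{-1}$. In Chapter 4, we showed how to avoid explicitly finding covariances for obtaining confidence intervals and hypothesis tests by appropriately rewriting the model.

The Gauss-Markov Theorem, in its full generality, can be proven.

THEOREM E.3 (GAUSS-MARKOV THEOREM)
Under Assumptions E.1 through E.4, $\hat{\boldsymbol\beta}$ is the best linear unbiased estimator.

PROOF: Any other linear estimator of $\boldsymbol\beta$ can be written as
$$\tilde{\boldsymbol\beta} = \mathbf{A}'\mathbf{y}, \qquad \text{(E.15)}$$
where $\mathbf{A}$ is an $n \times k$ matrix. In order for $\tilde{\boldsymbol\beta}$ to be unbiased conditional on $\mathbf{X}$, $\mathbf{A}$ can consist of nonrandom numbers and functions of $\mathbf{X}$. (For example, $\mathbf{A}$ cannot be a function of $\mathbf{y}$.) To see what further restrictions on $\mathbf{A}$ are needed, write
$$\tilde{\boldsymbol\beta} = \mathbf{A}'(\mathbf{X}\boldsymbol\beta + \mathbf{u}) = (\mathbf{A}'\mathbf{X})\boldsymbol\beta + \mathbf{A}'\mathbf{u}. \qquad \text{(E.16)}$$
Then,
$$E(\tilde{\boldsymbol\beta} \mid \mathbf{X}) = \mathbf{A}'\mathbf{X}\boldsymbol\beta + E(\mathbf{A}'\mathbf{u} \mid \mathbf{X}) = \mathbf{A}'\mathbf{X}\boldsymbol\beta + \mathbf{A}'E(\mathbf{u} \mid \mathbf{X}) \quad \text{(since } \mathbf{A} \text{ is a function of } \mathbf{X}\text{)}$$
$$= \mathbf{A}'\mathbf{X}\boldsymbol\beta \quad \text{(since } E(\mathbf{u} \mid \mathbf{X}) = \mathbf{0}\text{)}.$$
For $\tilde{\boldsymbol\beta}$ to be an unbiased estimator of $\boldsymbol\beta$, it must be true that $E(\tilde{\boldsymbol\beta} \mid \mathbf{X}) = \boldsymbol\beta$ for all $k \times 1$ vectors $\boldsymbol\beta$, that is,
$$\mathbf{A}'\mathbf{X}\boldsymbol\beta = \boldsymbol\beta \quad \text{for all } k \times 1 \text{ vectors } \boldsymbol\beta. \qquad \text{(E.17)}$$
Because $\mathbf{A}'\mathbf{X}$ is a $k \times k$ matrix, (E.17) holds if and only if $\mathbf{A}'\mathbf{X} = \mathbf{I}_k$. Equations (E.15) and (E.17) characterize the class of linear, unbiased estimators for $\boldsymbol\beta$.

Next, from (E.16), we have
$$\mathrm{Var}(\tilde{\boldsymbol\beta} \mid \mathbf{X}) = \mathbf{A}'[\mathrm{Var}(\mathbf{u} \mid \mathbf{X})]\mathbf{A} = \sigma^2\mathbf{A}'\mathbf{A},$$
by Assumption E.4. Therefore,
$$\mathrm{Var}(\tilde{\boldsymbol\beta} \mid \mathbf{X}) - \mathrm{Var}(\hat{\boldsymbol\beta} \mid \mathbf{X}) = \sigma^2[\mathbf{A}'\mathbf{A} - (\mathbf{X}'\mathbf{X})^{-1}] = \sigma^2[\mathbf{A}'\mathbf{A} - \mathbf{A}'\mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{A}] \quad \text{(because } \mathbf{A}'\mathbf{X} = \mathbf{I}_k\text{)}$$
$$= \sigma^2\mathbf{A}'[\mathbf{I}_n - \mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}']\mathbf{A} \equiv \sigma^2\mathbf{A}'\mathbf{M}\mathbf{A},$$
where $\mathbf{M} \equiv \mathbf{I}_n - \mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'$. Because $\mathbf{M}$ is symmetric and idempotent, $\mathbf{A}'\mathbf{M}\mathbf{A}$ is positive semi-definite for any $n \times k$ matrix $\mathbf{A}$. This establishes that the OLS estimator $\hat{\boldsymbol\beta}$ is BLUE.

How is this significant? Let $\mathbf{c}$ be any $k \times 1$ vector and consider the linear combination $\mathbf{c}'\boldsymbol\beta = c_1\beta_1 + c_2\beta_2 + \cdots + c_k\beta_k$, which is a scalar. The unbiased estimators of $\mathbf{c}'\boldsymbol\beta$ are $\mathbf{c}'\hat{\boldsymbol\beta}$ and $\mathbf{c}'\tilde{\boldsymbol\beta}$.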
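But
$$\mathrm{Var}(\mathbf{c}'\tilde{\boldsymbol\beta} \mid \mathbf{X}) - \mathrm{Var}(\mathbf{c}'\hat{\boldsymbol\beta} \mid \mathbf{X}) = \mathbf{c}'[\mathrm{Var}(\tilde{\boldsymbol\beta} \mid \mathbf{X}) - \mathrm{Var}(\hat{\boldsymbol\beta} \mid \mathbf{X})]\mathbf{c} \ge 0,$$
because $[\mathrm{Var}(\tilde{\boldsymbol\beta} \mid \mathbf{X}) - \mathrm{Var}(\hat{\boldsymbol\beta} \mid \mathbf{X})]$ is p.s.d. Therefore, when it is used for estimating any linear combination of $\boldsymbol\beta$, OLS yields the smallest variance. In particular, $\mathrm{Var}(\hat\beta_j \mid \mathbf{X}) \le \mathrm{Var}(\tilde\beta_j \mid \mathbf{X})$ for any other linear, unbiased estimator of $\beta_j$.

The unbiased estimator of the error variance $\sigma^2$ can be written as
$$\hat\sigma^2 = \hat{\mathbf{u}}'\hat{\mathbf{u}}/(n - k),$$
where we have labeled the explanatory variables so that there are $k$ total parameters, including the intercept.

THEOREM E.4 (UNBIASEDNESS OF $\hat\sigma^2$)
Under Assumptions E.1 through E.4, $\hat\sigma^2$ is unbiased for $\sigma^2$: $E(\hat\sigma^2 \mid \mathbf{X}) = \sigma^2$ for all $\sigma^2 > 0$.

PROOF: Write $\hat{\mathbf{u}} = \mathbf{y} - \mathbf{X}\hat{\boldsymbol\beta} = \mathbf{y} - \mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{y} = \mathbf{M}\mathbf{y} = \mathbf{M}\mathbf{u}$, where $\mathbf{M} = \mathbf{I}_n - \mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'$, and the last equality follows because $\mathbf{M}\mathbf{X} = \mathbf{0}$. Because $\mathbf{M}$ is symmetric and idempotent,
$$\hat{\mathbf{u}}'\hat{\mathbf{u}} = \mathbf{u}'\mathbf{M}'\mathbf{M}\mathbf{u} = \mathbf{u}'\mathbf{M}\mathbf{u}.$$
Because $\mathbf{u}'\mathbf{M}\mathbf{u}$ is a scalar, it equals its trace. Therefore,
$$E(\hat{\mathbf{u}}'\hat{\mathbf{u}} \mid \mathbf{X}) = E(\mathbf{u}'\mathbf{M}\mathbf{u} \mid \mathbf{X}) = E[\mathrm{tr}(\mathbf{u}'\mathbf{M}\mathbf{u}) \mid \mathbf{X}] = E[\mathrm{tr}(\mathbf{M}\mathbf{u}\mathbf{u}') \mid \mathbf{X}] = \mathrm{tr}[E(\mathbf{M}\mathbf{u}\mathbf{u}' \mid \mathbf{X})] = \mathrm{tr}[\mathbf{M}E(\mathbf{u}\mathbf{u}' \mid \mathbf{X})] = \mathrm{tr}(\mathbf{M}\sigma^2\mathbf{I}_n) = \sigma^2\mathrm{tr}(\mathbf{M}) = \sigma^2(n - k).$$
The last equality follows from $\mathrm{tr}(\mathbf{M}) = \mathrm{tr}(\mathbf{I}_n) - \mathrm{tr}[\mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'] = n - \mathrm{tr}[(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{X}] = n - \mathrm{tr}(\mathbf{I}_k) = n - k$. Therefore,
$$E(\hat\sigma^2 \mid \mathbf{X}) = E(\mathbf{u}'\mathbf{M}\mathbf{u} \mid \mathbf{X})/(n - k) = \sigma^2.$$

E.3 STATISTICAL INFERENCE

When we add the final classical linear model assumption, $\hat{\boldsymbol\beta}$ has a multivariate normal distribution, which leads to the $t$ and $F$ distributions for the standard test statistics covered in Chapter 4.

ASSUMPTION E.5 (NORMALITY OF ERRORS)
Conditional on $\mathbf{X}$, the $u_t$ are independent and identically distributed as Normal$(0, \sigma^2)$. Equivalently, $\mathbf{u}$ given $\mathbf{X}$ is distributed as multivariate normal with mean zero and variance-covariance matrix $\sigma^2\mathbf{I}_n$: $\mathbf{u} \sim \text{Normal}(\mathbf{0}, \sigma^2\mathbf{I}_n)$.

Under Assumption E.5, each $u_t$ is independent of the explanatory variables for all $t$.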
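The matrix algebra behind Theorems E.3 and E.4 can be checked numerically on toy data. The pure-Python sketch below uses a hypothetical data set with $n = 5$, $k = 2$ and its own illustrative helpers (`transpose`, `matmul`, `inverse`, `identity`, `subtract`, `trace`). It builds the residual-maker $\mathbf{M} = \mathbf{I}_n - \mathbf{X}(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'$, computes $\hat\sigma^2 = \mathrm{SSR}/(n-k)$, and compares OLS with an alternative linear unbiased estimator $\mathbf{A}'\mathbf{y}$ where $\mathbf{A}' = (\mathbf{X}'\mathbf{W}\mathbf{X})^{-1}\mathbf{X}'\mathbf{W}$ for a diagonal weight matrix $\mathbf{W}$. The weights are an arbitrary assumption; any positive weights give $\mathbf{A}'\mathbf{X} = \mathbf{I}_k$, so the Gauss-Markov argument applies.

```python
def transpose(a):
    return [list(col) for col in zip(*a)]

def matmul(a, b):
    bt = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in bt] for row in a]

def inverse(a):
    """Gauss-Jordan inverse with partial pivoting; a must be square and nonsingular."""
    n = len(a)
    aug = [[float(v) for v in row] + [float(i == j) for j in range(n)]
           for i, row in enumerate(a)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(aug[r][c]))
        if abs(aug[p][c]) < 1e-12:
            raise ValueError("singular matrix")
        aug[c], aug[p] = aug[p], aug[c]
        pivot = aug[c][c]
        aug[c] = [v / pivot for v in aug[c]]
        for r in range(n):
            if r != c:
                f = aug[r][c]
                aug[r] = [vr - f * vc for vr, vc in zip(aug[r], aug[c])]
    return [row[n:] for row in aug]

def identity(n):
    return [[float(i == j) for j in range(n)] for i in range(n)]

def subtract(a, b):
    return [[x - y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def trace(a):
    return sum(a[i][i] for i in range(len(a)))

# Hypothetical data: intercept plus x = 1..5 (n = 5, k = 2).
X = [[1.0, float(x)] for x in range(1, 6)]
y = [3.5, 4.7, 7.1, 8.8, 11.4]
n, k = len(X), len(X[0])
Xt = transpose(X)
xtx_inv = inverse(matmul(Xt, X))

# Residual maker M = I_n - X(X'X)^{-1}X'; the OLS residuals are u_hat = M y.
M = subtract(identity(n), matmul(matmul(X, xtx_inv), Xt))
u_hat = [row[0] for row in matmul(M, [[v] for v in y])]
ssr = sum(e * e for e in u_hat)
sigma2_hat = ssr / (n - k)          # unbiased estimator of sigma^2 (Theorem E.4)

# Alternative linear unbiased estimator A'y with A' = (X'WX)^{-1} X'W, W = diag(w).
w = [1.0, 2.0, 3.0, 4.0, 5.0]       # arbitrary positive weights (assumption)
XtW = [[xv * wv for xv, wv in zip(row, w)] for row in Xt]
A_t = matmul(inverse(matmul(XtW, X)), XtW)   # k x n matrix, satisfies A'X = I_k
A = transpose(A_t)

# Gauss-Markov: Var(beta_tilde|X) - Var(beta_hat|X) = sigma^2 [A'A - (X'X)^{-1}] = sigma^2 A'MA.
diff = subtract(matmul(A_t, A), xtx_inv)
AtMA = matmul(matmul(A_t, M), A)

print(round(ssr, 6), round(sigma2_hat, 6), round(trace(M), 6))  # prints 0.499 0.166333 3.0
```

The diagonal of `diff` gives, up to the factor $\sigma^2$, how much larger each variance of the weighted estimator is than the corresponding OLS variance; it is nonnegative here, as the theorem requires.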
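In a time series setting, this is essentially the strict exogeneity assumption.

THEOREM E.5 (NORMALITY OF $\hat{\boldsymbol\beta}$)
Under the classical linear model Assumptions E.1 through E.5, $\hat{\boldsymbol\beta}$ conditional on $\mathbf{X}$ is distributed as multivariate normal with mean $\boldsymbol\beta$ and variance-covariance matrix $\sigma^2(\mathbf{X}'\mathbf{X})^{-1}$.

Theorem E.5 is the basis for statistical inference involving $\boldsymbol\beta$. In fact, along with the properties of the chi-square, $t$, and $F$ distributions that we summarized in Appendix D, we can use Theorem E.5 to establish that $t$ statistics have a $t$ distribution under Assumptions E.1 through E.5 (under the null hypothesis) and likewise for $F$ statistics. We illustrate with a proof for the $t$ statistics.

THEOREM E.6
Under Assumptions E.1 through E.5,
$$(\hat\beta_j - \beta_j)/\mathrm{se}(\hat\beta_j) \sim t_{n-k}, \quad j = 1, 2, \ldots, k.$$

PROOF: The proof requires several steps; the following statements are initially conditional on $\mathbf{X}$. First, by Theorem E.5, $(\hat\beta_j - \beta_j)/\mathrm{sd}(\hat\beta_j) \sim \text{Normal}(0, 1)$, where $\mathrm{sd}(\hat\beta_j) = \sigma\sqrt{c_{jj}}$, and $c_{jj}$ is the $j$th diagonal element of $(\mathbf{X}'\mathbf{X})^{-1}$. Next, under Assumptions E.1 through E.5, conditional on $\mathbf{X}$,
$$(n - k)\hat\sigma^2/\sigma^2 \sim \chi^2_{n-k}. \qquad \text{(E.18)}$$
This follows because $(n - k)\hat\sigma^2/\sigma^2 = (\mathbf{u}/\sigma)'\mathbf{M}(\mathbf{u}/\sigma)$, where $\mathbf{M}$ is the $n \times n$ symmetric, idempotent matrix defined in Theorem E.4. But $\mathbf{u}/\sigma \sim \text{Normal}(\mathbf{0}, \mathbf{I}_n)$ by Assumption E.5. It follows from Property 1 for the chi-square distribution in Appendix D that $(\mathbf{u}/\sigma)'\mathbf{M}(\mathbf{u}/\sigma) \sim \chi^2_{n-k}$ (because $\mathbf{M}$ has rank $n - k$).

We also need to show that $\hat{\boldsymbol\beta}$ and $\hat\sigma^2$ are independent. But $\hat{\boldsymbol\beta} = \boldsymbol\beta + (\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{u}$, and $\hat\sigma^2 = \mathbf{u}'\mathbf{M}\mathbf{u}/(n - k)$. Now, $[(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}']\mathbf{M} = \mathbf{0}$ because $\mathbf{X}'\mathbf{M} = \mathbf{0}$. It follows, from Property 5 of the multivariate normal distribution in Appendix D, that $\hat{\boldsymbol\beta}$ and $\mathbf{M}\mathbf{u}$ are independent. Since $\hat\sigma^2$ is a function of $\mathbf{M}\mathbf{u}$, $\hat{\boldsymbol\beta}$ and $\hat\sigma^2$ are also independent.

Finally, we can write
$$(\hat\beta_j - \beta_j)/\mathrm{se}(\hat\beta_j) = [(\hat\beta_j - \beta_j)/\mathrm{sd}(\hat\beta_j)]/(\hat\sigma^2/\sigma^2)^{1/2},$$
which is the ratio of a standard normal random variable and the square root of a $\chi^2_{n-k}/(n - k)$ random variable. We just showed that these are independent, and so, by definition of a $t$ random variable, $(\hat\beta_j - \beta_j)/\mathrm{se}(\hat\beta_j)$ has the $t_{n-k}$ distribution.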
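Following Theorem E.6, a $t$ statistic needs only $\hat\beta_j$, $\hat\sigma^2$ and the diagonal element $c_{jj}$ of $(\mathbf{X}'\mathbf{X})^{-1}$, since $\mathrm{se}(\hat\beta_j) = \hat\sigma\sqrt{c_{jj}}$. The pure-Python sketch below computes these on a hypothetical five-observation data set for any hypothesized $\beta_j$; the helper functions and the name `ols_t_stats` are ours, for illustration only. The Python standard library has no $t$-distribution CDF, so instead of a p-value the output is meant to be compared with a table value (for $t_3$, the two-sided 5% critical value is about 3.18).

```python
import math

def transpose(a):
    return [list(col) for col in zip(*a)]

def matmul(a, b):
    bt = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in bt] for row in a]

def inverse(a):
    """Gauss-Jordan inverse with partial pivoting; a must be square and nonsingular."""
    n = len(a)
    aug = [[float(v) for v in row] + [float(i == j) for j in range(n)]
           for i, row in enumerate(a)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(aug[r][c]))
        if abs(aug[p][c]) < 1e-12:
            raise ValueError("singular matrix")
        aug[c], aug[p] = aug[p], aug[c]
        pivot = aug[c][c]
        aug[c] = [v / pivot for v in aug[c]]
        for r in range(n):
            if r != c:
                f = aug[r][c]
                aug[r] = [vr - f * vc for vr, vc in zip(aug[r], aug[c])]
    return [row[n:] for row in aug]

def ols_t_stats(X, y, beta_null=None):
    """OLS estimates, se_j = sigma_hat * sqrt(c_jj), t statistics, and df = n - k."""
    n, k = len(X), len(X[0])
    Xt = transpose(X)
    xtx_inv = inverse(matmul(Xt, X))
    beta_hat = [r[0] for r in matmul(xtx_inv, matmul(Xt, [[v] for v in y]))]
    resid = [yt - sum(xj * bj for xj, bj in zip(row, beta_hat)) for row, yt in zip(X, y)]
    sigma2_hat = sum(e * e for e in resid) / (n - k)
    se = [math.sqrt(sigma2_hat * xtx_inv[j][j]) for j in range(k)]
    if beta_null is None:
        beta_null = [0.0] * k            # default null hypothesis: each beta_j = 0
    t = [(b - b0) / s for b, b0, s in zip(beta_hat, beta_null, se)]
    return beta_hat, se, t, n - k

# Hypothetical data: intercept plus x = 1..5.
X = [[1.0, float(x)] for x in range(1, 6)]
y = [3.5, 4.7, 7.1, 8.8, 11.4]
beta_hat, se, t0, df = ols_t_stats(X, y)                    # H0: beta_j = 0
_, _, t_alt, _ = ols_t_stats(X, y, beta_null=[1.0, 2.0])    # H0: beta_1 = 1, beta_2 = 2
print(df, [round(v, 2) for v in t0], [round(v, 2) for v in t_alt])
```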
Because this distribution does not depend on $\mathbf{X}$, it is the unconditional distribution of $(\hat\beta_j - \beta_j)/\mathrm{se}(\hat\beta_j)$ as well.

From this theorem, we can plug in any hypothesized value for $\beta_j$ and use the $t$ statistic for testing hypotheses, as usual.

Under Assumptions E.1 through E.5, we can compute what is known as the Cramer-Rao lower bound for the variance-covariance matrix of unbiased estimators of $\boldsymbol\beta$ (again conditional on $\mathbf{X}$) [see Greene (1997, Chapter 4)]. This can be shown to be $\sigma^2(\mathbf{X}'\mathbf{X})^{-1}$, which is exactly the variance-covariance matrix of the OLS estimator. This implies that $\hat{\boldsymbol\beta}$ is the minimum variance unbiased estimator of $\boldsymbol\beta$ (conditional on $\mathbf{X}$): $\mathrm{Var}(\tilde{\boldsymbol\beta} \mid \mathbf{X}) - \mathrm{Var}(\hat{\boldsymbol\beta} \mid \mathbf{X})$ is positive semi-definite for any other unbiased estimator $\tilde{\boldsymbol\beta}$; we no longer have to restrict our attention to estimators linear in $\mathbf{y}$.

It is easy to show that the OLS estimator is in fact the maximum likelihood estimator of $\boldsymbol\beta$ under Assumption E.5. For each $t$, the distribution of $y_t$ given $\mathbf{X}$ is Normal$(\mathbf{x}_t\boldsymbol\beta, \sigma^2)$. Because the $y_t$ are independent conditional on $\mathbf{X}$, the likelihood function for the sample is obtained from the product of the densities:
$$\prod_{t=1}^{n}(2\pi\sigma^2)^{-1/2}\exp[-(y_t - \mathbf{x}_t\boldsymbol\beta)^2/(2\sigma^2)].$$
Maximizing this function with respect to $\boldsymbol\beta$ and $\sigma^2$ is the same as maximizing its natural logarithm:
$$\sum_{t=1}^{n}[-(1/2)\log(2\pi\sigma^2) - (y_t - \mathbf{x}_t\boldsymbol\beta)^2/(2\sigma^2)].$$
For obtaining $\hat{\boldsymbol\beta}$, this is the same as minimizing $\sum_{t=1}^{n}(y_t - \mathbf{x}_t\boldsymbol\beta)^2$ (the division by $2\sigma^2$ does not affect the optimization), which is just the problem that OLS solves. The estimator of $\sigma^2$ that we have used, SSR$/(n - k)$, turns out not to be the MLE of $\sigma^2$; the MLE is SSR$/n$, which is a biased estimator. Because the unbiased estimator of $\sigma^2$ results in $t$ and $F$ statistics with exact $t$ and $F$ distributions under the null, it is always used instead of the MLE.

SUMMARY

This appendix has provided a brief discussion of the linear regression model using matrix notation. This material is included for more advanced classes that use matrix algebra, but it is not needed to read the text.
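The MLE argument above can be checked numerically. Assuming a hypothetical five-observation data set and using the closed-form OLS formulas for the special case of an intercept plus one regressor ($k = 2$), the sketch below evaluates the normal log-likelihood written above (the function name `loglik` is ours) and confirms that, at $\hat{\boldsymbol\beta}$, the choice $\sigma^2 = \mathrm{SSR}/n$ gives a higher log-likelihood than the unbiased $\mathrm{SSR}/(n-k)$, and that moving $\boldsymbol\beta$ away from $\hat{\boldsymbol\beta}$ lowers it.

```python
import math

def loglik(beta0, beta1, sigma2, x, y):
    """Sum over t of -(1/2)log(2*pi*sigma2) - (y_t - beta0 - beta1*x_t)^2 / (2*sigma2)."""
    return sum(-0.5 * math.log(2 * math.pi * sigma2)
               - (yt - beta0 - beta1 * xt) ** 2 / (2 * sigma2)
               for xt, yt in zip(x, y))

# Hypothetical data (n = 5) and k = 2 parameters: intercept and slope.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [3.5, 4.7, 7.1, 8.8, 11.4]
n, k = len(x), 2

# Closed-form OLS for a simple regression (a special case of (X'X)^{-1} X'y).
xbar, ybar = sum(x) / n, sum(y) / n
b1 = sum((xt - xbar) * (yt - ybar) for xt, yt in zip(x, y)) / sum((xt - xbar) ** 2 for xt in x)
b0 = ybar - b1 * xbar
ssr = sum((yt - b0 - b1 * xt) ** 2 for xt, yt in zip(x, y))

sigma2_mle = ssr / n             # maximizes the likelihood but is biased
sigma2_unbiased = ssr / (n - k)  # the estimator used for exact t and F inference

print(loglik(b0, b1, sigma2_mle, x, y), loglik(b0, b1, sigma2_unbiased, x, y))
```

The first printed value is the larger one; the gap reflects only the choice of $\sigma^2$, since both evaluations use the same $\hat{\boldsymbol\beta}$.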
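In effect, this appendix proves some of the results that we either stated without proof, proved only in special cases, or proved through a more cumbersome method of proof. Other topics, such as asymptotic properties, instrumental variables estimation, and panel data models, can be given concise treatments using matrices. Advanced texts in econometrics, including Davidson and MacKinnon (1993), Greene (1997), and Wooldridge (1999), can be consulted for details.

KEY TERMS
First Order Condition
Matrix Notation
Minimum Variance Unbiased
Scalar Variance-Covariance Matrix
Variance-Covariance Matrix of the OLS Estimator

PROBLEMS

E.1 Let $\mathbf{x}_t$ be the $1 \times k$ vector of explanatory variables for observation $t$. Show that the OLS estimator $\hat{\boldsymbol\beta}$ can be written as
$$\hat{\boldsymbol\beta} = \left(\sum_{t=1}^{n}\mathbf{x}_t'\mathbf{x}_t\right)^{-1}\left(\sum_{t=1}^{n}\mathbf{x}_t'y_t\right).$$
Dividing each summation by $n$ shows that $\hat{\boldsymbol\beta}$ is a function of sample averages.

E.2 Let $\hat{\boldsymbol\beta}$ be the $k \times 1$ vector of OLS estimates.
(i) Show that for any $k \times 1$ vector $\mathbf{b}$, we can write the sum of squared residuals as
$$\mathrm{SSR}(\mathbf{b}) = \hat{\mathbf{u}}'\hat{\mathbf{u}} + (\hat{\boldsymbol\beta} - \mathbf{b})'\mathbf{X}'\mathbf{X}(\hat{\boldsymbol\beta} - \mathbf{b}).$$
[Hint: Write $(\mathbf{y} - \mathbf{X}\mathbf{b})'(\mathbf{y} - \mathbf{X}\mathbf{b}) = [\hat{\mathbf{u}} + \mathbf{X}(\hat{\boldsymbol\beta} - \mathbf{b})]'[\hat{\mathbf{u}} + \mathbf{X}(\hat{\boldsymbol\beta} - \mathbf{b})]$ and use the fact that $\mathbf{X}'\hat{\mathbf{u}} = \mathbf{0}$.]
(ii) Explain how the expression for $\mathrm{SSR}(\mathbf{b})$ in part (i) proves that $\hat{\boldsymbol\beta}$ uniquely minimizes $\mathrm{SSR}(\mathbf{b})$ over all possible values of $\mathbf{b}$, assuming $\mathbf{X}$ has rank $k$.

E.3 Let $\hat{\boldsymbol\beta}$ be the OLS estimate from the regression of $\mathbf{y}$ on $\mathbf{X}$. Let $\mathbf{A}$ be a $k \times k$ nonsingular matrix and define $\mathbf{z}_t \equiv \mathbf{x}_t\mathbf{A}$, $t = 1, \ldots, n$. Therefore, $\mathbf{z}_t$ is $1 \times k$ and is a nonsingular linear combination of $\mathbf{x}_t$. Let $\mathbf{Z}$ be the $n \times k$ matrix with rows $\mathbf{z}_t$. Let $\tilde{\boldsymbol\beta}$ denote the OLS estimate from a regression of $\mathbf{y}$ on $\mathbf{Z}$.
(i) Show that $\tilde{\boldsymbol\beta} = \mathbf{A}^{-1}\hat{\boldsymbol\beta}$.
(ii) Let $\hat y_t$ be the fitted values from the original regression and let $\tilde y_t$ be the fitted values from regressing $\mathbf{y}$ on $\mathbf{Z}$. Show that $\tilde y_t = \hat y_t$, for all $t = 1, 2, \ldots, n$.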
How do the residuals from the two regressions compare?
(iii) Show that the estimated variance matrix for $\tilde{\boldsymbol\beta}$ is $\hat\sigma^2\mathbf{A}^{-1}(\mathbf{X}'\mathbf{X})^{-1}(\mathbf{A}^{-1})'$, where $\hat\sigma^2$ is the usual variance estimate from regressing $\mathbf{y}$ on $\mathbf{X}$.
(iv) Let the $\hat\beta_j$ be the OLS estimates from regressing $y_t$ on $1, x_{t2}, \ldots, x_{tk}$, and let the $\tilde\beta_j$ be the OLS estimates from the regression of $y_t$ on $1, a_2x_{t2}, \ldots, a_kx_{tk}$, where $a_j \neq 0$, $j = 2, \ldots, k$. Use the results from part (i) to find the relationship between the $\tilde\beta_j$ and the $\hat\beta_j$.
(v) Assuming the setup of part (iv), use part (iii) to show that $\mathrm{se}(\tilde\beta_j) = \mathrm{se}(\hat\beta_j)/|a_j|$.
(vi) Assuming the setup of part (iv), show that the absolute values of the $t$ statistics for $\tilde\beta_j$ and $\hat\beta_j$ are identical.
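Parts (iv) to (vi) of Problem E.3 can be previewed numerically before proving them. The sketch below is a numerical check, not a proof: it uses hypothetical data, a made-up scaling factor $a = -4$, and the special case $k = 2$ (intercept plus one regressor), so the closed-form simple-regression formulas stand in for $(\mathbf{X}'\mathbf{X})^{-1}\mathbf{X}'\mathbf{y}$. The function name `simple_ols` is ours. It rescales the regressor, reruns OLS, and compares the slope, its standard error, its $t$ statistic and the fitted values.

```python
import math

def simple_ols(x, y):
    """Intercept, slope, se(slope), t(slope) and fitted values for y on (1, x)."""
    n = len(x)
    xbar, ybar = sum(x) / n, sum(y) / n
    sxx = sum((xt - xbar) ** 2 for xt in x)
    b1 = sum((xt - xbar) * (yt - ybar) for xt, yt in zip(x, y)) / sxx
    b0 = ybar - b1 * xbar
    fitted = [b0 + b1 * xt for xt in x]
    sigma2_hat = sum((yt - ft) ** 2 for yt, ft in zip(y, fitted)) / (n - 2)
    se1 = math.sqrt(sigma2_hat / sxx)    # sigma_hat * sqrt(c_22) in the simple regression
    return b0, b1, se1, b1 / se1, fitted

x = [1.0, 2.0, 3.0, 4.0, 5.0]            # hypothetical data
y = [3.5, 4.7, 7.1, 8.8, 11.4]
a = -4.0                                 # hypothetical rescaling factor, a != 0

orig = simple_ols(x, y)
scaled = simple_ols([a * xt for xt in x], y)
print("slope:", orig[1], "->", scaled[1])
print("se:   ", orig[2], "->", scaled[2])
print("t:    ", orig[3], "->", scaled[3])
```

With $a < 0$ the $t$ statistic changes sign but not absolute value, which is why part (vi) is stated in terms of absolute values.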

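Essential Reading and Classic Literature for Graduate and Doctoral Students in Finance and Economics

I. Recommended Books in Economic Theory

1. Samuelson: Economics (18th edition), China Posts & Telecom Press, 2006.

2. Mankiw: Principles of Economics, Peking University Press and Shanghai Sanlian Publishing, 1999.

Macroeconomics, Shanghai University of Finance and Economics Press.

3. Marx: Capital, People's Publishing House, 2004.

4. Stiglitz: Economics (4th edition), China Renmin University Press.

5. Yang Xiaokai: Development Economics: Inframarginal versus Marginal Analysis, Social Sciences Academic Press, 2006.

Economics: New Classical versus Neoclassical Frameworks, Social Sciences Academic Press, 1st edition (2003).

6. Romer: Advanced Macroeconomics, Shanghai University of Finance and Economics Press.

7. Varian: Intermediate Microeconomics: A Modern Approach (7th edition), Truth & Wisdom Press.

8. Pindyck: Microeconomics (7th edition), China Renmin University Press.

9. Ljungqvist: Recursive Macroeconomic Theory, China Renmin University Press.

10. Woodford: Interest and Prices: Foundations of a Theory of Monetary Policy, China Renmin University Press.

11. Barro: Economic Growth (2nd edition), Truth & Wisdom Press (November 1, 2010).

12. Philippe Aghion: Endogenous Growth Theory, Peking University Press.

13. Alpha C. Chiang: Fundamental Methods of Mathematical Economics, Peking University Press.

14. Xiao Hongye: Advanced Microeconomics, China Financial Publishing House, 2003.

15. Gong Liutang: Advanced Macroeconomics, Wuhan University Press, 2005.

16. Adam Smith: An Inquiry into the Nature and Causes of the Wealth of Nations, The Commercial Press, 2004.

17. Thirlwall: Growth and Development, China Financial and Economic Publishing House, 2001.

18. Ekelund and Hébert: A History of Economic Theory and Method, China Renmin University Press, 1996.

19. Xue Qiuzhi: Behavioral Economics, Fudan University Press, 2003.

20. Yujiro Hayami and Yoshihisa Godo: Development Economics: From the Poverty to the Wealth of Nations, Social Sciences Academic Press, 2008.

21. Angus Maddison (UK): The World Economy: A Millennial Perspective, Peking University Press.

22. Keynes: The General Theory of Employment, Interest and Money, The Commercial Press, 2004.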

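Recommended Econometrics Textbooks

[The Content Structure of Econometrics]

Econometrics in the narrow sense aims to uncover causal relationships in economic phenomena and relies mainly on regression analysis. Econometrics in the broad sense comprises the quantitative methods that use economic theory, statistics, and mathematics to study economic phenomena; besides regression analysis, it also includes input-output analysis, time series analysis, and other methods.

Econometrics can be divided into three levels: introductory, intermediate, and advanced. Introductory econometrics generally covers the necessary background in mathematical statistics and matrix algebra, the theory and methods of the classical linear econometric model (mainly single-equation models), and applications of single-equation models. Intermediate econometrics centers on the theory, methods, and applications of the classical linear econometric model, including both single-equation and simultaneous-equation models. On the applied side, it mainly discusses the use of econometric models in traditional areas such as production, demand, consumption, investment, money demand, and macroeconomic systems, with an emphasis on handling the practical problems that arise in applied work; matrix notation is used throughout. Advanced econometrics focuses on extended linear models, nonlinear models, and dynamic models, together with their theory, methods, and applications.

Reviews and recommendations of econometrics textbooks: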


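Gujarati, Basic Econometrics

白砂堤津耶, Learning Econometrics Through Examples

Wooldridge, Introductory Econometrics: A Modern Approach

Stock and Watson, Introduction to Econometrics

William Greene, Econometric Analysis

Fumio Hayashi, Econometrics

Takeshi Amemiya, Advanced Econometrics

[Assessment of the Book] The book proceeds from the elementary to the advanced. It begins with a review of the foundations of probability and statistics and then, in a comprehensive treatment of regression, covers topics such as program evaluation, panel data methods, and regression with time series data. Its organization and exposition are distinctive, and it captures the essence of contemporary applied econometrics.

[Reader Comments]

Morrow (online reviewer): This book covers slightly less than Wooldridge's book. For example, the panel data material covers only the fixed effects model and not the random effects model, and the treatment of limited dependent variables omits the Tobit model and truncated and censored models. But everything it does cover is explained clearly; the time series part in particular is explained more clearly than in Wooldridge's book.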

Introductory Econometrics: A Modern Approach, 4th Edition: Answers to Exercises

DATA SET *****K
Introductory Econometrics: A Modern Approach, 4e
Jeffrey M. Wooldridge

This document contains a listing of all data sets that are provided with the fourth edition of Introductory Econometrics: A Modern Approach. For each data set, I list its source (wherever possible), where it is used or mentioned in the text (if it is), and, in some cases, notes on how an instructor might use the data set to generate new homework exercises, exam problems, or term projects. In some cases, I suggest ways to improve the data sets.

Special thanks to Edmund Wooldridge, who provided valuable assistance in updating the page numbers for the fourth edition.

401K.RAW
Source: L.E. Papke (1995), “Participation in and Contributions to 401(k) Pension Plans: Evidence from Plan Data,” Journal of Human Resources 30, 311-325.
Professor Papke kindly provided these data. She gathered them from the Internal Revenue Service’s Form 5500 tapes.
Used in Text: pages 64, 80, 135-136, 173, 217, 685-686
Notes: This data set is used in a variety of ways in the text. One additional possibility is to investigate whether the coefficients from the regression of prate on mrate and log(totemp) differ by whether the plan is a sole plan. The Chow test (see Section 7.4), and the less restrictive version that allows different intercepts, can be used.

401KSUBS.RAW
Source: A. Abadie (2003), “Semiparametric Instrumental Variable Estimation of Treatment Response Models,” Journal of Econometrics 113, 231-263.
Professor Abadie kindly provided these data. He obtained them from the 1991 Survey of Income and Program Participation (SIPP).
Used in Text: pages 165, 182, 222, 261, 279-280, 288, 298-299, 336, 542
Notes: This data set can also be used to illustrate the binary response models, probit and logit, in Chapter 17, where, say, pira (an indicator for having an individual retirement account) is the dependent variable, and e401k [the 401(k) eligibility indicator] is the key explanatory variable.

*****.RAW
Source: Data from the National Highway Traffic Safety Administration: “A Digest of State Alcohol-Highway Safety Related Legislation,” U.S. Department of Transportation, NHTSA. I used the third (1985), eighth (1990), and 13th (1995) editions.
Used in Text: not used
Notes: This is not so much a data set as a summary of so-called “administrative per se” laws at the state level, for three different years. It could be supplemented with drunk-driving fatalities for a nice econometric analysis. In addition, the data for 2000 or later years can be added, forming the basis for a term project. Many other explanatory variables could be included. Unemployment rates, state-level tax rates on alcohol, and membership in MADD are just a few possibilities.

*****.RAW
Source: R.C. Fair (1978), “A Theory of Extramarital Affairs,” Journal of Political Economy 86, 45-61.
I collected the data from Professor Fair’s web site at the Yale University Department of Economics. He originally obtained the data from a survey by Psychology Today.
Used in Text: not used
Notes: This is an interesting data set for problem sets, starting in Chapter 7. Even though naffairs (number of extramarital affairs a woman reports) is a count variable, a linear model can be used as a decent approximation. Or, you could ask the students to estimate a linear probability model for the binary indicator affair, equal to one if the woman reports having any extramarital affairs. One possibility is to test whether including the single marriage rating variable, ratemarr, is enough, against the alternative that a full set of dummy variables is needed; see pages 237-238 for a similar example. This is also a good data set to illustrate Poisson regression (using naffairs) in Section 17.3 or probit and logit (using affair) in Section 17.1.

*****.RAW
Source: Jiyoung Kwon, a doctoral candidate in economics at MSU, kindly provided these data, which she obtained from the Domestic Airline Fares Consumer Report by the U.S. Department of Transportation.
Used in Text: pages 501-502, 573
Notes: This data set nicely illustrates the different estimates obtained when applying pooled OLS, random effects, and fixed effects.

APPLE.RAW
Source: These data were used in the doctoral dissertation of Jeffrey Blend, Department of Agricultural Economics, Michigan State University, 1998. The thesis was supervised by Professor Eileen van Ravensway. Drs. Blend and van Ravensway kindly provided the data, which were obtained from a telephone survey conducted by the Institute for Public Policy and Social Research at MSU.
Used in Text: pages 199, 222, 263, 618
Notes: This data set is close to a true experimental data set because the price pairs facing a family were randomly determined. In other words, the family head was presented with prices for the eco-labeled and regular apples, and then asked how much of each kind of apple they would buy at the given prices. As predicted by basic economics, the own price effect is strongly negative and the cross price effect is strongly positive. While the main dependent variable, ecolbs, piles up at zero, estimating a linear model is still worthwhile. Interestingly, because the survey design induces a strong positive correlation between the prices of eco-labeled and regular apples, there is an omitted variable problem if either of the price variables is dropped from the demand equation. A good exam question is to show a simple regression of ecolbs on ecoprc and then a multiple regression on both prices, and ask students to decide whether the price variables must be positively or negatively correlated.

*****.RAW
Sources: Peterson's Guide to Four Year Colleges, 1994 and 1995 (24th and 25th editions). Princeton University Press. Princeton, NJ.
The Official 1995 College Basketball Records Book, 1994, NCAA.
1995 Information Please Sports Almanac (6th edition). Houghton Mifflin. New York, NY.
Used in Text: page 690
Notes: These data were collected by Patrick Tulloch, an MSU economics major, for a term project. The “athletic success” variables are for the year prior to the enrollment and academic data. Updating these data to get a longer stretch of years, and including appearances in the “Sweet 16” NCAA basketball tournaments, would make for a more convincing analysis. With the growing popularity of women’s sports, especially basketball, an analysis that includes success in women’s athletics would be interesting.

*****.RAW
Sources: Peterson's Guide to Four Year Colleges, 1995 (25th edition). Princeton University Press.
1995 Information Please Sports Almanac (6th edition). Houghton Mifflin. New York, NY.
Used in Text: page 690
Notes: These data were collected by Paul Anderson, an MSU economics major, for a term project. The score from football outcomes for natural rivals (Michigan-Michigan State, California-Stanford, Florida-Florida State, to name a few) is matched with application and academic data. The application and tuition data are for Fall 1994. Football records and scores are from the 1993 football season. Extending these data to obtain a long stretch of panel data and other “natural” rivals could be very interesting.

ATTEND.RAW
Source: These data were collected by Professors Ronald Fisher and Carl Liedholm during a term in which they both taught principles of microeconomics at Michigan State University. Professors Fisher and Liedholm kindly gave me permission to use a random subset of their data, and their research assistant at the time, Jeffrey Guilfoyle, who completed his Ph.D. in economics at MSU, provided helpful hints.
Used in Text: pages 111, 151, 198-199, 220-221
Notes: The attendance figures were obtained by requiring students to slide their ID cards through a magnetic card reader, under the supervision of a teaching assistant. You might have the students use final, rather than the standardized variable, so that they can see that the statistical significance of each variable remains exactly the same. The standardized variable is used only so that the coefficients measure effects in terms of standard deviations from the average score.

AUDIT.RAW
Source: These data come from a 1988 Urban Institute audit study in the Washington, D.C. area. I obtained them from the article “The Urban Institute Audit Studies: Their Methods and Findings,” by James J. Heckman and Peter Siegelman. In Fix, M. and Struyk, R., eds., Clear and Convincing Evidence: Measurement of Discrimination in America. Washington, D.C.: Urban Institute Press, 1993, 187-258.
Used in Text: pages 768-769, 776, 779

BARIUM.RAW
Source: C.M. Krupp and P.S. Pollard (1999), “Evidence from the U.S. Chemical Industry,” Canadian Journal of Economics 29, 199-227.
Dr. Krupp kindly provided the data. They are monthly data covering February 1978 through December 1988.
Used in Text: pages 357-358, 369, 373, 418, 422-423, 440, 655, 657, 665
Note: Rather than just having intercept shifts for the different regimes, one could conduct a full Chow test across the different regimes.

BEAUTY.RAW
Source: Hamermesh, D.S. and J.E. Biddle (1994), “Beauty and the Labor Market,” American Economic Review 84, 1174-1194.
Professor Hamermesh kindly provided me with the data. For manageability, I have included only a subset of the variables, which results in somewhat larger sample sizes than reported for the regressions in the Hamermesh and Biddle paper.
Used in Text: pages 236-237, 262-263

BWGHT.RAW
Source: J. Mullahy (1997), “Instrumental-Variable Estimation of Count Data Models: Applications to Models of Cigarette Smoking Behavior,” Review of Economics and Statistics 79, 596-593.
Professor Mullahy kindly provided the data. He obtained them from the 1988 National Health Interview Survey.
Used in Text: pages 18, 62, 110, 150-151, 164, 176, 182, 184-187, 255-256, 515-516

BWGHT2.RAW
Source: Dr. Zhehui Luo, a recent MSU Ph.D. in economics and Visiting Research Associate in the Department of Epidemiology at MSU, kindly provided these data. She obtained them from state files linking birth and infant death certificates, and from the National Center for Health Statistics natality and mortality data.
Used in Text: pages 165, 211-222
Notes: There are many possibilities with this data set. In addition to number of prenatal visits, smoking and alcohol consumption (during pregnancy) are included as explanatory variables. These can be added to equations of the kind found in Exercise C6.10. In addition, the one- and five-minute APGAR scores are included. These are measures of the well-being of infants just after birth. An interesting feature of the score is that it is bounded between zero and 10, making a linear model less than ideal. Still, a linear model would be informative, and you might ask students about predicted values less than zero or greater than 10.

CAMPUS.RAW
Source: These data were collected by Daniel Martin, a former MSU undergraduate, for a final project. They come from the FBI Uniform Crime Reports and are for the year 1992.
Used in Text: pages 130-131


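Section 3.2 Variance

I. Concept and properties of the variance of a random variable
II. Variances of important probability distributions
III. Worked examples
IV. The concept of moments
V. Summary

I. Concept and Properties of the Variance of a Random Variable

1. Definition of variance (Definition 3.3)

Let $X$ be a random variable. If $E\{[X - E(X)]^2\}$ exists, it is called the variance of $X$, denoted $D(X)$ or $\sigma^2(X)$, that is,
$$D(X) = \sigma^2(X) = E\{[X - E(X)]^2\}.$$
$\sqrt{D(X)}$ is called the standard deviation (or root-mean-square deviation) of $X$, denoted $\sigma(X)$.

2. Meaning of variance

The variance measures how widely the values of a random variable $X$ are dispersed. A large $D(X)$ means that the values of $X$ are widely spread and $E(X)$ is a poor representative of $X$; a small $D(X)$ means that the values of $X$ are concentrated, so $E(X)$ represents the random variable well.

3. Computing the variance

(1) From the definition

For a discrete random variable,
$$D(X) = \sum_{k=1}^{\infty}[x_k - E(X)]^2 p_k,$$
where $P\{X = x_k\} = p_k$, $k = 1, 2, \ldots$, is the distribution law of $X$.

For a continuous random variable,
$$D(X) = \int_{-\infty}^{+\infty}[x - E(X)]^2 p(x)\,dx,$$
where $p(x)$ is the probability density of $X$.

(2) From the formula $D(X) = E(X^2) - [E(X)]^2$.

Proof:
$$D(X) = E\{[X - E(X)]^2\} = E\{X^2 - 2XE(X) + [E(X)]^2\} = E(X^2) - 2E(X)E(X) + [E(X)]^2 = E(X^2) - [E(X)]^2 = E(X^2) - E^2(X).$$

4. Properties of variance

(1) If $C$ is a constant, then $D(C) = 0$.
Proof: $D(C) = E(C^2) - [E(C)]^2 = C^2 - C^2 = 0$.

(2) If $X$ is a random variable and $C$ is a constant, then $D(CX) = C^2D(X)$.
Proof:
$$D(CX) = E\{[CX - E(CX)]^2\} = C^2E\{[X - E(X)]^2\} = C^2D(X).$$

(3) If $X$ and $Y$ are independent and $D(X)$, $D(Y)$ exist, then $D(X + Y) = D(X) + D(Y)$.
Proof:
$$D(X + Y) = E\{[(X + Y) - E(X + Y)]^2\} = E\{[X - E(X)] + [Y - E(Y)]\}^2 = E[X - E(X)]^2 + E[Y - E(Y)]^2 + 2E\{[X - E(X)][Y - E(Y)]\} = D(X) + D(Y).$$
The cross term vanishes because, by independence, $E\{[X - E(X)][Y - E(Y)]\} = E[X - E(X)]\,E[Y - E(Y)] = 0$.

Generalization: if $X_1, X_2, \ldots, X_n$ are mutually independent, then
$$D(a_1X_1 + a_2X_2 + \cdots + a_nX_n) = a_1^2D(X_1) + a_2^2D(X_2) + \cdots + a_n^2D(X_n).$$

(4) $D(X) = 0$ if and only if $X$ takes a constant value $C$ with probability 1, that is, $P\{X = C\} = 1$.

(5) If $C \neq E(X)$, then $D(X) < E(X - C)^2$.

II. Variances of Important Probability Distributions

1. Two-point distribution

Suppose the distribution law of $X$ is
$$\begin{array}{c|cc} X & 1 & 0 \\ \hline p_k & p & 1 - p \end{array}$$
Then, with $q = 1 - p$,
$$E(X) = 1 \cdot p + 0 \cdot q = p,$$
$$D(X) = E(X^2) - [E(X)]^2 = 1^2 \cdot p + 0^2 \cdot (1 - p) - p^2 = pq.$$

2. Binomial distribution

Let $X$ follow a binomial distribution with parameters $n, p$, with distribution law
$$P\{X = k\} = \binom{n}{k}p^k(1 - p)^{n-k}, \quad k = 0, 1, 2, \ldots, n, \quad 0 < p < 1.$$
Then
$$E(X) = \sum_{k=0}^{n}k\binom{n}{k}p^k(1 - p)^{n-k} = \sum_{k=0}^{n}\frac{k\,n!}{k!(n - k)!}p^k(1 - p)^{n-k} = np\sum_{k=1}^{n}\frac{(n - 1)!}{(k - 1)![(n - 1) - (k - 1)]!}p^{k-1}(1 - p)^{(n-1)-(k-1)} = np[p + (1 - p)]^{n-1} = np.$$
For the variance, $D(X) = E(X^2) - [E(X)]^2$, where
$$E(X^2) = E[X(X - 1) + X] = E[X(X - 1)] + E(X) = \sum_{k=0}^{n}k(k - 1)\binom{n}{k}p^k(1 - p)^{n-k} + np = \sum_{k=0}^{n}\frac{k(k - 1)\,n!}{k!(n - k)!}p^k(1 - p)^{n-k} + np$$
$$= n(n - 1)p^2\sum_{k=2}^{n}\frac{(n - 2)!}{(k - 2)!(n - k)!}p^{k-2}(1 - p)^{(n-2)-(k-2)} + np = n(n - 1)p^2[p + (1 - p)]^{n-2} + np = (n^2 - n)p^2 + np.$$
Therefore
$$D(X) = E(X^2) - [E(X)]^2 = (n^2 - n)p^2 + np - (np)^2 = np(1 - p).$$

3. Poisson distribution

Let $X \sim P(\lambda)$ with distribution law
$$P\{X = k\} = \frac{\lambda^k e^{-\lambda}}{k!}, \quad k = 0, 1, 2, \ldots, \quad \lambda > 0.$$
Then
$$E(X) = \sum_{k=0}^{\infty}k\frac{\lambda^k e^{-\lambda}}{k!} = \lambda e^{-\lambda}\sum_{k=1}^{\infty}\frac{\lambda^{k-1}}{(k - 1)!} = \lambda e^{-\lambda}e^{\lambda} = \lambda,$$
$$E(X^2) = E[X(X - 1) + X] = E[X(X - 1)] + E(X) = \sum_{k=0}^{\infty}k(k - 1)\frac{\lambda^k e^{-\lambda}}{k!} + \lambda = \lambda^2 e^{-\lambda}\sum_{k=2}^{\infty}\frac{\lambda^{k-2}}{(k - 2)!} + \lambda = \lambda^2 e^{-\lambda}e^{\lambda} + \lambda = \lambda^2 + \lambda.$$
So
$$D(X) = E(X^2) - [E(X)]^2 = \lambda^2 + \lambda - \lambda^2 = \lambda.$$
Both the expectation and the variance of the Poisson distribution equal the parameter $\lambda$.

4. Uniform distribution

Let $X \sim U(a, b)$, with probability density
$$f(x) = \begin{cases} \dfrac{1}{b - a}, & a < x < b, \\ 0, & \text{otherwise.} \end{cases}$$
Then
$$E(X) = \int_{-\infty}^{+\infty}xf(x)\,dx = \int_a^b\frac{1}{b - a}x\,dx = \frac{1}{2}(a + b).$$
Conclusion: the expectation of a uniform distribution lies at the midpoint of the interval.
$$D(X) = E(X^2) - [E(X)]^2 = \int_a^b x^2\frac{1}{b - a}\,dx - \left(\frac{a + b}{2}\right)^2 = \frac{(b - a)^2}{12}.$$

5. Exponential distribution

Let $X$ follow an exponential distribution with probability density
$$f(x) = \begin{cases} \lambda e^{-\lambda x}, & x > 0, \\ 0, & x \le 0, \end{cases} \qquad \text{where } \lambda > 0.$$
Then
$$E(X) = \int_{-\infty}^{+\infty}xf(x)\,dx = \int_0^{+\infty}x\lambda e^{-\lambda x}\,dx = 1/\lambda,$$
$$D(X) = E(X^2) - [E(X)]^2 = \int_0^{+\infty}x^2\lambda e^{-\lambda x}\,dx - 1/\lambda^2 = 2/\lambda^2 - 1/\lambda^2 = 1/\lambda^2.$$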
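The formulas above can be checked by brute force: compute $E(X)$ and $E(X^2)$ directly from the distribution law or density and apply $D(X) = E(X^2) - [E(X)]^2$. The sketch below uses exact sums for the two-point, binomial and Poisson cases (the Poisson sum is truncated at $k = 200$, an approximation that is harmless for moderate $\lambda$) and a midpoint-rule integral for the uniform and exponential cases (the exponential integral is cut off at a finite upper limit, another approximation). The parameter values and function names are arbitrary choices for illustration. It also checks properties (1) to (3).

```python
import math

def var_discrete(pmf):
    """D(X) = E(X^2) - [E(X)]^2 for a list of (value, probability) pairs."""
    ex = sum(x * p for x, p in pmf)
    ex2 = sum(x * x * p for x, p in pmf)
    return ex2 - ex ** 2

def binomial_pmf(n, p):
    return [(k, math.comb(n, k) * p ** k * (1 - p) ** (n - k)) for k in range(n + 1)]

def poisson_pmf(lam, kmax=200):
    """Poisson(lam) distribution law truncated at kmax (an approximation)."""
    out, pk = [], math.exp(-lam)
    for k in range(kmax + 1):
        out.append((k, pk))
        pk *= lam / (k + 1)          # P{X = k+1} = P{X = k} * lam / (k + 1)
    return out

def var_continuous(f, lo, hi, steps=100_000):
    """Midpoint-rule approximation of E(X^2) - [E(X)]^2 for a density f on [lo, hi]."""
    h = (hi - lo) / steps
    xs = [lo + (i + 0.5) * h for i in range(steps)]
    ex = h * sum(x * f(x) for x in xs)
    ex2 = h * sum(x * x * f(x) for x in xs)
    return ex2 - ex ** 2

p, n, lam, a, b, rate = 0.3, 10, 3.0, 2.0, 5.0, 0.5      # arbitrary parameter values

two_point = var_discrete([(1, p), (0, 1 - p)])            # compare with p(1 - p)
binom = var_discrete(binomial_pmf(n, p))                   # compare with np(1 - p)
poisson = var_discrete(poisson_pmf(lam))                   # compare with lambda
uniform = var_continuous(lambda x: 1 / (b - a), a, b)      # compare with (b - a)^2 / 12
expo = var_continuous(lambda x: rate * math.exp(-rate * x), 0.0, 60 / rate)  # 1 / rate^2

# Properties: D(C) = 0, D(CX) = C^2 D(X), and D(X + Y) = D(X) + D(Y) for independent X, Y.
const = var_discrete([(7.0, 1.0)])
scaled = var_discrete([(3 * k, q) for k, q in binomial_pmf(n, p)])
indep_sum = var_discrete([(kx + ky, qx * qy)
                          for kx, qx in binomial_pmf(n, p)
                          for ky, qy in poisson_pmf(lam)])

print(two_point, binom, poisson, uniform, expo)
```

The independent-sum check builds the joint distribution as a product of the two marginal laws, which is exactly the independence assumption used in property (3); for dependent $X$ and $Y$ the cross term would not vanish.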